An organisation transferring 100TB of data between cloud providers each month is paying somewhere between £80,000 and £115,000 in egress charges annually, and that is before accounting for the cost of the network engineers debugging BGP flaps at 2am, the week lost when a colocation cross-connect was provisioned to the wrong cage, or the security audit that revealed private interconnects were carrying unencrypted production data because nobody read the small print. Multi-cloud is the operational reality for most enterprises: Flexera’s 2025 State of the Cloud report found that 89% of enterprises run workloads across more than one provider. What those adoption statistics do not capture is how consistently organisations underestimate the networking layer, treating it as a configuration task rather than an architectural discipline, and then spend years paying for that mistake.

The traditional approach has been to connect each cloud provider to the corporate WAN and route traffic through on-premises infrastructure. It works at small scale and fails badly at large scale. Once inter-cloud traffic volumes exceed a few terabytes per month, hairpinning everything through a data centre adds latency, saturates on-premises bandwidth, and turns the network team into a bottleneck for every new cloud workload. The alternative (direct cloud-to-cloud connectivity) introduces its own complexity: different routing models, incompatible ASN conventions, IP address ranges that overlap in ways nobody anticipated, and security controls that each provider implements differently.

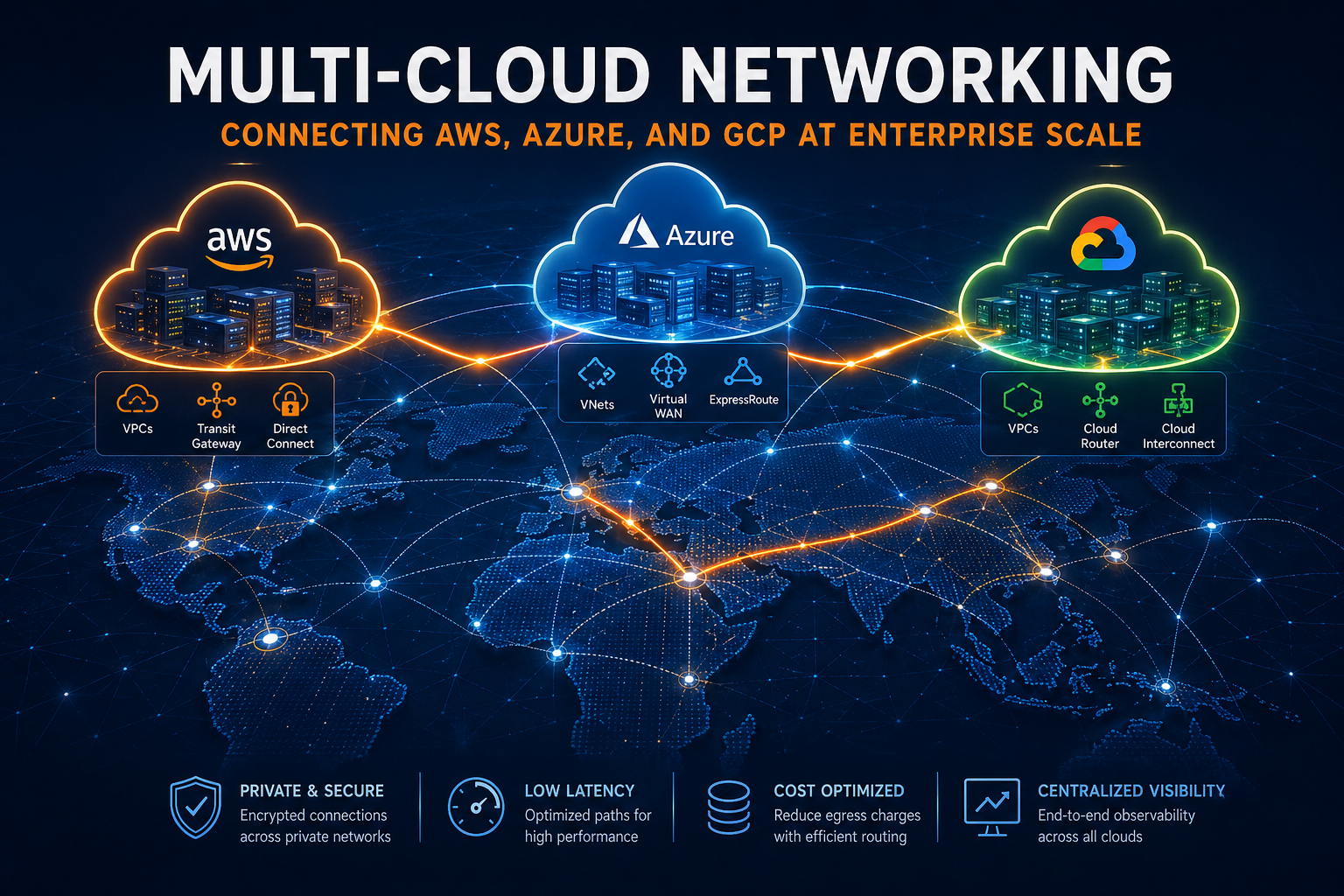

This post addresses both the intra-platform layer (how you build a scalable network within each cloud) and the inter-cloud layer (how you actually stitch AWS, Azure, and GCP together in production). It covers the three dominant hub architectures, the connectivity options for crossing cloud boundaries, the failure modes that vendor documentation does not mention, and the cost economics that determine when native VPN tunnels stop being acceptable. It also covers AWS Interconnect multicloud, which reached general availability in April 2026 and changes the calculus for AWS-GCP connectivity in ways that deserve attention from any architect planning a deployment this year.

The State of Multi-Cloud Networking in 2026

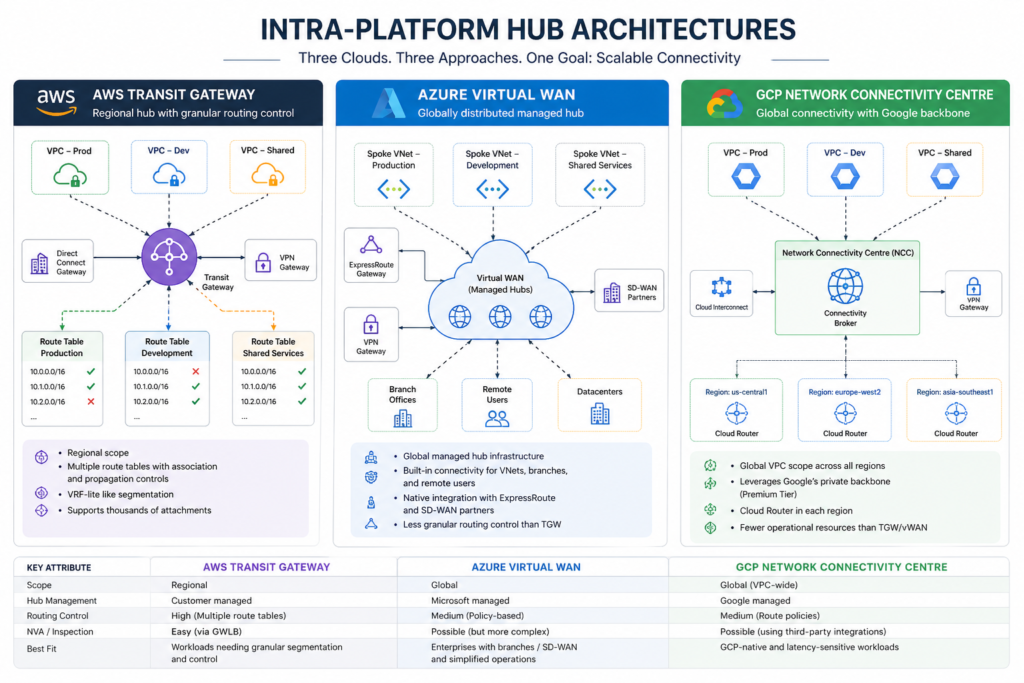

Multi-cloud networking has matured considerably in the last three years, but the maturity has been uneven. Within each provider, the hub-and-spoke networking model is well-understood: AWS Transit Gateway, Azure Virtual WAN, and GCP Network Connectivity Centre (NCC) have all moved from early-adopter territory into standard enterprise deployment. The tooling for managing VPCs, VNets, and firewall rules at scale is solid.

The inter-cloud layer is a different story. Until late 2025, connecting two hyperscalers privately meant either routing through customer-managed on-premises infrastructure or negotiating colocation arrangements with a network exchange provider such as Equinix or Megaport. Both approaches work, but both require physical infrastructure management that cloud teams are neither staffed nor incentivised to handle. The announcement of AWS Interconnect multicloud at re:Invent 2025, and its general availability in April 2026, represents the first attempt by the hyperscalers themselves to solve this problem at the managed-service layer. The service currently supports AWS-GCP connectivity; Azure integration is expected later in 2026.

The other significant shift is in egress pricing. AWS has moved AWS Interconnect multicloud to a flat-rate bandwidth model rather than per-GB charging, responding to sustained enterprise pressure on data transfer costs. This changes the break-even analysis for high-volume workloads in ways that the older economics did not accommodate.

Before getting into connectivity patterns, it is worth understanding why the intra-platform layer matters so much for inter-cloud architecture. The design of your hub within each cloud determines what your options are for crossing cloud boundaries, and some common hub configurations create constraints that only become apparent when you try to connect them.

The Intra-Platform Layer: Three Hubs, Three Design Philosophies

AWS Transit Gateway

AWS Transit Gateway (TGW) is the most mature and operationally flexible of the three hub services. It centralises VPC-to-VPC, VPN, and Direct Connect routing through a regional hub that supports thousands of attachments. Its defining feature is multiple route tables with association and propagation controls, the closest analogue to VRF-lite available in any hyperscaler networking product. A spoke VPC can be associated with one route table and propagate routes to others, which gives platform teams granular control over traffic segmentation without deploying separate hub VPCs per environment.
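The association and propagation semantics are easier to reason about with a toy model. The sketch below is not the AWS API; `TransitGatewayModel`, the attachment names, and the CIDR ranges are all hypothetical, and it assumes the simplest case of one CIDR per attachment.

```python
from dataclasses import dataclass, field


@dataclass
class TransitGatewayModel:
    """Toy model of TGW association and propagation (not the AWS API)."""

    # route table name -> {destination CIDR -> attachment that advertised it}
    route_tables: dict = field(default_factory=dict)
    # attachment name -> the single route table it is associated with
    associations: dict = field(default_factory=dict)

    def associate(self, attachment: str, table: str) -> None:
        # Traffic arriving from an attachment is looked up in the one
        # route table that attachment is associated with.
        self.route_tables.setdefault(table, {})
        self.associations[attachment] = table

    def propagate(self, attachment: str, cidr: str, table: str) -> None:
        # An attachment may propagate its routes into any number of tables.
        self.route_tables.setdefault(table, {})[cidr] = attachment

    def reachable(self, src: str, dst: str) -> bool:
        # src can send to dst only if dst's routes are in src's table.
        table = self.route_tables.get(self.associations.get(src), {})
        return dst in table.values()


tgw = TransitGatewayModel()
for vpc, table in [("prod", "rt-prod"), ("dev", "rt-dev"), ("shared", "rt-shared")]:
    tgw.associate(vpc, table)

# Shared services is visible to both environments, and sees both of them.
tgw.propagate("shared", "10.0.0.0/16", "rt-prod")
tgw.propagate("shared", "10.0.0.0/16", "rt-dev")
tgw.propagate("prod", "10.1.0.0/16", "rt-shared")
tgw.propagate("dev", "10.2.0.0/16", "rt-shared")

print(tgw.reachable("prod", "shared"))  # True
print(tgw.reachable("prod", "dev"))     # False
```

Because prod never propagates into `rt-dev` (and vice versa), the two environments stay isolated while both can reach shared services, all on one hub.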

The critical limitation is regional scope. A Transit Gateway exists within a single AWS Region, and cross-region connectivity requires explicit inter-region TGW peering with additional data transfer charges. Organisations with workloads across multiple regions typically build a spine of TGW peering connections, which works well but requires careful route table management to avoid creating asymmetric paths. For global-scale deployments, AWS Cloud WAN (which sits above TGW and provides a policy-driven global backbone) is becoming the preferred pattern, particularly because it integrates directly with AWS Interconnect multicloud for cross-provider routing.

For inter-cloud connectivity, TGW is the right attachment point when you need to serve multiple VPCs through a single Direct Connect gateway or Interconnect attachment. The Transit Gateway route tables give you the segmentation to keep, say, production AWS workloads separated from the shared-services VPC that connects to your Azure environment, without needing separate physical connections.

Azure Virtual WAN

Azure Virtual WAN takes a fundamentally different approach. Instead of a regional hub that you manage, vWAN provides globally distributed managed hub infrastructure: Microsoft operates the hub routers, and you do not deploy your own hub VNet. The hubs support BGP, provide built-in transit between branches and Azure VNets, and integrate natively with ExpressRoute and Azure SD-WAN partners. For enterprises with significant branch office infrastructure (particularly those already standardised on Cisco or VMware SD-WAN), vWAN’s automated hub-and-spoke model simplifies operations considerably.

The trade-off is reduced granular control. You cannot inspect traffic at the hub with your own NVA without additional configuration, routing policies are less flexible than TGW route tables, and the managed nature of the hub means debugging is harder when things go wrong. Engineers who want VRF-like isolation between security domains tend to find vWAN’s model frustrating compared to TGW.

For inter-cloud connectivity from Azure, ExpressRoute is the primary private connectivity mechanism to on-premises exchange points. Direct Azure-to-AWS or Azure-to-GCP private connectivity without going through on-premises infrastructure currently requires either a colocation exchange (Equinix Fabric, Megaport) or waiting for Azure’s integration with AWS Interconnect multicloud, which is expected but not yet available. This is a genuine limitation worth factoring into timing decisions.

GCP Network Connectivity Centre

GCP’s Network Connectivity Centre (NCC) acts as a connectivity broker that leverages Google’s private fibre backbone. The standout characteristic is Premium Tier networking: traffic entering NCC travels on Google’s private network from the nearest edge point rather than the public internet, giving GCP a measurable latency advantage for workloads that benefit from Google’s global PoP footprint. GCP’s VPC model is also globally scoped rather than regional: a single VPC spans all regions, which simplifies subnet planning considerably compared to AWS’s regional VPC model.

NCC has the smallest install base of the three hubs and fewer community resources than TGW or vWAN. That is a genuine operational consideration for teams without GCP networking expertise. It is not, however, an indication of technical capability: for workloads routing through Google’s backbone, NCC delivers transit performance that neither TGW nor vWAN can match, and its global VPC scope eliminates the regional segmentation complexity that AWS architects spend considerable time managing. The honest framing is that NCC is the right choice for GCP-native and AI-heavy workloads, and the harder choice for general-purpose enterprise deployments where ecosystem maturity matters more than backbone performance.

For inter-cloud connectivity, GCP’s Cross-Cloud Interconnect provides managed direct connections to AWS and Azure at colocation facilities. Combined with the new AWS Interconnect multicloud, GCP-AWS connectivity is now available as a fully managed service without customer-managed physical infrastructure.

The Inter-Cloud Layer: Connecting Clouds in Production

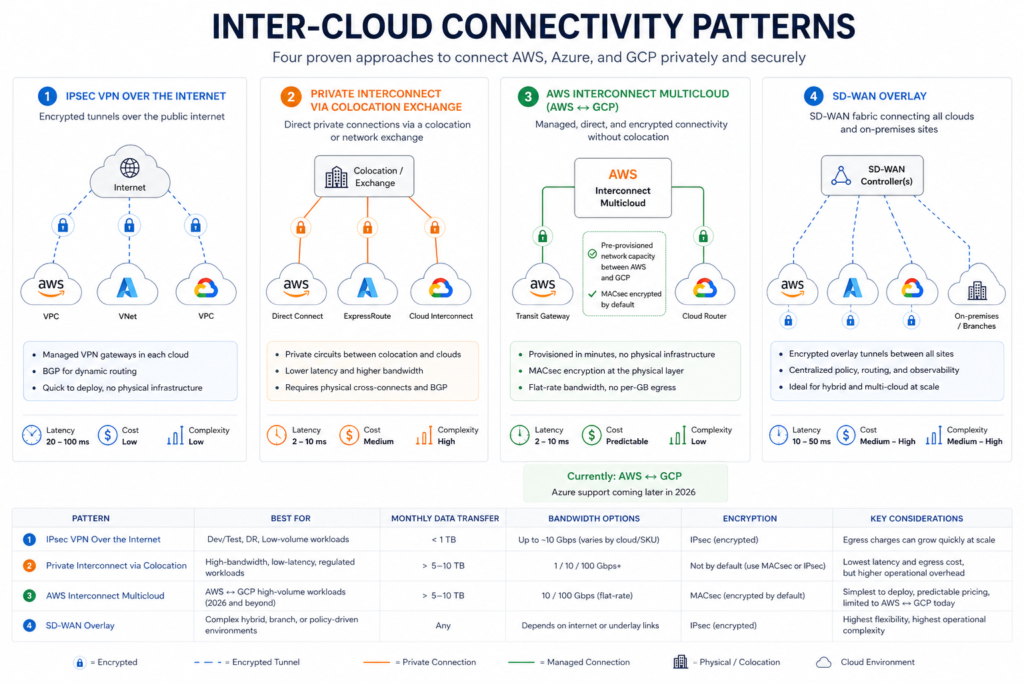

Connecting two or three clouds privately involves choosing from four connectivity patterns, each with different cost structures, operational complexity, and performance characteristics.

Pattern 1: IPsec VPN Over the Internet

The simplest inter-cloud connectivity option uses the managed VPN services each provider offers (AWS Site-to-Site VPN, Azure VPN Gateway, and GCP HA VPN) to build encrypted tunnels between cloud environments. All three support BGP for dynamic route exchange. Bandwidth varies by provider and SKU: GCP HA VPN provisions tunnels at 3Gbps each; AWS Transit Gateway VPN attachments support 1.25Gbps per tunnel but scale via ECMP across multiple tunnels; Azure VPN Gateway scales to 10Gbps on higher SKUs. For most inter-cloud use cases at low transfer volumes this is sufficient, but per-tunnel limits become a constraint as workloads grow.
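To relate monthly transfer volumes to the per-tunnel limits above, it helps to convert between the two. This is a rough sketch: the 30-day month and decimal terabytes are assumptions, and the function names are illustrative only.

```python
import math

SECONDS_PER_MONTH = 30 * 24 * 3600  # assumes a 30-day month


def average_mbps(tb_per_month: float) -> float:
    """Average throughput in Mbps for a monthly volume (1 TB = 10**12 bytes)."""
    bits = tb_per_month * 10**12 * 8
    return bits / SECONDS_PER_MONTH / 10**6


def tunnels_needed(peak_gbps: float, per_tunnel_gbps: float) -> int:
    """Minimum number of ECMP tunnels to carry a peak rate, with no headroom."""
    return math.ceil(peak_gbps / per_tunnel_gbps)


print(round(average_mbps(10), 1))  # 30.9
print(tunnels_needed(5, 1.25))     # 4
```

The average for 10TB per month is only about 31Mbps, so per-tunnel limits rarely bite on sustained volume. They matter for bursts: a 5Gbps peak would need at least four 1.25Gbps tunnels under ECMP, before allowing any headroom.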

This pattern is the right choice for development environments, disaster recovery standby configurations, and workloads transferring less than 1TB per month, where the simplicity and speed of deployment outweigh the performance limitations. Gateway costs run around £0.04 per hour per tunnel endpoint plus standard internet egress rates. Latency is variable: 20-100ms depending on internet routing conditions, which makes this pattern unsuitable for synchronous database replication or latency-sensitive applications.

The hidden cost to watch is egress at scale. AWS charges £0.07 per GB for the first 10TB of monthly internet egress; Azure charges around £0.068 per GB; GCP charges £0.09 per GB. A workload moving 10TB per month between clouds via internet VPN pays £700-900 in egress charges alone, every month, around £9,000-11,000 annually. This is before gateway fees. At 50TB per month, you are looking at £35,000-55,000 annually in egress, which is the point at which the economics of private interconnects become difficult to ignore.
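The egress arithmetic can be reproduced in a few lines. This sketch assumes decimal terabytes and applies the first-tier per-GB rates quoted above as flat rates at every volume. It ignores volume tier discounts, so it will not match rounded or tier-adjusted figures exactly.

```python
GB_PER_TB = 1000  # decimal terabytes; an assumption

# First-tier internet egress rates quoted in the text, in GBP per GB.
RATES = {"aws": 0.07, "azure": 0.068, "gcp": 0.09}


def monthly_egress(tb_per_month: float, rate_per_gb: float) -> float:
    """Monthly egress charge in GBP at a flat per-GB rate."""
    return round(tb_per_month * GB_PER_TB * rate_per_gb, 2)


def annual_egress(tb_per_month: float, rate_per_gb: float) -> float:
    """Annual egress charge in GBP, assuming constant monthly volume."""
    return round(monthly_egress(tb_per_month, rate_per_gb) * 12, 2)


print(monthly_egress(10, RATES["aws"]))  # 700.0
print(monthly_egress(10, RATES["gcp"]))  # 900.0
print(annual_egress(10, RATES["aws"]))   # 8400.0

for provider, rate in RATES.items():
    print(provider, annual_egress(50, rate))
```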

Pattern 2: Private Interconnect via Colocation Exchange

The traditional enterprise pattern for high-bandwidth, low-latency inter-cloud connectivity routes traffic through a colocation facility where all three providers have Direct Connect, ExpressRoute, and Cloud Interconnect presence. Major providers with this kind of multi-cloud PoP include Equinix, Megaport, and CoreSite. At the exchange point, you can establish private peering to all three clouds from a single physical location.

Private interconnects reduce egress charges substantially: AWS Direct Connect egress rates drop to approximately £0.016 per GB versus £0.07 per GB for internet egress, a reduction of roughly 77%. For workloads transferring 10TB per month, a 10Gbps Direct Connect circuit at approximately £1,300 per month plus reduced egress charges of around £160 per month produces a total cost of £1,460 per month, compared to approximately £2,000 per month using VPN connectivity. The break-even point is typically 5-10TB of monthly transfer, beyond which private interconnect is cheaper on a total cost basis.
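The private interconnect side of that comparison is simple to check. A minimal sketch, using only the port fee and per-GB rates quoted above and assuming decimal terabytes; the function names are illustrative. The VPN side is not modelled, because its total depends on gateway and tunnel fees that are not itemised here.

```python
def percent_reduction(old_rate: float, new_rate: float) -> float:
    """Percentage saving when moving from old_rate to new_rate."""
    return round((old_rate - new_rate) / old_rate * 100, 1)


def interconnect_monthly(port_fee: float, tb_per_month: float,
                         rate_per_gb: float, gb_per_tb: int = 1000) -> float:
    """Fixed port fee plus metered egress over the private circuit, in GBP."""
    return round(port_fee + tb_per_month * gb_per_tb * rate_per_gb, 2)


print(percent_reduction(0.07, 0.016))         # 77.1
print(interconnect_monthly(1300, 10, 0.016))  # 1460.0
```

Because the port fee is fixed, the effective cost per GB falls as volume rises, which is what drives the break-even behaviour.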

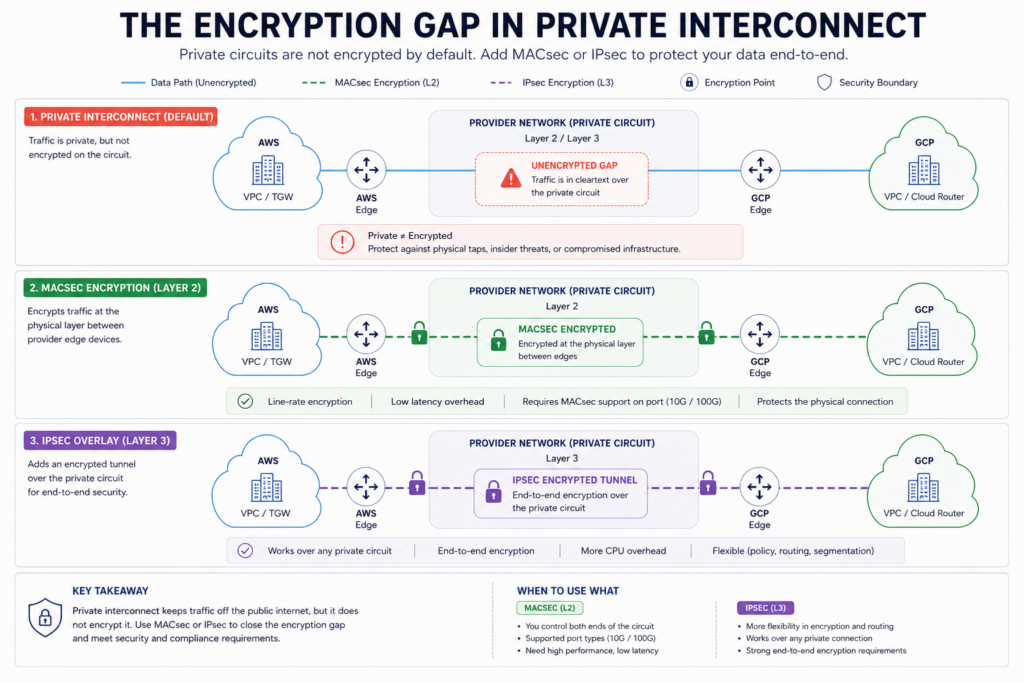

There is a critical security point that vendor documentation buries: private interconnects are not encrypted by default. AWS Direct Connect, Azure ExpressRoute, and GCP Cloud Interconnect provide private routing but operate at Layer 2/Layer 3 without encryption at the circuit level. For regulated workloads (anything subject to PCI-DSS, FCA requirements, or GDPR data protection obligations), you must add MACsec at the physical port level (supported on 10Gbps and 100Gbps dedicated connections) or run IPsec over the private circuit, creating an encrypted overlay inside the private path. This is a design decision that must be made at provisioning time, not retrofitted after the fact.

The operational complexity of this pattern is significant. You are managing physical cross-connects at colocation facilities, BGP sessions to multiple providers from a shared network device, and routing policies that must account for the different ASN models each provider uses. AWS recommends separate ASNs per cloud attachment; GCP requires specific ASN ranges for Cloud Router configurations. An organisation running BGP sessions to all three providers from a single colocation router needs careful route filtering to prevent accidental route redistribution between providers and to ensure that a BGP session reset on one provider’s side does not affect the others.

Pattern 3: AWS Interconnect Multicloud

Launched at re:Invent in November 2025 and reaching general availability in April 2026, AWS Interconnect multicloud is the first purpose-built managed service for direct cloud-to-cloud private connectivity without customer-managed physical infrastructure. AWS and Google Cloud have pre-provisioned network capacity at five region pairs (including London (eu-west-2) paired with Google Cloud London (europe-west2)), eliminating the need for colocation arrangements or BGP configuration on customer-managed devices.

The provisioning experience is a significant departure from traditional interconnect. You select your AWS Region, the paired Google Cloud Region, and your required bandwidth; both clouds provision and configure the physical infrastructure in minutes rather than the days or weeks that colocation-based interconnect requires. The connection is MACsec-encrypted by default at the physical layer between AWS and GCP edge devices, addressing the encryption gap that catches teams out with traditional private interconnects.

Pricing has moved to a flat-rate bandwidth model: you pay for the bandwidth capacity provisioned, with no per-GB egress charges within that capacity. For workloads with high transfer volumes or variable traffic patterns, this eliminates the egress unpredictability that has historically made multi-cloud data transfers expensive to forecast. The service integrates with Transit Gateway for regional AWS deployments, and with AWS Cloud WAN for global multi-region topologies.

The current limitation is scope. AWS-GCP is the only supported pairing at general availability; Azure integration is expected later in 2026, and until it arrives, AWS-Azure private connectivity still requires the colocation route or internet VPN. Organisations that need all three clouds connected today cannot rely solely on AWS Interconnect multicloud.

Pattern 4: SD-WAN Overlay

For enterprises managing three or more clouds alongside on-premises data centres and branch offices, SD-WAN provides the most operationally coherent approach at the cost of the highest upfront complexity. The architecture deploys SD-WAN virtual appliances (Cisco Catalyst 8000V is the most commonly deployed option, available on all three hyperscaler marketplaces) as instances in each cloud region. These cloud instances join the SD-WAN overlay as regular sites, establishing encrypted tunnels back to the SD-WAN controllers and to all other sites, including on-premises locations.

The management advantage is consistency: routing policy, security policy, QoS, and traffic steering apply uniformly across all sites from a single management plane, regardless of which cloud or physical location the traffic is crossing. An application team deploying in a new cloud region does not need to engage the network team to provision a new circuit; they add a new SD-WAN site and inherit the existing policy. This model is operationally compelling once running, but requires significant investment to deploy correctly and demands SD-WAN expertise that many cloud-native platform teams do not have in-house.

The Problems Nobody Documents

IP Address Management

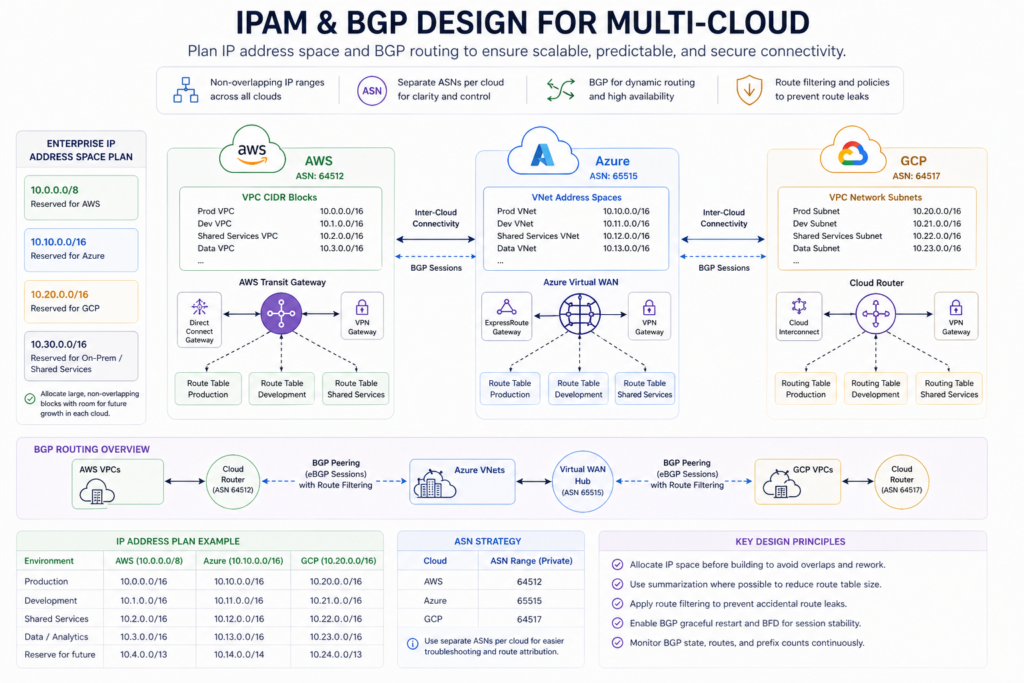

IP address management is the foundational challenge of multi-cloud networking and the one that causes the most production incidents. Every VPC and VNet starts with an RFC 1918 CIDR block from the 10.0.0.0/8, 172.16.0.0/12, or 192.168.0.0/16 ranges. Without a deliberate addressing plan applied before any cloud environment is built, teams discover overlapping CIDR blocks at the point they try to connect environments together, at which point re-addressing is extraordinarily painful. The typical failure mode is that individual cloud accounts or projects were provisioned independently with default or convenient address ranges, and nobody maintained a central IP address management (IPAM) registry.

AWS, Azure, and GCP all now offer native IPAM services that integrate with their respective VPC and VNet provisioning workflows. The right approach is to use one of these to allocate non-overlapping ranges across all cloud environments before connecting them, with space reserved for future expansion. If you are connecting clouds that already have overlapping ranges, NAT-based solutions exist but add operational complexity and break any assumptions about private IP transparency across cloud boundaries. Fixing IPAM before interconnecting is cheaper than operating around overlapping ranges indefinitely.
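Whichever IPAM tool is used, an overlap audit is straightforward to script before any interconnect is provisioned. The sketch below uses Python's standard `ipaddress` module; the environment names and ranges are hypothetical.

```python
from ipaddress import ip_network
from itertools import combinations


def find_overlaps(allocations: dict[str, str]) -> list[tuple[str, str]]:
    """Return pairs of environment names whose CIDR blocks overlap."""
    nets = {name: ip_network(cidr) for name, cidr in allocations.items()}
    return [
        (a, b)
        for a, b in combinations(nets, 2)
        if nets[a].overlaps(nets[b])
    ]


# Hypothetical environments and ranges, for illustration only.
allocations = {
    "aws-prod": "10.0.0.0/16",
    "azure-prod": "10.0.128.0/17",   # sits inside the AWS range
    "gcp-analytics": "10.64.0.0/16",
}

print(find_overlaps(allocations))  # [('aws-prod', 'azure-prod')]
```

Running a check like this against an export of every VPC and VNet CIDR surfaces conflicts while re-addressing is still cheap.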

BGP Design and Session Stability

Each cloud provider handles BGP differently, and the differences matter at scale. AWS routing is regional: each Transit Gateway maintains its own BGP table and route propagation is controlled through TGW route table associations. GCP routing is global: Cloud Router propagates routes across all regions within a VPC network by default, which requires careful prefix filtering to prevent over-advertisement. Azure Virtual WAN manages its own BGP routing internally, limiting visibility into the routing decisions the platform is making.

When connecting clouds via private interconnect, BGP session stability is the leading cause of intermittent connectivity failures. The root cause chain is typically a physical link event at the colocation facility triggering a BFD timeout, which causes BGP session teardown and re-establishment, during which traffic either falls back to a less-preferred internet VPN path or drops entirely. The mitigations are well-understood but require deliberate configuration: BFD with aggressive timers on all BGP sessions, a fallback IPsec VPN path at lower preference using higher MED values, BGP graceful restart on all peers, and alerting on BGP session state changes before users report issues. Teams that have not done this configuration will eventually discover it is needed during an incident.
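Alerting on session state changes can start as something as simple as counting drops out of the Established state within a time window. The following is a sketch only: the `(timestamp, peer, state)` event shape, the peer names, and the default window and threshold are hypothetical, not any provider's API or log format.

```python
from collections import defaultdict


def flapping_peers(events, window_s=300, threshold=3):
    """Return peers that left 'Established' at least `threshold` times
    within any `window_s`-second span.

    `events` is an iterable of (timestamp_seconds, peer, state) tuples,
    sorted by time. This shape is a hypothetical normalised form.
    """
    drops = defaultdict(list)
    last_state = {}
    for ts, peer, state in events:
        # Record a drop only on a transition out of Established.
        if last_state.get(peer) == "Established" and state != "Established":
            drops[peer].append(ts)
        last_state[peer] = state

    flapping = set()
    for peer, times in drops.items():
        # Slide over consecutive groups of `threshold` drops.
        for i in range(len(times) - threshold + 1):
            if times[i + threshold - 1] - times[i] <= window_s:
                flapping.add(peer)
                break
    return flapping


events = [
    (0, "aws-dx", "Established"), (0, "gcp-ic", "Established"),
    (10, "aws-dx", "Idle"), (20, "aws-dx", "Established"),
    (50, "gcp-ic", "Idle"), (60, "gcp-ic", "Established"),
    (100, "aws-dx", "Idle"), (110, "aws-dx", "Established"),
    (200, "aws-dx", "Idle"),
]
print(flapping_peers(events))  # {'aws-dx'}
```

A single drop on `gcp-ic` does not trip the threshold, while three drops on `aws-dx` inside five minutes do, which is the kind of pattern that points to a physical link or BFD timer problem rather than a one-off maintenance event.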

The Encryption Gap

The most commonly missed design decision in private interconnect deployments is the encryption gap. Teams choose private interconnect because it avoids the public internet; they assume this means the traffic is secure. It does not. AWS Direct Connect, Azure ExpressRoute, and GCP Cloud Interconnect are private circuits, but they are not encrypted circuits. Traffic travels in cleartext at Layer 2/Layer 3 unless you explicitly add MACsec at the port level or IPsec as an overlay.

For regulated workloads, this is not acceptable. The appropriate architecture adds either MACsec (which requires compatible port types and provider support) or IPsec over the private circuit, creating a defence-in-depth model where traffic is both private (not on the public internet) and encrypted (protected if the physical circuit is compromised or tapped). This does add latency and throughput overhead, but for workloads handling payment card data, customer financial records, or health information, it is a compliance requirement rather than an option. AWS Interconnect multicloud addresses this by providing MACsec encryption by default between the AWS and GCP edge devices, one of its meaningful advantages over traditional interconnect.
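One way to keep the encryption gap visible is to audit a connectivity inventory for private links that carry regulated data without MACsec or an IPsec overlay. A minimal sketch, with a hypothetical `Link` record and made-up link names:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    """Hypothetical inventory record for one inter-cloud link."""
    name: str
    carries_regulated_data: bool
    macsec: bool = False
    ipsec_overlay: bool = False


def unencrypted_regulated(links):
    """Names of links carrying regulated data with no encryption layer."""
    return [
        link.name
        for link in links
        if link.carries_regulated_data
        and not (link.macsec or link.ipsec_overlay)
    ]


links = [
    Link("dx-to-expressroute", carries_regulated_data=True),
    Link("dx-to-cloud-interconnect", carries_regulated_data=True, ipsec_overlay=True),
    Link("aws-gcp-managed", carries_regulated_data=True, macsec=True),
    Link("dev-private-link", carries_regulated_data=False),
]
print(unencrypted_regulated(links))  # ['dx-to-expressroute']
```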

Real-World Implementations

Enterprise multi-cloud networking deployments tend to cluster around a small number of recurring patterns, driven by the same underlying pressures: regulated data that cannot traverse the public internet, analytics pipelines that need to move large volumes between clouds economically, and acquisitions that land an organisation on two providers overnight with no plan for connecting them.

SAP RISE across AWS and Azure is the most extensively documented enterprise inter-cloud networking pattern, with official architecture guidance published by both AWS and SAP. Enterprises running RISE with SAP on one provider while retaining workloads on another require private, low-latency connectivity with a compliance posture that internet VPN cannot satisfy. The standard architecture routes traffic through a shared colocation facility where AWS Direct Connect and Azure ExpressRoute both terminate, with the customer managing BGP routing between the two providers from network devices at the exchange point. This gives the enterprise full control over route propagation and traffic inspection without either cloud provider’s infrastructure sitting in the path of the other’s traffic. The pattern is explicitly recommended for production RISE deployments requiring predictable latency and compliance-grade isolation, and is in use across a significant number of large UK enterprises running S/4HANA in a split-cloud configuration.

Source: AWS Documentation, Connectivity Patterns for Multi-Cloud SAP RISE: https://docs.aws.amazon.com/sap/latest/general/rise-multi-cloud.html

AWS-to-GCP connectivity for AI and analytics workloads is the pattern that AWS Interconnect multicloud was designed to serve. The canonical deployment is an organisation running production applications and transactional data on AWS that needs to move data to GCP for BigQuery analytics or Vertex AI model training without paying internet egress rates or routing through on-premises infrastructure. Before AWS Interconnect multicloud reached general availability in April 2026, this required a colocation exchange arrangement. The managed service removes that requirement: both sides provision the connection through their respective consoles, capacity is live in minutes rather than weeks, and MACsec encryption is active by default at the physical layer. The London region pair (eu-west-2 to europe-west2) is directly relevant for UK-based deployments with data residency obligations, as traffic stays within UK data centre boundaries throughout.

Source: AWS Interconnect multicloud documentation and architecture reference: https://docs.aws.amazon.com/interconnect/latest/userguide/what-is.html

Microsoft’s own published architecture for Azure-to-AWS private connectivity illustrates the three options enterprises encounter when connecting these two providers today. The AWS and Microsoft joint guidance identifies internet-based site-to-site VPN as the entry point for low-volume or early-stage connectivity; Direct Connect and ExpressRoute co-located at a shared facility as the production pattern for high-bandwidth or regulated workloads; and a managed multi-cloud connectivity provider (Equinix Fabric, Megaport) as the operational simplification layer for enterprises that want colocation-grade connectivity without managing the cross-connect provisioning themselves. The guidance explicitly notes that the customer bears responsibility for routing configuration between the two providers in the self-managed colocation pattern, which is where most of the operational complexity sits.

Source: AWS Architecture Blog, Designing Private Network Connectivity Between AWS and Microsoft Azure: https://aws.amazon.com/blogs/modernizing-with-aws/designing-private-network-connectivity-aws-azure/

Decision Framework: Which Pattern for Which Scenario

The right inter-cloud connectivity pattern depends on four variables: monthly transfer volume, latency sensitivity, the clouds you are connecting, and your operational model.

For environments transferring under 1TB per month (development, DR standby, occasional data pipelines), IPsec VPN over the internet is the correct starting point. It deploys in hours, requires no physical infrastructure, and the egress cost is proportionate to the low volume. The moment you are consistently transferring 5-10TB or more per month, the economics shift and private connectivity becomes cheaper on total cost.

For AWS-GCP connectivity in 2026, AWS Interconnect multicloud is the default recommendation for new deployments. It provides managed, encrypted private connectivity without colocation infrastructure, is available in the London region pair for UK-based deployments, and removes the operational burden of managing BGP sessions to a physical exchange point. The flat-rate bandwidth model also removes egress unpredictability. This does not apply to AWS-Azure or Azure-GCP connectivity yet, where the colocation route remains the only private option.

For deployments spanning all three clouds, or for organisations with existing colocation footprint at Equinix or Megaport locations, private interconnect via colocation exchange is the appropriate choice. Ensure MACsec or IPsec encryption is in the design from the start rather than retrofitted. Plan IPAM before provisioning any cloud environments, not after.

For enterprises with complex traffic policies, branch offices in the connectivity mix, or existing SD-WAN deployments, extending SD-WAN to cloud instances provides the most operationally consistent model. The upfront investment is significant, but the ongoing operational simplicity for teams that already operate SD-WAN at scale is compelling.

The one pattern to avoid at scale is traffic hairpinning through on-premises data centres for inter-cloud flows. It works until it does not, typically when cloud traffic volumes grow beyond what the corporate WAN was provisioned to carry, which happens faster than network teams expect.
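The framework above can be expressed as a small function. This is one possible reading rather than a definitive decision tree: the rule ordering, the 1TB and 5TB thresholds, and the `recommend_pattern` name and parameters are assumptions made for illustration.

```python
def recommend_pattern(monthly_tb, clouds, has_sdwan=False, has_colo=False):
    """Map the framework's variables onto one of the four patterns.

    Rule ordering and thresholds are an interpretation of the text above,
    not an official decision tree.
    """
    clouds = frozenset(c.lower() for c in clouds)

    if has_sdwan:
        # Existing SD-WAN estates extend the overlay into cloud instances.
        return "SD-WAN overlay"
    if monthly_tb < 1:
        return "IPsec VPN over the internet"
    if clouds == {"aws", "gcp"} and not has_colo:
        return "AWS Interconnect multicloud"
    if monthly_tb >= 5 or has_colo or len(clouds) >= 3:
        return "Private interconnect via colocation exchange"
    return "IPsec VPN over the internet (revisit as volume grows)"


print(recommend_pattern(0.5, ["aws", "azure"]))
print(recommend_pattern(20, ["AWS", "GCP"]))
print(recommend_pattern(20, ["aws", "azure", "gcp"]))
```

Latency sensitivity is deliberately left out of this sketch. A synchronous replication workload would rule out internet VPN regardless of volume, so treat the output as a starting point for discussion.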

Find Your Connectivity Pattern

The right inter-cloud connectivity approach depends on how much data you move, which clouds you are connecting, and what infrastructure you already have in place. Answer three questions to get a recommendation tailored to your situation.

Implementation Roadmap

Phase 1: Foundation (Weeks 1-4)

Before connecting anything, establish your IP address management strategy. Allocate non-overlapping CIDR blocks across all cloud environments, deploy your preferred IPAM tool (AWS IPAM, Azure IPAM, or a third-party such as BlueCat or Infoblox integrated with cloud APIs), and document the addressing scheme. Define your BGP ASN strategy: separate ASNs per cloud provider simplify route attribution and debugging, though some organisations use a single global enterprise ASN with appropriate prefix filtering.
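As a starting point for the addressing scheme, equal-sized non-overlapping blocks can be carved from a single supernet with Python's standard `ipaddress` module. The supernet, prefix length, and owner names below are illustrative assumptions, not a recommended plan.

```python
from ipaddress import ip_network


def allocate_blocks(supernet: str, new_prefix: int, owners: list[str]) -> dict[str, str]:
    """Assign consecutive, non-overlapping blocks of one size to each owner."""
    subnets = ip_network(supernet).subnets(new_prefix=new_prefix)
    plan = {owner: str(block) for owner, block in zip(owners, subnets)}
    if len(plan) < len(owners):
        raise ValueError("not enough blocks for the owners given (or duplicate owner names)")
    return plan


# Hypothetical plan: one /12 per provider, plus one held back for expansion.
plan = allocate_blocks("10.0.0.0/8", 12, ["aws", "azure", "gcp", "reserved"])
for owner, block in plan.items():
    print(owner, block)
# aws 10.0.0.0/12
# azure 10.16.0.0/12
# gcp 10.32.0.0/12
# reserved 10.48.0.0/12
```

Giving each provider a contiguous supernet also keeps route summarisation simple: each cloud can be advertised as a single prefix across the interconnect.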

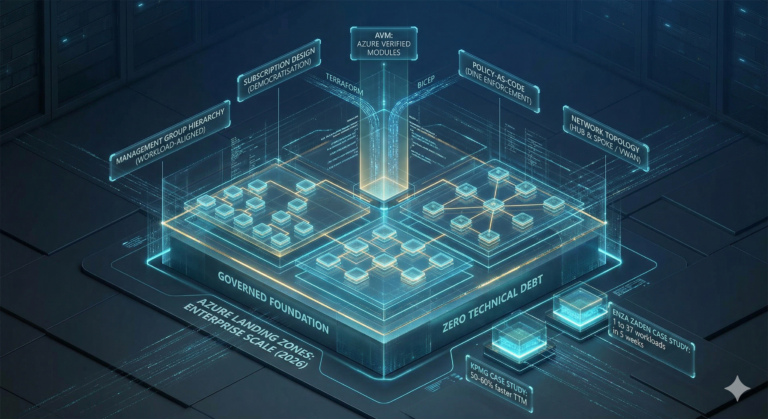

Establish your intra-platform hub architecture in each cloud. For AWS, deploy Transit Gateway in each Region you operate and define your route table structure for environment segmentation. For Azure, make the vWAN versus hub-spoke VNet decision based on whether you have SD-WAN or branch connectivity requirements (vWAN) or need NVA inspection and granular route control (hub-spoke). For GCP, deploy Cloud Router in each region and configure NCC if you need cross-region transit.

Phase 2: Inter-Cloud Connectivity (Weeks 5-10)

For AWS-GCP connectivity, evaluate AWS Interconnect multicloud for region pairs where it is available. For the London pairing specifically, this is the recommended default. For AWS-Azure and Azure-GCP, assess whether your monthly transfer volumes justify the colocation approach or whether internet VPN is sufficient at current scale. Deploy monitoring on all inter-cloud connectivity paths from day one: BGP session state, tunnel health, and latency between cloud environments should feed into your existing observability platform.

Add MACsec or IPsec encryption to any private interconnect carrying regulated data. This is the configuration step that teams most consistently defer and then scramble to retrofit before an audit.

Phase 3: Security and Observability (Weeks 11-16)

Deploy consistent firewall policy across cloud boundaries. This typically means either an SD-WAN overlay that applies unified policy, or a combination of each provider's native security controls with a centralised policy management tool. Establish flow log collection across all inter-cloud paths and route them to your SIEM for cross-cloud visibility. Define and test the failover behaviour for each connectivity path: what happens when a BGP session drops, when an interconnect location has a power event, or when a provider has a regional outage affecting the hub infrastructure.

What Changes Next

The most significant near-term development is Azure's integration with AWS Interconnect multicloud, expected later in 2026. When this arrives, enterprises will be able to connect all three hyperscalers using fully managed cloud-native services without colocation infrastructure, at which point the colocation route becomes a legacy pattern rather than the default. Organisations planning major multi-cloud connectivity deployments should factor this into their architecture decisions now: designing for managed interconnect from the start is less expensive than migrating off colocation circuits later.

The second trend worth watching is the shift to flat-rate bandwidth pricing for inter-cloud connectivity. AWS's move away from per-GB egress charges for Interconnect multicloud is a direct response to enterprise demand and competitive pressure. If Azure and GCP follow with equivalent pricing models, the cost calculus for multi-cloud networking changes substantially. The organisations best positioned to benefit are those with well-designed IPAM and hub architectures that can take advantage of new connectivity options without needing to re-address their cloud environments.

As multi-cloud AI workloads grow (training on one provider's GPU infrastructure, running inference on another, accessing proprietary data stored on a third), the networking layer that connects these environments will become an even more critical architectural concern. Latency between clouds directly affects AI pipeline performance; egress costs directly affect AI workload economics. The organisations that treat multi-cloud networking as a first-class architectural discipline rather than a plumbing problem will have a meaningful operational advantage as these workloads mature.

Next Steps

If you are starting a multi-cloud networking project today, the first three actions are: audit your existing IP address ranges and identify overlaps before connecting anything; define your BGP ASN strategy per cloud provider; and assess whether your AWS-GCP transfer volumes make AWS Interconnect multicloud the right choice for your region pairs. For the Azure layer, plan around the current limitation that managed cloud-to-cloud private connectivity is not yet available, and design your colocation or VPN approach to be replaceable when it arrives.

For further context on Azure networking patterns and the hub-spoke versus Virtual WAN decision, the Azure Networking Architecture 2025 post covers the Azure-specific design space in depth. The Azure Virtual WAN enterprise guide provides the operational detail for vWAN deployments specifically.

If you are assessing whether multi-cloud is the right strategy for your organisation at all, the When NOT to Go Multi-Cloud risk assessment covers the scenarios where the operational complexity outweighs the benefits, which is a reasonable starting point before committing to the networking architecture that multi-cloud requires.

For the deeper AWS VPC design decisions that underpin Transit Gateway deployments, Mastering AWS VPC covers the subnet and routing table design choices that determine how cleanly your VPCs attach to TGW.

Useful Links

- AWS Interconnect: multicloud documentation

- AWS Interconnect: multicloud architecture reference

- GCP patterns for connecting to other CSPs

- Azure multicloud networking native and partner solutions

- AWS and Azure private connectivity design

- Google Cloud hybrid connectivity overview

- AWS multi-cloud and SAP RISE connectivity patterns

- Could AWS Interconnect spell the end of egress fees? (Fierce Network analysis)