Over 5% of Azure storage accounts are misconfigured in ways that create DNS resolution failures with Private Endpoints, according to Palo Alto Networks’ Unit 42 threat research. This isn’t a minor deployment hiccup. For organisations managing 100+ Azure services, that DNS complexity represents the difference between a functioning zero-trust architecture and an expensive sprawl of unusable private networking that forces teams back to public endpoints. Yet despite these challenges, enterprises from Michelin to ELCA IT have successfully deployed Private Endpoints at scale, eliminating public internet exposure entirely whilst achieving measurable security and compliance gains.

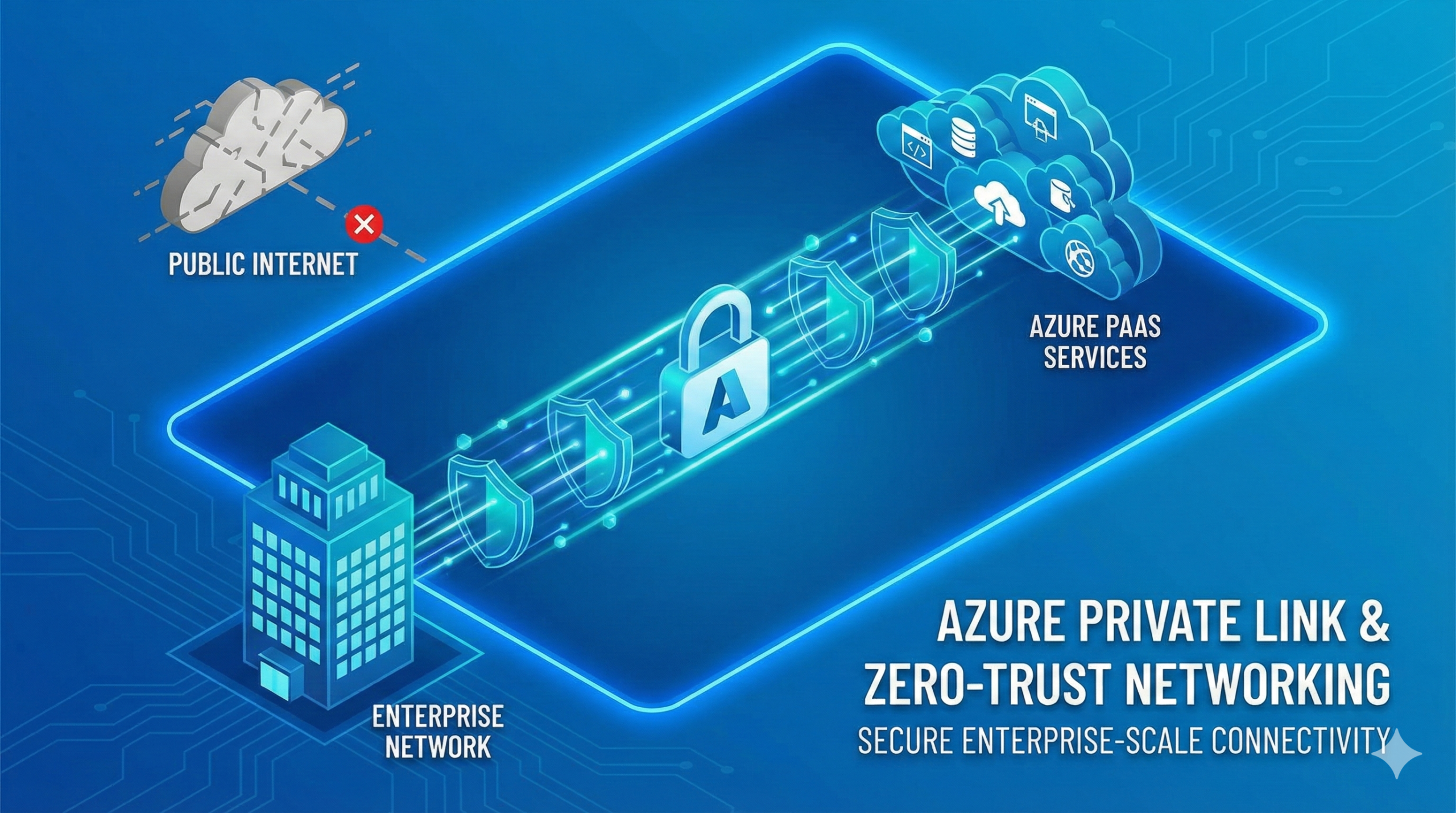

The reason organisations persist through the complexity is simple. Private Endpoints are the single most impactful network security control available in Azure today. By injecting a private network interface into your VNet for every PaaS service you consume, they eliminate the public internet from the data path completely. Traffic stays on Microsoft’s backbone, each endpoint maps to one specific resource rather than an entire service type, and on-premises clients reach cloud services over ExpressRoute or VPN without a single public IP in the path. This architectural approach directly addresses the zero-trust principle of never trusting network location whilst providing built-in data exfiltration prevention that Service Endpoints cannot match.

The trade-off is operational complexity that scales with deployment size. DNS architecture becomes the central engineering challenge, requiring centralised Private DNS zones, conditional forwarders from on-premises infrastructure, and careful management of CNAME chains that can break connectivity if misconfigured. CI/CD pipelines require re-engineering to use self-hosted agents within VNets since Microsoft-hosted agents cannot reach private endpoints. Infrastructure-as-Code modules roughly double in size compared to public endpoint configurations. At approximately £1,200 monthly infrastructure cost for 100 endpoints, the financial investment is modest, but the operational transformation demands rigorous upfront planning and phased rollout strategies proven at organisations like Michelin’s global virtual datacentre.

This guide provides the architecture patterns, cost models, DNS strategies, and implementation frameworks needed to deploy Private Endpoints successfully at enterprise scale. Drawing on documented case studies from Michelin, KPMG UK, ELCA IT, and Erste Group alongside Microsoft’s Cloud Adoption Framework guidance, we’ll examine exactly how organisations eliminate public PaaS exposure whilst managing the DNS, routing, and automation challenges that determine success or failure.

How Private Link Components Work Together

Azure Private Link is the platform capability. Private Endpoints and Private Link Service are its two resource types, one for consumers accessing Azure services and one for providers exposing custom applications. Understanding this relationship is essential before designing any enterprise architecture.

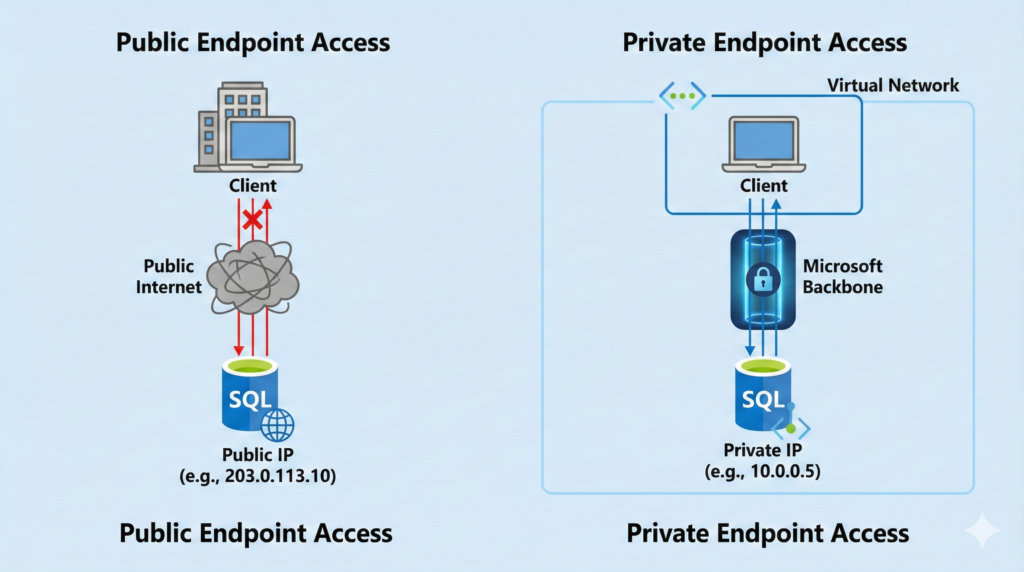

A Private Endpoint creates a read-only network interface that Azure places in your subnet. It receives a dynamic private IP address from that subnet and retains it for the endpoint’s lifetime. The NIC connects to exactly one PaaS resource instance: one specific storage account, one SQL logical server, one Key Vault. Connections are unidirectional and TCP-only, meaning clients initiate connections toward the endpoint whilst the service cannot push traffic back into your VNet. For services with multiple sub-resources, you need a separate Private Endpoint per sub-resource. Azure Storage presents the most complex scenario, requiring distinct endpoints for blob, file, table, queue, dfs, and web sub-resources, each with its own private IP and DNS record.
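To make the per-sub-resource multiplication concrete, here is a minimal Python sketch that enumerates the endpoints and DNS names a single storage account would need. The `pe-<account>-<subresource>` naming convention and the `plan_storage_endpoints` helper are hypothetical and exist only for illustration; the zone names follow the `privatelink.<subresource>.core.windows.net` pattern.

```python
from dataclasses import dataclass

# Sub-resources named in the text; each one needs its own Private Endpoint.
STORAGE_SUBRESOURCES = ["blob", "file", "table", "queue", "dfs", "web"]


@dataclass(frozen=True)
class PlannedEndpoint:
    name: str         # hypothetical naming convention, not an Azure requirement
    subresource: str  # the sub-resource the endpoint targets
    dns_zone: str     # Private DNS zone that will hold the A record
    fqdn: str         # privatelink name the A record is created for


def plan_storage_endpoints(account, subresources=STORAGE_SUBRESOURCES):
    """Return one planned endpoint per requested storage sub-resource."""
    plan = []
    for sub in subresources:
        zone = f"privatelink.{sub}.core.windows.net"
        plan.append(
            PlannedEndpoint(
                name=f"pe-{account}-{sub}",
                subresource=sub,
                dns_zone=zone,
                fqdn=f"{account}.{zone}",
            )
        )
    return plan


if __name__ == "__main__":
    for ep in plan_storage_endpoints("myaccount"):
        print(f"{ep.name:22} -> {ep.fqdn}")
```

In practice most accounts need only a subset, so passing an explicit list such as `["blob", "dfs"]` keeps the endpoint count, and the cost, down.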

A Private Link Service sits on the provider side, fronting your own application behind a Standard Load Balancer and exposing it for private consumption by other tenants. A dedicated NAT subnet handles address translation so consumer traffic appears to originate from the NAT pool. Each Private Link Service supports up to eight NAT IP addresses, and assigning more of them gives the service more ports for concurrent connections, so size the NAT configuration for expected production load. Up to 1,000 Private Endpoints across any number of subscriptions and Entra tenants can connect to a single Private Link Service, with visibility and auto-approval controls restricting who can connect. Creating a Private Link Service itself is free, with consumers paying the Private Endpoint charges.

More than 60 Azure services now support Private Endpoints at general availability, spanning storage, databases, AI, messaging, security, and DevOps platforms. The full list is maintained at Microsoft Learn under Private Link availability documentation. Notable service-specific behaviours include Key Vault becoming immediately inaccessible from the public internet when a Private Endpoint is associated unless public access is explicitly re-enabled, App Service behaving similarly, and Azure Storage requiring GPv2 accounts. A preview feature called Private Link Service Direct Connect extends connectivity to any privately routable destination IP, signalling Microsoft’s intent to make Private Link the universal private networking primitive.

Performance characteristics are straightforward. There is no throughput limitation on the Private Endpoint NIC itself, with performance bounded only by the underlying PaaS service limits. Latency within the same region equals or improves upon public endpoint access because traffic avoids internet routing. The SLA provides 99.99% availability. Cross-region access works natively, though deploying regional endpoints closer to clients avoids the latency penalty of inter-region backbone transit. A Private Endpoint in UK South can connect to a service in West Europe without additional configuration, but optimal performance requires endpoints in the same region as consuming workloads.

The relationship between these components creates the foundation for zero-trust networking in Azure. Unlike Service Endpoints which provide access to all instances of a service type within a region, Private Endpoints offer resource-level granularity that enables both network isolation and data exfiltration prevention. This architectural distinction becomes critical when implementing comprehensive Azure security architectures that meet regulatory compliance requirements.

DNS Architecture: The Make-or-Break Decision

Every architect who has deployed Private Endpoints at scale emphasises the same point. Michelin’s infrastructure team called DNS “the complicated part” of their virtual datacentre implementation. KPMG UK’s engineers found it required deep explanation even for experienced security teams. The reason is structural: DNS resolution determines whether Private Endpoints function at all, and misconfiguration creates silent failures that manifest as connection timeouts rather than obvious errors.

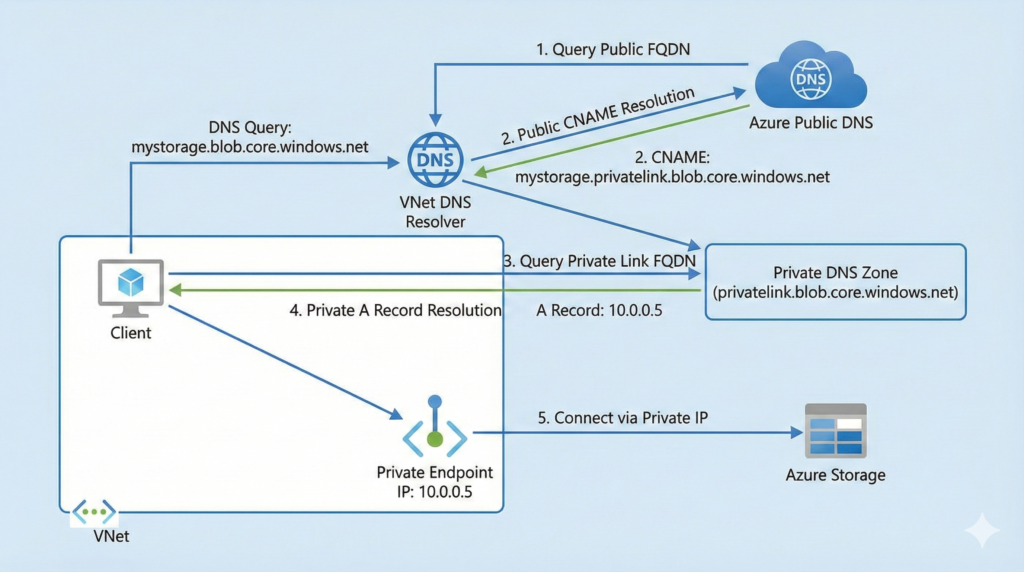

The mechanism works through a CNAME chain that Azure injects automatically. When you create a Private Endpoint for a storage account, the public FQDN myaccount.blob.core.windows.net gains a CNAME pointing to myaccount.privatelink.blob.core.windows.net. An A record in your Azure Private DNS zone resolves that privatelink FQDN to the endpoint’s private IP address. The CNAME is platform-managed, whilst the A record is created through DNS zone groups that automate record lifecycle tied to the Private Endpoint. Each DNS zone group supports up to five DNS zones, and only one group per endpoint is permitted.
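The chain described above can be sketched as a toy resolver in Python. This is a simplified model rather than a real DNS client: the record dictionaries, the `10.1.2.4` address, and the `resolve` helper are illustrative assumptions.

```python
# Toy DNS data. Public DNS holds the platform-managed CNAME; the Private DNS
# zone holds the A record that the DNS zone group creates for the endpoint.
PUBLIC_CNAMES = {
    "myaccount.blob.core.windows.net": "myaccount.privatelink.blob.core.windows.net",
}
PRIVATE_ZONE_A_RECORDS = {
    "myaccount.privatelink.blob.core.windows.net": "10.1.2.4",  # example private IP
}


def resolve(fqdn, cnames, private_a_records, max_hops=5):
    """Follow CNAMEs, then look up the final name in the private zone.

    Returns (chain, ip). ip is None when the private zone has no A record
    for the final name in the chain.
    """
    chain = [fqdn]
    current = fqdn
    for _ in range(max_hops):
        target = cnames.get(current)
        if target is None:
            break
        chain.append(target)
        current = target
    return chain, private_a_records.get(current)


if __name__ == "__main__":
    chain, ip = resolve(
        "myaccount.blob.core.windows.net", PUBLIC_CNAMES, PRIVATE_ZONE_A_RECORDS
    )
    print(" -> ".join(chain), "=>", ip)
```

Because clients keep using the public FQDN, the only thing that changes between public and private access is which A record answers at the end of the chain.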

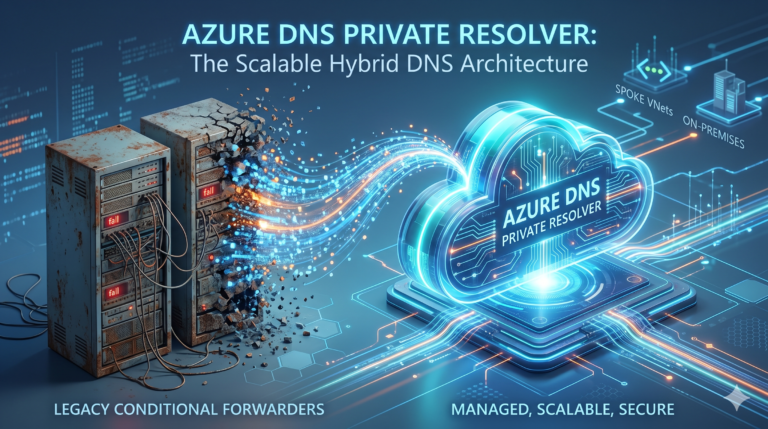

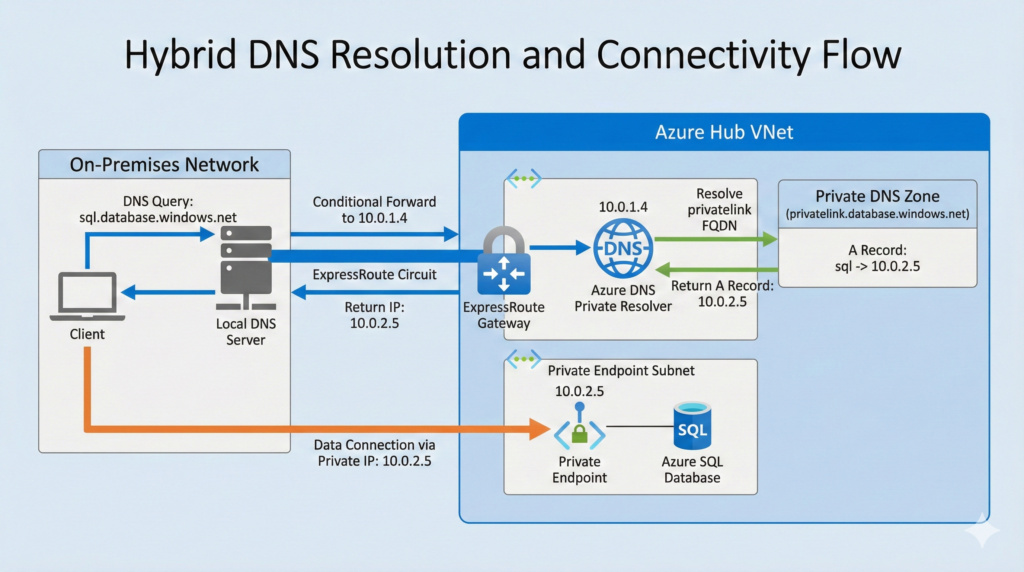

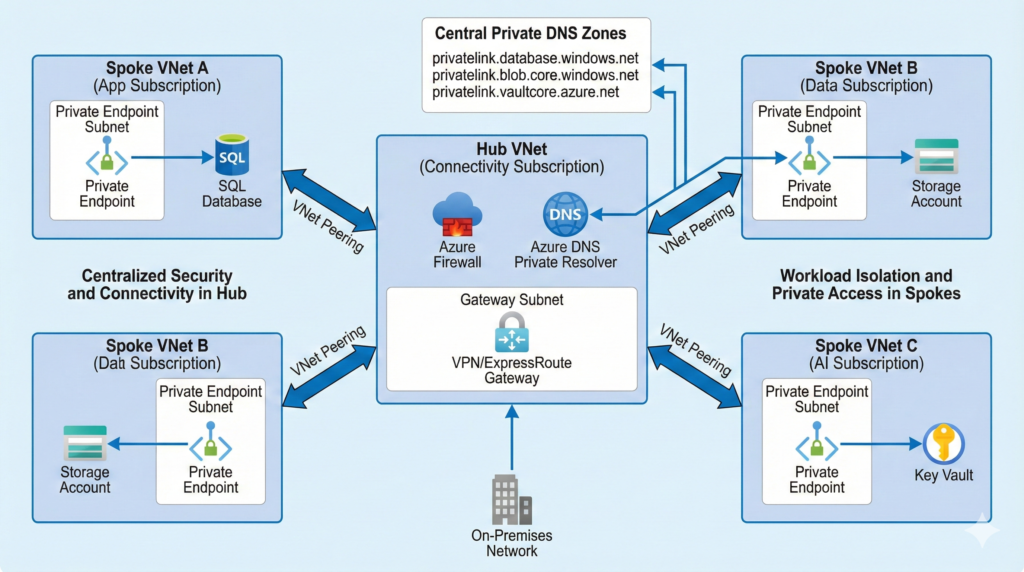

For enterprises, Microsoft’s Cloud Adoption Framework prescribes a centralised DNS model that scales reliably. Private DNS zones live in the connectivity subscription alongside the hub VNet. All spoke VNets link to these zones through VNet links. On-premises DNS servers use conditional forwarders for each public zone pointing to a DNS resolver in the hub. The modern resolver is Azure DNS Private Resolver, a managed service that replaces custom forwarder VMs. It costs £135 monthly per endpoint (inbound or outbound), handles up to 10,000 queries per second, and supports availability zones natively. A typical hub deployment uses one inbound and one outbound endpoint at £270 monthly per region.

Three critical DNS rules emerge from documented failures across multiple organisations. First, never create multiple Private DNS zones with the same name across different subscriptions or resource groups, as this forces manual record merging and creates resolution conflicts. Second, conditional forwarding from on-premises infrastructure must target the public zone name like database.windows.net rather than the privatelink prefix. Third, when a Private DNS zone is linked to a VNet and a client resolves a resource whose A record is not in that zone (typically a resource with a Private Endpoint in another network or tenant, so its public name still carries the privatelink CNAME), the zone returns NXDOMAIN, breaking access to that resource over its public endpoint entirely. Azure’s recent “Fallback to Internet” VNet link feature addresses this, but it must be explicitly enabled per zone link.
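The first two rules lend themselves to an automated check. The following Python sketch assumes you can export zone locations and on-premises forwarder names as plain lists; the input shapes and both function names are hypothetical.

```python
from collections import defaultdict


def find_duplicate_zones(zones):
    """zones: iterable of (scope, zone_name) pairs, where scope is a
    subscription or resource group. Returns zone names defined in more
    than one scope, mapped to the sorted scopes that hold them."""
    owners = defaultdict(set)
    for scope, zone_name in zones:
        owners[zone_name.lower()].add(scope)
    return {name: sorted(scopes) for name, scopes in owners.items() if len(scopes) > 1}


def find_bad_forwarders(forwarded_zones):
    """Return forwarder zone names that wrongly target the privatelink prefix."""
    return [z for z in forwarded_zones if z.lower().startswith("privatelink.")]


if __name__ == "__main__":
    zones = [
        ("sub-connectivity", "privatelink.blob.core.windows.net"),
        ("sub-app1", "privatelink.blob.core.windows.net"),
        ("sub-connectivity", "privatelink.database.windows.net"),
    ]
    print(find_duplicate_zones(zones))
    print(find_bad_forwarders(["database.windows.net", "privatelink.blob.core.windows.net"]))
```

Running a check like this on a schedule catches rogue zones and mis-targeted forwarders before they surface as connection timeouts.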

For multi-tenant environments, SpareBank 1’s documented architecture across 13 tenants uses a central hub tenant hosting all Private DNS zones, with Azure Policy managing DNS record lifecycle across tenants through DeployIfNotExists rules. Managed identities in each spoke tenant hold Private DNS Zone Contributor RBAC on the hub tenant’s DNS zones. This pattern scales effectively and prevents application teams from creating rogue DNS zones in spoke subscriptions that would fragment the DNS architecture.

The DNS architecture directly impacts hybrid connectivity scenarios. When on-premises clients need to access Azure PaaS services through Private Endpoints, three components must work together: an ExpressRoute or VPN gateway in the hub, the Private Endpoint’s /32 route advertised to on-premises via BGP, and DNS conditional forwarding configured so on-premises clients resolve FQDNs to private IPs. Point-to-site VPN clients require special attention because Private DNS zones don’t resolve by default over P2S connections, requiring the DNS Private Resolver’s inbound endpoint IP as the DNS server in VPN client configuration.

This DNS complexity is precisely why organisations investing in hybrid cloud architectures need rigorous DNS planning before deploying Private Endpoints at scale. The patterns documented in Microsoft’s Cloud Adoption Framework provide proven approaches, but implementation requires careful coordination between networking, security, and application teams.

Enterprise Implementation Patterns That Scale

Hub-and-spoke placement follows a clear decision tree from the Azure Architecture Center. Place Private Endpoints in the hub VNet when traffic inspection via Azure Firewall is required, on-premises access is needed, or multiple applications share the same PaaS resource. Place them in spoke VNets when using Virtual WAN (endpoints cannot deploy into virtual hubs), when only a single application needs access, or when workload isolation is the priority. For large deployments, consider a dedicated subnet for Private Endpoints to avoid hitting the 400-route limit per route table, as each endpoint creates a /32 system route that propagates across all peered VNets.
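The placement guidance above can be expressed as a small decision function. This Python sketch is one reading of the rules, not an official algorithm: the function name, the parameter names, and the choice to evaluate the Virtual WAN constraint first are assumptions.

```python
def recommend_placement(
    *,
    uses_virtual_wan,
    needs_firewall_inspection,
    needs_on_prem_access,
    shared_by_multiple_apps,
):
    """Return "hub" or "spoke" for a Private Endpoint.

    Assumption: the Virtual WAN constraint is checked first, because
    endpoints cannot be deployed into a virtual hub at all.
    """
    if uses_virtual_wan:
        return "spoke"
    if needs_firewall_inspection or needs_on_prem_access or shared_by_multiple_apps:
        return "hub"
    # Single-application access or workload isolation: keep it with the workload.
    return "spoke"


if __name__ == "__main__":
    print(
        recommend_placement(
            uses_virtual_wan=False,
            needs_firewall_inspection=True,
            needs_on_prem_access=False,
            shared_by_multiple_apps=False,
        )
    )
```

Encoding the rule in a shared module or pipeline check keeps placement decisions consistent across teams, even if the precedence is adjusted to local standards.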

Private Endpoint subnets support NSGs and UDRs, but this functionality is disabled by default. The subnet property privateEndpointNetworkPolicies must be set to Enabled for both NSG and UDR support, or one of the partial options. Once enabled, NSGs can filter inbound traffic to endpoints, and UDRs can force-tunnel traffic through Azure Firewall for inspection. A critical routing detail that catches many implementations: use Azure Firewall Application Rules rather than Network Rules for Private Endpoint traffic, because Application Rules perform SNAT ensuring symmetric return paths. Network Rules cause asymmetric routing that drops sessions.
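As a hedged illustration of the "partial options", the Python sketch below maps each value of `privateEndpointNetworkPolicies` to the features it turns on and flags a subnet whose setting does not match the design. The `check_subnet` helper and its messages are hypothetical; only the property values come from the Azure resource model.

```python
# Values accepted by the subnet property privateEndpointNetworkPolicies,
# mapped to the network policy features each one enables.
POLICY_EFFECTS = {
    "Disabled": {"nsg": False, "udr": False},
    "Enabled": {"nsg": True, "udr": True},
    "NetworkSecurityGroupEnabled": {"nsg": True, "udr": False},
    "RouteTableEnabled": {"nsg": False, "udr": True},
}


def check_subnet(policy_value, needs_nsg, needs_udr):
    """Return a list of problems for a subnet, given what the design requires."""
    effects = POLICY_EFFECTS.get(policy_value)
    if effects is None:
        return [f"unknown privateEndpointNetworkPolicies value: {policy_value!r}"]
    problems = []
    if needs_nsg and not effects["nsg"]:
        problems.append("NSG rules will not apply to Private Endpoint traffic")
    if needs_udr and not effects["udr"]:
        problems.append("UDRs will not apply to Private Endpoint traffic")
    return problems


if __name__ == "__main__":
    print(check_subnet("Disabled", needs_nsg=True, needs_udr=True))
```

A check like this fits naturally into an IaC validation step, where a default of `Disabled` would otherwise go unnoticed until firewall inspection silently fails.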

Migration from public to private endpoints follows a three-phase approach that minimises risk. In the prepare phase, create Private Endpoints alongside existing public access, as most services support multiple endpoints simultaneously. In the dual-access phase, validate connectivity from all clients whilst both paths are active. Connection strings typically need no changes if the DNS CNAME chain resolves correctly. In the cutover phase, disable public network access on each resource. Key Vault and App Service behave aggressively here, becoming publicly inaccessible immediately upon Private Endpoint association unless you explicitly re-enable public access.

Automation at scale relies on a three-policy Azure Policy strategy from the Cloud Adoption Framework. Policy one denies public endpoints, for example denying Storage Accounts where networkAcls.defaultAction is not Deny. Policy two denies creation of privatelink DNS zones in spoke subscriptions, forcing centralised DNS management. Policy three uses DeployIfNotExists to automatically create DNS zone groups linking new Private Endpoints to the central Private DNS zone, with a managed identity holding Private DNS Zone Contributor and Network Contributor roles. The Azure Landing Zones initiative includes a bundled policy set called “Configure Azure PaaS services to use private DNS zones” that implements these patterns for rapid deployment.
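To show roughly what policy one looks like, the Python sketch below builds a deny rule for storage accounts as a dictionary and pairs it with a local approximation of its effect. Treat it as an illustration under assumptions: the rule body is a simplified sketch rather than the built-in definition, and `would_be_denied` is a hypothetical helper, since real evaluation happens inside the Azure Policy engine.

```python
import json

# Sketch of a policy rule that denies storage accounts whose default
# network action is anything other than Deny.
DENY_PUBLIC_STORAGE_RULE = {
    "if": {
        "allOf": [
            {"field": "type", "equals": "Microsoft.Storage/storageAccounts"},
            {
                "field": "Microsoft.Storage/storageAccounts/networkAcls.defaultAction",
                "notEquals": "Deny",
            },
        ]
    },
    "then": {"effect": "deny"},
}


def would_be_denied(resource):
    """Local approximation of the rule for a simplified resource dict."""
    if resource.get("type") != "Microsoft.Storage/storageAccounts":
        return False
    default_action = (
        resource.get("properties", {}).get("networkAcls", {}).get("defaultAction")
    )
    # A missing value is treated as non-compliant, like any value other than Deny.
    return default_action != "Deny"


if __name__ == "__main__":
    print(json.dumps(DENY_PUBLIC_STORAGE_RULE, indent=2))
```

Keeping rules as data makes it straightforward to unit-test sample resources locally before assigning the definition at management group scope.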

The automation patterns become particularly important when managing Azure storage at enterprise scale, where storage accounts might require multiple Private Endpoints per sub-resource type and proper lifecycle management ensures DNS records remain synchronised with endpoint creation and deletion.

What Private Endpoints Cost at Enterprise Scale

Private Endpoint pricing is structurally simple but compounds quickly across large deployments. Each endpoint costs £0.0075 per hour, totalling approximately £5.40 monthly regardless of traffic volume. Data processing adds £0.0075 per GB for the first petabyte, dropping to £0.0045 per GB at 1-5 PB and £0.003 per GB above 5 PB. These charges are additive, meaning you pay them on top of standard Azure bandwidth charges. A critical cost benefit exists: VNet peering charges are waived for traffic destined for a Private Endpoint, making hub-and-spoke architectures with centralised endpoints cost-effective compared to distributed endpoint placement.
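The pricing structure can be sketched in a few lines of Python. This sketch assumes the tiers are marginal (each GB is billed at the rate of the tier it falls into), decimal units, and a 720-hour month to match the £5.40 figure; check current Azure pricing before relying on any of these numbers.

```python
GB_PER_PB = 1_000_000  # decimal units; an assumption for illustration

# (tier ceiling in GB, price per GB in GBP), taken from the figures above.
TIERS = [
    (1 * GB_PER_PB, 0.0075),
    (5 * GB_PER_PB, 0.0045),
    (float("inf"), 0.003),
]
ENDPOINT_HOURLY = 0.0075
HOURS_PER_MONTH = 720  # 30-day month, matching the approximate 5.40 figure


def data_processing_cost(gb):
    """Marginal tiered cost: each GB is billed at the rate of its own tier."""
    cost, floor = 0.0, 0.0
    for ceiling, rate in TIERS:
        if gb <= floor:
            break
        cost += (min(gb, ceiling) - floor) * rate
        floor = ceiling
    return cost


def endpoint_monthly_cost(gb_processed):
    """Fixed hourly charge for one endpoint plus its data processing."""
    return ENDPOINT_HOURLY * HOURS_PER_MONTH + data_processing_cost(gb_processed)


if __name__ == "__main__":
    for gb in (0, 50_000, 2_000_000):
        print(gb, round(endpoint_monthly_cost(gb), 2))
```

For most estates the first tier is the only one that matters, since the lower rates apply only beyond a petabyte of monthly traffic.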

DNS infrastructure adds modest ongoing cost. Each Private DNS zone costs £0.38 monthly, and enterprises typically need only 15-25 shared zones regardless of endpoint count. A single privatelink.blob.core.windows.net zone serves all Storage Account endpoints across the entire Azure estate. DNS queries cost £0.30 per million queries. The Azure DNS Private Resolver at £270 monthly per region represents the largest DNS expense, but only for hybrid scenarios requiring on-premises resolution.

For organisations managing 100 Private Endpoints processing 50 TB of data monthly with 20 DNS zones and one DNS resolver in a single region, monthly infrastructure cost totals approximately £1,200. At 200 endpoints processing 100 TB across 30 DNS zones, the cost reaches roughly £2,100 monthly. Scaling to 500 endpoints processing 250 TB across 40 DNS zones in two regions brings monthly cost to approximately £5,150.
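These totals can be reproduced with a short Python model built from the unit prices quoted in this section. It assumes decimal terabytes, the first-petabyte data rate throughout, one resolver region for the 200-endpoint case, and no DNS query charges; the `Scenario` class is purely illustrative.

```python
from dataclasses import dataclass

ENDPOINT_MONTHLY = 5.40   # per endpoint
DATA_PER_GB = 0.0075      # first-petabyte rate
ZONE_MONTHLY = 0.38       # per Private DNS zone
RESOLVER_MONTHLY = 270.0  # one inbound plus one outbound endpoint per region
GB_PER_TB = 1000          # decimal units; an assumption


@dataclass
class Scenario:
    endpoints: int
    data_tb: float
    dns_zones: int
    resolver_regions: int

    def monthly_cost(self):
        """Sum of endpoint, data processing, DNS zone, and resolver charges."""
        return (
            self.endpoints * ENDPOINT_MONTHLY
            + self.data_tb * GB_PER_TB * DATA_PER_GB
            + self.dns_zones * ZONE_MONTHLY
            + self.resolver_regions * RESOLVER_MONTHLY
        )


if __name__ == "__main__":
    for s in (
        Scenario(100, 50, 20, 1),
        Scenario(200, 100, 30, 1),
        Scenario(500, 250, 40, 2),
    ):
        print(s, round(s.monthly_cost(), 2))
```

Under those assumptions the model lands at roughly £1,193, £2,111, and £5,130, in line with the rounded figures above. Data processing is the largest variable component, so traffic forecasts matter more than zone counts.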

Compare this to the alternative of routing all PaaS traffic through Azure Firewall Standard at £680 monthly base plus £0.012 per GB data processing. On base charges alone, a single firewall becomes cheaper than Private Endpoints once the estate passes roughly 125 endpoints (£680 ÷ £5.40), although its per-GB processing rate is higher. It also doesn’t provide resource-level isolation, built-in data exfiltration prevention, or the same compliance posture. Service Endpoints are free but don’t work from on-premises networks, lack exfiltration protection, and support only approximately 15 services compared to Private Endpoints’ 60+ supported services.
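A quick way to compare the two options is to compute the endpoint count at which Private Endpoints start to cost more than one firewall for a given traffic volume. This Python sketch uses only the unit prices quoted in this section and deliberately ignores DNS, resolver, and bandwidth charges; the function names are hypothetical.

```python
import math

FIREWALL_BASE = 680.0     # Azure Firewall Standard, monthly base
FIREWALL_PER_GB = 0.012
ENDPOINT_MONTHLY = 5.40
ENDPOINT_PER_GB = 0.0075  # first-petabyte rate


def firewall_cost(gb):
    """Monthly cost of one firewall processing the given traffic."""
    return FIREWALL_BASE + FIREWALL_PER_GB * gb


def endpoints_cost(count, gb):
    """Monthly cost of `count` endpoints processing the given total traffic."""
    return count * ENDPOINT_MONTHLY + ENDPOINT_PER_GB * gb


def break_even_endpoints(gb):
    """Smallest endpoint count at which endpoints cost more than the firewall."""
    headroom = firewall_cost(gb) - ENDPOINT_PER_GB * gb
    return math.floor(headroom / ENDPOINT_MONTHLY) + 1


if __name__ == "__main__":
    for gb in (0, 50_000):
        print(gb, break_even_endpoints(gb))
```

With no traffic the crossover is 126 endpoints, and at 50 TB a month it moves out to 168, because the firewall's higher per-GB rate erodes its base-price advantage as volume grows.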

Hidden costs that catch organisations during implementation include self-hosted DevOps agents, since Microsoft-hosted agents cannot reach private endpoints. This requires VMSS-based agents at approximately £11-30 monthly per agent. Jumpboxes for management access add further cost. Premium SKU requirements also emerge for certain services: Azure API Management needs Premium tier at approximately £2,100 monthly, and Service Bus needs Premium at roughly £500 monthly to support Private Endpoints. The b.telligent consultancy reports 30-80% additional development effort and Infrastructure-as-Code modules roughly doubling in size when implementing Private Endpoints versus public access patterns.

Understanding these cost dynamics becomes essential when developing comprehensive FinOps strategies that balance security requirements against infrastructure expenditure. The key insight is that Private Endpoint cost is relatively fixed per endpoint, making them economically attractive for high-traffic services whilst potentially over-engineered for low-traffic development resources.

Real Enterprises Building With Private Endpoints

Michelin: Global Virtual Datacentre Implementation

Michelin, the global tyre manufacturer operating across 170 countries, deployed Private Endpoints at scale across their virtual datacentre, which has been built on a hub-and-spoke architecture since 2015. They created dedicated VNets to host Private Endpoint NICs, peered to hub and eligible spokes, with Private DNS zones for each PaaS service type. Their implementation connected manufacturing facilities and distribution centres worldwide to Azure PaaS services without public internet exposure.

The organisation’s key lesson centred on DNS complexity. Explaining to security teams that public FQDNs now resolve to private IPs required significant education across multiple geographies. Each new Private Link zone addition demanded updating forwarding rules across their entire global DNS infrastructure, creating ongoing operational overhead. Despite these challenges, the architecture successfully eliminated public attack surface for critical manufacturing and supply chain applications, meeting stringent internal security requirements for operational technology connectivity.

Michelin has not published exact figures, but their public documentation indicates hundreds of spoke VNets across global regions, which suggests several hundred Private Endpoints managing connectivity between OT systems and cloud data platforms. The security outcome was complete network isolation for industrial control systems accessing Azure services, a critical requirement in manufacturing environments where production downtime costs millions hourly.

Source: Michelin IT Engineering Blog

ELCA IT: Secured Data Platform Architecture

ELCA IT, a Swiss IT services firm with 2,300+ employees, deployed Private Endpoints using Terraform for secured data platform architectures serving banking and insurance clients. Each deployment includes at least four endpoints covering DataLake storage, staging storage, Terraform state storage, and Key Vault, with additional endpoints for SQL databases and analytics services depending on workload requirements.

Their engineering team documented a critical implementation challenge: Terraform pipelines could no longer access resources after disabling public access, requiring a shift to VMSS-based self-hosted agents within the VNet. This pattern has become standard across the industry, but ELCA’s early documentation helped establish best practices for Infrastructure-as-Code in private networking environments. Their Terraform modules now include automated Private Endpoint provisioning with DNS zone group configuration, streamlining deployment across multiple client environments.

The deployment spans dozens of client data platforms, each with 10-15 Private Endpoints protecting storage, database, and AI service access. The measurable outcome is 100% elimination of public internet exposure for client data processing, meeting Swiss banking regulations requiring data sovereignty and network isolation. The architecture demonstrates that Private Endpoints integrate successfully with Infrastructure-as-Code workflows once the VNet-based CI/CD pipeline challenge is addressed.

Source: ELCA IT Medium Blog

Databricks Serverless with Private Link

Azure Databricks introduced comprehensive Private Link support for serverless compute in 2024, enabling organisations to maintain private connectivity for SQL warehouses, notebooks, jobs, and AI model serving without managing persistent compute infrastructure. The Network Connectivity Configuration (NCC) tool provides centralised management of private endpoints across workspaces, reducing operational overhead whilst supporting regulated workloads.

“When accessing their data, many of our customers want dedicated and private connectivity,” according to Vukola Milenkovic, Databricks Solution Manager at Erste Group, one of Central and Eastern Europe’s largest banking groups. The serverless Private Link capability supports financial services organisations processing customer data under European banking supervision requirements whilst eliminating the need for always-on compute resources.

The implementation pattern uses Private Endpoints for Databricks workspace connectivity and Azure Storage accounts, with the serverless compute plane accessing data entirely through private networking. Starting December 2024, Databricks charges for networking costs when serverless workloads connect to customer resources, making cost modelling essential for large-scale deployments. However, Private Link connections from Databricks SQL Serverless carry no additional data processing charges, reducing TCO compared to persistent cluster architectures.

Source: Databricks Blog – Azure Private Link GA Announcement

Security Posture and Compliance Acceleration

Private Endpoints constitute a core component of Microsoft’s Zero Trust architecture for Azure. They directly address three Zero Trust principles: eliminating reliance on network perimeter security through “never trust, always verify” approaches, preventing lateral movement to public PaaS endpoints under “assume breach” models, and enabling granular resource-level access control supporting “least privilege” principles. Microsoft’s Zero Trust documentation explicitly recommends Private Endpoints for securing PaaS service access across storage, SQL, Key Vault, and all supported services.

For regulated industries, Private Endpoints materially accelerate compliance. PCI-DSS network segmentation requirements under Requirement 1 are satisfied because cardholder data environments access storage and databases exclusively via private IPs within the VNet. HIPAA technical safeguards for PHI in transit benefit from traffic never leaving Microsoft’s backbone network. ISO 27001 controls for communications security (A.13) and access control (A.9) are addressed through private networking and resource-level granularity. SOC 2 logical access controls (CC6.1) and external threat reduction (CC6.6) align directly with the elimination of public endpoints. The CIS Microsoft Azure Foundations Benchmark, commonly used in SOC 2 audits, specifically recommends disabling public network access when Private Endpoints are configured.

The data exfiltration prevention capability represents the strongest security differentiator versus Service Endpoints. Because each Private Endpoint maps to a specific resource instance, a compromised workload connected to Storage Account A via Private Endpoint cannot exfiltrate data to an attacker-controlled Storage Account B. The endpoint simply doesn’t provide connectivity to any other resource. Service Endpoints, by contrast, provide access to all instances of a service type within a region. Azure Policy can further restrict which resources Private Endpoints may target, and services like Synapse Analytics and Data Factory offer built-in exfiltration protection through managed private endpoints that block connections to unapproved tenants.

One critical gap exists in monitoring capabilities. NSG flow logs cannot capture traffic at the Private Endpoint NIC, meaning traffic can only be recorded at the source VM, creating a visibility blind spot for security operations teams. The newer VNet flow logs, which replace NSG flow logs retiring September 2027, improve this situation by including a PrivateEndpointResourceId field in traffic analytics. Microsoft Defender for Cloud provides built-in recommendations tracking Private Endpoint adoption per service and maps findings to regulatory compliance frameworks including PCI-DSS, HIPAA, ISO 27001, and SOC 2.

The architectural approach aligns with patterns documented in our examination of Azure’s resilience building blocks, where network-level isolation complements availability zones and region pairs to create defence-in-depth architectures meeting enterprise reliability requirements.

Implementation Roadmap: Pilot to Enterprise-Wide

Phase 1: Foundation (Weeks 1-4)

Deploy the DNS infrastructure first, as this determines whether Private Endpoints will function correctly. Create centralised Private DNS zones in the connectivity subscription for your initial service types. The essential zones for most organisations include privatelink.blob.core.windows.net for Storage Accounts, privatelink.database.windows.net for SQL Database, privatelink.vaultcore.azure.net for Key Vault, privatelink.azurewebsites.net for App Service, and privatelink.redis.cache.windows.net for Redis Cache. Deploy Azure DNS Private Resolver if hybrid resolution is needed, with one inbound endpoint in a dedicated resolver subnet and one outbound endpoint for conditional forwarding. Link zones to the hub VNet and validate resolution from a test VM before creating any Private Endpoints.
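Before creating any endpoints, it helps to confirm that the foundation is complete. The Python sketch below compares the essential zones listed above against what has been created and linked to the hub VNet. It assumes those two lists come from your own inventory export, and `foundation_gaps` is a hypothetical helper.

```python
# Essential zones named in the text, keyed by the service they serve.
ESSENTIAL_ZONES = {
    "Storage (blob)": "privatelink.blob.core.windows.net",
    "SQL Database": "privatelink.database.windows.net",
    "Key Vault": "privatelink.vaultcore.azure.net",
    "App Service": "privatelink.azurewebsites.net",
    "Redis Cache": "privatelink.redis.cache.windows.net",
}


def foundation_gaps(created_zones, hub_linked_zones):
    """Return (missing, unlinked): required zones that do not exist yet,
    and required zones that exist but are not linked to the hub VNet."""
    required = set(ESSENTIAL_ZONES.values())
    created = {z.lower() for z in created_zones}
    linked = {z.lower() for z in hub_linked_zones}
    missing = sorted(required - created)
    unlinked = sorted((required & created) - linked)
    return missing, unlinked


if __name__ == "__main__":
    missing, unlinked = foundation_gaps(
        created_zones=[
            "privatelink.blob.core.windows.net",
            "privatelink.database.windows.net",
        ],
        hub_linked_zones=["privatelink.blob.core.windows.net"],
    )
    print("missing:", missing)
    print("unlinked:", unlinked)
```

An empty result for both lists is a reasonable gate for moving from Phase 1 to the pilot.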

Configure Azure Policy in Audit mode during this phase. Deploy the three-policy set covering public endpoint denial, privatelink DNS zone creation restrictions, and DNS zone group automation. Run Policy compliance scans across all subscriptions to understand the current state of public PaaS access. This baseline becomes critical for measuring progress during later phases and identifying resources requiring migration.

Phase 2: Pilot (Weeks 5-8)

Select 3-5 non-critical services across different service types for the pilot. A typical selection includes one Storage Account, one SQL Database, and one Key Vault. Create Private Endpoints in a single spoke VNet that serves a development or test workload. Enable DNS zone groups for automated record management. Test access systematically from within the VNet, from peered spokes, and from on-premises networks if hybrid connectivity is configured.

Document every DNS resolution failure during this phase. These failures inform your troubleshooting runbook and identify gaps in the DNS architecture. Common issues include conditional forwarders pointing to privatelink zones instead of public zones, missing VNet links preventing resolution, and public resources returning NXDOMAIN when Private DNS zones exist without corresponding endpoints. Switching Azure Policy from Audit to Deny for new deployments in the pilot subscription validates that the automation functions correctly.

Phase 3: Controlled Rollout (Weeks 9-16)

Expand to production workloads one service type at a time. Prioritise services handling sensitive data, including databases storing PII, Key Vaults with encryption keys, and storage accounts containing regulated content. Each service type requires validation of access patterns before proceeding to the next. For Azure Storage, this means testing blob, file, table, and queue access separately (plus dfs and web where they are used), as each requires its own Private Endpoint.

Implement Infrastructure-as-Code modules during this phase. Whether using Bicep, Terraform, or ARM templates, create reusable patterns for Private Endpoint deployment including DNS zone group configuration, NSG rules if using network policies, and tagging for cost allocation. A critical implementation detail: if using DeployIfNotExists Policy for DNS integration, omit DNS zone group configuration from your IaC to avoid conflicts between Policy-driven and template-driven DNS record creation.

Update CI/CD pipelines to use self-hosted agents within VNets, as Microsoft-hosted agents cannot reach private endpoints. Deploy VMSS-based agent pools in the hub VNet with access to all required Private Endpoints. Budget approximately £11-30 monthly per agent depending on VM size and uptime requirements. Configure service connections in Azure DevOps or GitHub Actions to authenticate using managed identities rather than service principals where possible.

Phase 4: Enterprise-Wide Enforcement (Weeks 17+)

Enable Private Endpoints for all supported services across the Azure estate. Keep Azure Policy in Deny mode for new deployments and add Audit evaluation of existing resources, generating a gap analysis of resources still using public endpoints. Create a prioritised migration plan based on data sensitivity, compliance requirements, and operational complexity. Services with simple access patterns like Key Vault migrate quickly, whilst services with complex integrations like Azure SQL Managed Instance or Databricks require more planning.

Disable public network access on each PaaS resource after validating private connectivity. This is a separate per-resource configuration that must be enforced through Azure Policy or automated via PowerShell scripts. Key Vault, Storage Accounts, SQL Databases, and most other services provide a publicNetworkAccess property that should be set to Disabled once Private Endpoints are operational.

Implement ongoing governance processes during this phase. Audit idle Private Endpoints monthly, as each costs £5.40 regardless of traffic volume. Monitor data processing volumes to optimise tier usage if traffic patterns suggest moving from first-petabyte to higher-volume pricing tiers. Update DNS zones as Azure adds new Private Link-supported services, maintaining the centralised DNS architecture. Review and update Azure Policy definitions quarterly to incorporate new service types and refined controls based on operational experience.
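The monthly idle-endpoint audit can be approximated with a small Python script. It assumes you have already exported per-endpoint traffic totals for the month into a dictionary. The export format, the endpoint names, and both function names are hypothetical.

```python
ENDPOINT_MONTHLY = 5.40  # fixed charge per endpoint, regardless of traffic


def idle_endpoints(bytes_by_endpoint, threshold_bytes=0):
    """Return the sorted names of endpoints whose monthly traffic is at or
    below the threshold."""
    return sorted(
        name for name, total in bytes_by_endpoint.items() if total <= threshold_bytes
    )


def monthly_waste(idle):
    """Fixed monthly charge paid for the given idle endpoints."""
    return len(idle) * ENDPOINT_MONTHLY


if __name__ == "__main__":
    traffic = {
        "pe-sales-blob": 12_500_000_000,
        "pe-legacy-sql": 0,
        "pe-test-kv": 0,
    }
    idle = idle_endpoints(traffic)
    print(idle, round(monthly_waste(idle), 2))
```

An idle endpoint is not always safe to delete, since some back disaster-recovery or seasonal workloads, so treat the output as a review list rather than a deletion list.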

Decision Framework: When to Use Private Endpoints

Private Endpoints justify their complexity and cost when organisations face specific technical or regulatory drivers. For workloads subject to PCI-DSS, HIPAA, ISO 27001, or SOC 2 compliance, Private Endpoints provide documented technical controls that accelerate certification. Auditors increasingly expect network isolation for regulated data, making Private Endpoints a compliance requirement rather than optional security enhancement. Organisations processing personal data under UK GDPR benefit from demonstrable technical safeguards for data in transit, addressing Article 32 security of processing requirements.

For hybrid connectivity scenarios requiring on-premises access to Azure PaaS services, Private Endpoints over ExpressRoute or VPN provide the only architecture that avoids public internet routing. Service Endpoints cannot extend to on-premises networks, making Private Endpoints essential for hybrid cloud architectures. Organisations with multiple Azure subscriptions or complex hub-and-spoke topologies benefit from Private Endpoints’ resource-level granularity compared to Service Endpoints’ region-wide service-type access.

Data exfiltration prevention requirements drive Private Endpoint adoption in sensitive environments. Financial services organisations handling customer financial data, healthcare providers managing PHI, and government agencies processing classified information require architectural controls preventing data transfer to unauthorised destinations. Private Endpoints’ per-resource connectivity model provides this control at the network layer, complementing application-layer security controls.

Avoid Private Endpoints when workloads lack these specific drivers. Internal development environments with no regulatory requirements often find Service Endpoints adequate, providing free backbone-routed access without DNS overhead. Public-facing applications that must accept internet traffic still benefit from Private Endpoints on backend services, but the frontend necessarily retains public endpoints. Rapidly scaling ephemeral resources like short-lived containers or serverless functions that spin up and tear down frequently may not justify the endpoint creation overhead and DNS propagation delay.

Organisations lacking DNS management expertise should carefully weigh the operational burden. Every new Azure service type added to Private Link requires a new DNS zone and conditional forwarder update across the entire DNS infrastructure. At scale, this becomes a continuous operational task requiring coordination between networking, security, and platform teams. The b.telligent consultancy’s documented advice resonates across multiple implementations: decide on private networking early in your Azure journey, because retrofitting later is significantly more complex and often doubles the engineering effort compared to building private-first architectures from the beginning.
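To make the per-service DNS work concrete, the sketch below lists the zone and forwarder changes implied by bringing additional service types behind Private Link. The three zone names are the standard public-cloud Private Link zones for Blob Storage, Azure SQL Database, and Key Vault. The function name `dns_changes_for` and the data shapes are assumptions made for illustration. The sketch also assumes that on-premises conditional forwarders target the public zone name (for example `blob.core.windows.net`) rather than the `privatelink` zone, which is how Microsoft's DNS guidance describes it; check the current documentation before relying on this.

```python
# Private Link zone names for a few common services (public Azure cloud only).
PRIVATE_DNS_ZONES = {
    "blob": "privatelink.blob.core.windows.net",
    "sql": "privatelink.database.windows.net",
    "keyvault": "privatelink.vaultcore.azure.net",
}

PREFIX = "privatelink."


def dns_changes_for(services_in_use, zones_already_linked):
    """List (service, private zone, on-prem forwarder) tuples still to be set up.

    services_in_use: iterable of keys from PRIVATE_DNS_ZONES.
    zones_already_linked: set of private zone names that already exist in the hub.
    """
    changes = []
    for service in sorted(services_in_use):
        zone = PRIVATE_DNS_ZONES[service]  # raises KeyError for unknown services
        if zone in zones_already_linked:
            continue
        # Assumption: the on-premises forwarder uses the public zone name.
        forwarder = zone[len(PREFIX):]
        changes.append((service, zone, forwarder))
    return changes


for change in dns_changes_for({"blob", "keyvault"},
                              {"privatelink.blob.core.windows.net"}):
    print(change)
```

Each new service type adds one more row to this table, which is why the work recurs as adoption grows and why centralising the zones in a hub subscription keeps it manageable.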

Key Takeaways

Private Endpoints represent the most architecturally sound path to securing Azure PaaS services at enterprise scale. At approximately £1,200 monthly for 100 endpoints, the infrastructure cost is modest compared to the security and compliance gains. The elimination of public attack surface, built-in data exfiltration prevention, and direct alignment with PCI-DSS, HIPAA, ISO 27001, and SOC 2 requirements provide measurable value that justifies the investment for regulated workloads.

The real cost is operational rather than financial. DNS architecture demands rigorous upfront planning with centralised Private DNS zones, conditional forwarders from on-premises infrastructure, and automation through Azure Policy to maintain consistency at scale. CI/CD pipelines require re-engineering for private access using self-hosted agents within VNets. Infrastructure-as-Code complexity roughly doubles compared to public endpoint configurations. These challenges are solvable at scale, as demonstrated by organisations like Michelin and ELCA IT, but they require commitment to proper architecture rather than tactical implementations.

The decision framework is ultimately straightforward. If your data is regulated, your compliance auditors expect network isolation, or your risk posture demands zero public exposure, Private Endpoints are not optional. They are foundational infrastructure that should be designed into Azure architectures from the beginning rather than retrofitted later. The DNS complexity, operational overhead, and CI/CD pipeline changes are necessary engineering work that enables a production-grade security posture for enterprise Azure deployments.

Organisations successfully deploying Private Endpoints at scale share common characteristics: centralised DNS management in hub subscriptions, Azure Policy automation preventing configuration drift, phased rollouts that validate architecture before enforcement, and Infrastructure-as-Code patterns that embed Private Endpoint best practices into standard deployment templates. These patterns, combined with the technical implementation guidance from Microsoft’s Cloud Adoption Framework, provide a proven path to zero-trust networking at enterprise scale.

Useful Links

- Azure Private Link Official Documentation – Microsoft’s comprehensive technical reference covering Private Link, Private Endpoints, and Private Link Service

- Private Link and DNS Integration at Scale – Cloud Adoption Framework – Microsoft’s enterprise DNS architecture patterns and Azure Policy automation strategies

- Azure Private Endpoint DNS Integration Scenarios – Detailed DNS resolution patterns for hub-and-spoke, on-premises, and multi-tenant scenarios

- Azure Private Link Pricing Calculator – Official pricing details for Private Endpoints and Private Link Service

- Private Link Service Availability – Current list of Azure services supporting Private Endpoints, updated regularly by Microsoft

- KPMG UK Deep Dive into Azure Private Endpoints – Detailed technical analysis from KPMG UK’s engineering team on implementation challenges and solutions

- Azure Private Link in Hub-and-Spoke Networks – Azure Architecture Center reference implementation for hub-and-spoke Private Endpoint placement

- Azure DNS Private Resolver Overview – Technical documentation for Microsoft’s managed DNS resolver service for hybrid scenarios

- Apply Zero Trust to Azure PaaS Services – Microsoft’s Zero Trust guidance showing how Private Endpoints fit into broader security architecture

- Azure Private Link vs Service Endpoints Comparison – Community technical analysis comparing capabilities and use cases