Your weekly dose of actionable cloud wisdom to start the week right

The Problem

Your entire business runs on AWS, and then eu-west-1 goes down for 6 hours. Or Azure has a global authentication outage. Or Google Cloud has a networking issue. You’re completely offline, losing revenue every minute, whilst your competitors who planned for multi-cloud resilience keep serving customers.

The Solution

Implement a multi-cloud backup strategy that protects against provider outages, vendor lock-in, and regional disasters. You don’t need to run everything everywhere – just ensure you can recover when your primary cloud provider has a bad day.

Multi-Cloud Backup Strategies:

1. Cross-Cloud Database Backups

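# AWS RDS to Azure Blob Storage (my-database and the values in angle brackets are placeholders)

SNAPSHOT_ID=daily-backup-$(date +%Y%m%d)

aws rds create-db-snapshot --db-instance-identifier my-database --db-snapshot-identifier "$SNAPSHOT_ID"

aws rds wait db-snapshot-available --db-snapshot-identifier "$SNAPSHOT_ID"

# Export the snapshot to S3 first (a snapshot cannot be copied out of AWS directly)

aws rds start-export-task --export-task-identifier "export-$SNAPSHOT_ID" \
  --source-arn "arn:aws:rds:eu-west-1:<account-id>:snapshot:$SNAPSHOT_ID" \
  --s3-bucket-name my-rds-exports \
  --iam-role-arn "<export-role-arn>" --kms-key-id "<kms-key-id>"

# Once the export has finished, copy it to Azure using AzCopy (it reads AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY from the environment)

azcopy copy "https://s3.amazonaws.com/my-rds-exports" "https://backupstorage.blob.core.windows.net/rds-backups/" --recursive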

2. File Sync Across Providers

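# Sync AWS S3 to Google Cloud Storage (gsutil reads the AWS credentials from your boto configuration file)

# Note: -d deletes objects in the destination that no longer exist in the source, so drop it if the backup should keep deleted files

gsutil -m rsync -d -r s3://my-aws-bucket gs://my-gcp-backup-bucket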

# Sync Azure Blob to AWS S3

mkdir -p ./temp-backup   # download-batch expects an existing destination directory

az storage blob download-batch \
  --destination ./temp-backup \
  --source backups \
  --account-name myazurestorage

aws s3 sync ./temp-backup s3://my-cross-cloud-backups/azure-backup/

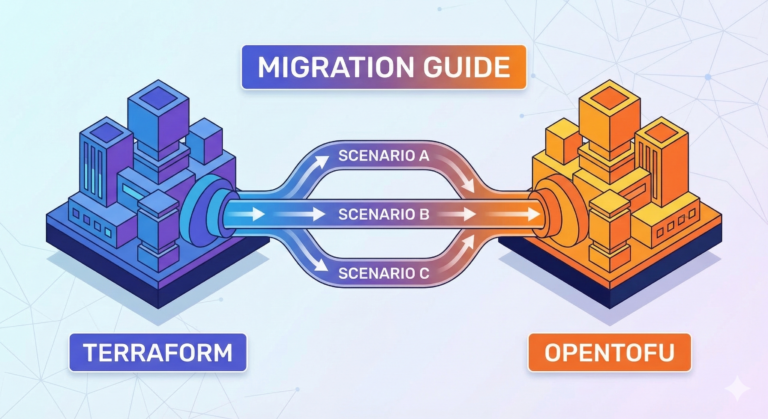

3. Infrastructure as Code Replication

# Terraform modules that work across providers

module "database" {
  source = "./modules/database"

  # AWS configuration
  aws_region         = "eu-west-1"
  aws_instance_class = "db.t3.micro"

  # Azure configuration (dormant until needed)
  azure_location = "UK South"
  azure_sku      = "Basic"

  # GCP configuration (dormant until needed)
  gcp_region = "europe-west2"
  gcp_tier   = "db-f1-micro"
}

4. Application Deployment Automation

# GitHub Actions for multi-cloud deployment

name: Multi-Cloud Backup Deployment

on:
  workflow_dispatch:
    inputs:
      target_cloud:
        description: 'Deploy to which cloud?'
        required: true
        default: 'aws'
        type: choice
        options:
          - aws
          - azure
          - gcp

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - name: Deploy to AWS
        if: github.event.inputs.target_cloud == 'aws'
        run: |
          # AWS deployment commands

      - name: Deploy to Azure
        if: github.event.inputs.target_cloud == 'azure'
        run: |
          # Azure deployment commands

      - name: Deploy to GCP
        if: github.event.inputs.target_cloud == 'gcp'
        run: |
          # GCP deployment commands

Multi-Cloud Strategy Levels

Level 1: Data Backup (Start Here)

- Daily database exports to different cloud provider

- File synchronisation between cloud storage services

- Configuration backups stored in multiple locations

- Documentation of recovery procedures

Level 2: Warm Standby

- Infrastructure code ready to deploy elsewhere

- DNS failover configured for quick switching

- Application deployments tested on backup cloud

- Monitoring of backup systems

Level 3: Active-Active (Advanced)

- Load balancing across cloud providers

- Database replication in real-time

- Automatic failover with health checks

- Cost optimisation across providers
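
To make the health-check idea concrete, here is a minimal sketch of the decision logic behind a failover: poll an endpoint and only declare an outage after several consecutive failed checks, so one slow response doesn't trigger a switch. The function names, the threshold and the interval are all illustrative assumptions, and the sketch deliberately stops before the failover itself (the DNS change or deployment you trigger is specific to your setup).

```shell
#!/bin/bash
# Sketch only: function names and parameters are illustrative, not from any provider's tooling.

# Succeed when the endpoint responds within 5 seconds without an HTTP error (status below 400).
is_healthy() {
  curl --silent --fail --max-time 5 --output /dev/null "$1"
}

# Poll the endpoint and return once it has failed N checks in a row.
# $1 = health-check URL, $2 = consecutive failures required, $3 = seconds between checks
wait_for_outage() {
  failures=0
  while [ "$failures" -lt "$2" ]; do
    if is_healthy "$1"; then
      failures=0                      # any successful check resets the count
    else
      failures=$((failures + 1))
    fi
    # Only pause if another check is still needed
    if [ "$failures" -lt "$2" ]; then
      sleep "$3"
    fi
  done
  return 0
}
```

You might call it as `wait_for_outage https://app.example.com/health 3 30 && ./failover.sh`, where both the URL and `failover.sh` are hypothetical stand-ins for your own health endpoint and failover procedure.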

Why It Matters

- Business Continuity: Keep serving customers during outages

- Negotiating Power: Avoid vendor lock-in and improve contract terms

- Compliance: Some regulations and industry frameworks require off-site or geographically separate backups, so check which ones apply to you

- Risk Management: Protect against provider-specific risks

Try This Week

- Audit your current setup – How dependent are you on one provider?

- Choose your backup cloud – Pick a secondary provider for critical data

- Start with data backup – Export your most critical databases/files

- Document recovery procedures – Write down the steps to restore from backup

Quick Win: Cross-Cloud Storage Sync

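#!/bin/bash
# daily-backup.sh: automated daily sync, run from cron, e.g. 0 2 * * * /path/to/daily-backup.sh
# Assumes azcopy is already authorised (azcopy login, or a SAS token on the destination URL)

set -euo pipefail   # stop at the first failure so a broken run is never logged as a success

STAGING=/var/tmp/backup-staging   # absolute path, because cron does not run from your project directory
mkdir -p "$STAGING"

# Export critical data
aws s3 sync s3://production-data "$STAGING/"
azcopy copy "$STAGING/*" "https://backup.blob.core.windows.net/daily-backups/" --recursive

# Clean up local staging
rm -rf "${STAGING:?}"/*

# Log success
echo "$(date): Cross-cloud backup completed" >> /var/log/backup.log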

Cost-Effective Multi-Cloud Tips

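- Use cold storage for backups (Amazon S3 Glacier, Azure Blob Storage archive tier, Google Cloud Storage Coldline or Archive), bearing in mind slower retrieval and minimum storage periods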

- Compress data before cross-cloud transfer to reduce egress costs

- Schedule transfers during off-peak hours so backups do not compete with production traffic (egress is billed per GB, whatever the time of day)

- Monitor costs – set up billing alerts for backup storage
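
A quick back-of-the-envelope calculation shows why compression is worth the effort. The helper below is a small sketch that multiplies the transfer size by a per-GB price; the function name is made up for illustration, and the 0.09 USD per GB used in the example is an assumed figure, so substitute the current rate from your provider's price list.

```shell
#!/bin/bash
# Sketch only: estimate the egress charge for a single transfer.
# $1 = data size in GB, $2 = price per GB in USD (an assumption you supply yourself)
estimate_egress_cost() {
  # LC_ALL=C keeps the decimal point consistent regardless of the system locale
  LC_ALL=C awk -v gb="$1" -v rate="$2" 'BEGIN { printf "%.2f\n", gb * rate }'
}
```

With the assumed rate, `estimate_egress_cost 500 0.09` prints `45.00`, whilst the same data compressed to 200 GB gives `estimate_egress_cost 200 0.09`, which prints `18.00`.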

Recovery Testing

- Monthly tests: Restore a small dataset from backup cloud

- Quarterly drills: Full application recovery exercise

- Document everything: Time to recover, issues encountered, lessons learned

- Automate testing: Use scripts to verify backup integrity
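
One simple way to automate an integrity check is to restore a sample from the backup cloud into a scratch directory and compare checksums against the original. The sketch below assumes both copies are available as local directories and that `sha256sum` is installed (standard on most Linux systems; on macOS you would swap in `shasum -a 256`). The function names are illustrative.

```shell
#!/bin/bash
# Sketch only: compare a restored backup against the original data by checksum.

# Print "<sha256>  <relative path>" for every file under a directory, sorted by path
# so that two manifests can be compared directly.
checksum_manifest() (
  cd "$1" || exit 1
  find . -type f -exec sha256sum {} + | LC_ALL=C sort -k 2
)

# Succeed only if both directories exist and hold the same files with the same contents.
verify_backup() {
  [ -d "$1" ] && [ -d "$2" ] || return 1
  [ "$(checksum_manifest "$1")" = "$(checksum_manifest "$2")" ]
}
```

For example, `verify_backup ./original-data ./restored-data && echo "Backup verified"` (both paths are placeholders). Because the check returns a non-zero exit code on any mismatch, it slots easily into a cron job or CI pipeline.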

Common Pitfalls to Avoid

- Egress costs: Moving data out of clouds can be expensive

- Authentication complexity: Manage credentials securely across providers

- Data sovereignty: Ensure backups comply with local regulations

- Version compatibility: Test that backups work with current application versions
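
On the credentials point, a small pre-flight check can stop a backup job from failing halfway through because one provider's secrets were never loaded. This is only a sketch: the function name is made up, and which variables you check depends on how you authenticate with each provider. It reports names only and never prints values, so the output is safe to log.

```shell
#!/bin/bash
# Sketch only: list the required environment variables that are unset or empty.
# Prints variable names (never their values) and returns non-zero if any are missing.
missing_credentials() {
  status=0
  for name in "$@"; do
    if [ -z "$(printenv "$name")" ]; then
      echo "$name"
      status=1
    fi
  done
  return "$status"
}
```

A backup script could start with `missing_credentials AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY || exit 1`, extended with whichever variables your Azure or GCP tooling expects.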

Pro Tip: Start small with just your most critical data. It’s better to have the most important 10% of your data backed up in a second cloud than to leave 100% of it at risk from a single provider outage.

Successfully recovered from a cloud outage using multi-cloud backups? I’d love to hear your war stories and lessons learned – they make the best case studies!