The landscape of cloud computing has evolved dramatically over the past decade, presenting developers and architects with an abundance of choices for deploying applications. Google Cloud Platform’s compute offerings exemplify this evolution, and three of its services (Cloud Run, App Engine, and Google Kubernetes Engine, or GKE) offer distinct yet complementary ways to run applications. Each serves different needs, scales differently, and requires a different level of operational overhead.

The challenge lies not in the availability of options, but in selecting the most appropriate one for your specific requirements. This decision impacts everything from development velocity and operational complexity to cost optimisation and long-term scalability. Understanding the nuances of each service enables teams to make informed architectural decisions that align with both current needs and future growth trajectories.

Understanding the Fundamental Approaches

Before diving into detailed comparisons, it’s essential to grasp the philosophical differences underpinning each service. These aren’t merely technical variations; they represent distinct approaches to application deployment and management.

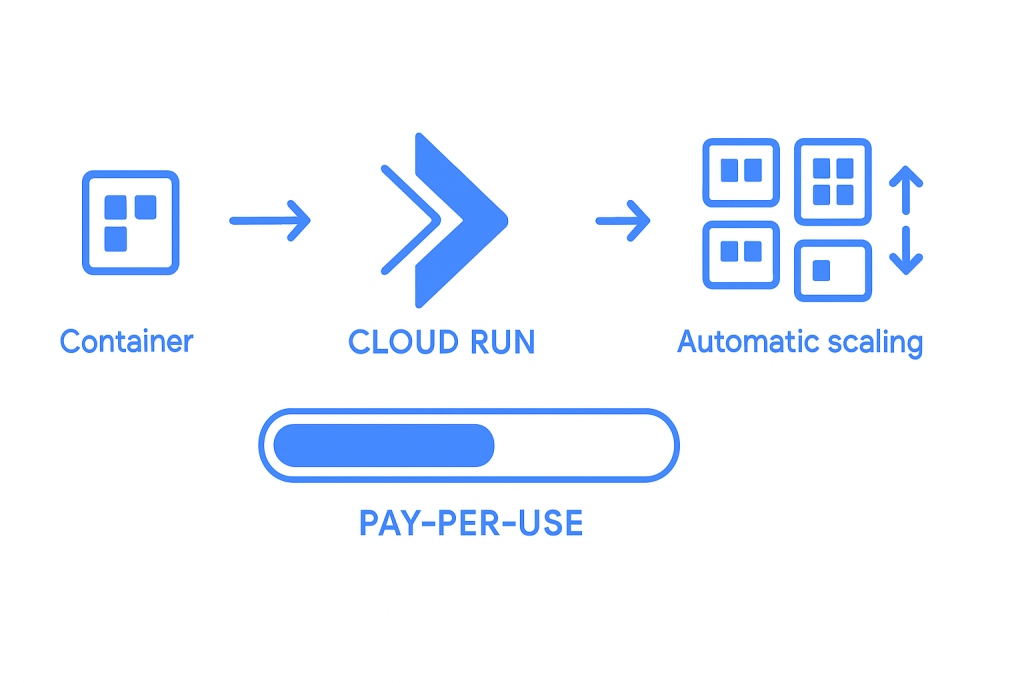

Cloud Run: Serverless Container Platform

Cloud Run embodies the serverless paradigm whilst providing the flexibility of containerisation. It abstracts away infrastructure management entirely, automatically scaling from zero to handle traffic spikes and scaling back down when demand subsides. The service charges only for actual usage, making it particularly attractive for workloads with variable traffic patterns.

The appeal of Cloud Run lies in its simplicity. Developers package their applications in containers and deploy them without concerning themselves with server management, load balancing, or scaling configuration. The platform handles these operational aspects automatically, allowing teams to focus entirely on application logic and user experience.
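
To make “package the application in a container” concrete, here is a minimal sketch of a service that satisfies Cloud Run’s basic container contract: it listens on the port supplied in the `PORT` environment variable, falling back to 8080 for local runs. It uses only the Python standard library. The handler class, response body, and `make_server` helper are illustrative names, not part of any Google API. Packaging it needs little more than a Dockerfile that copies the file into a Python base image and runs it.

```python
import os
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from typing import Optional


class HelloHandler(BaseHTTPRequestHandler):
    """Hypothetical handler: answers every GET with a short plain-text body."""

    def do_GET(self) -> None:
        body = b"Hello from Cloud Run\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format: str, *args) -> None:
        # Suppress the default per-request stderr line to keep logs quiet.
        pass


def make_server(port: Optional[int] = None) -> ThreadingHTTPServer:
    # Cloud Run tells the container which port to listen on via PORT.
    if port is None:
        port = int(os.environ.get("PORT", "8080"))
    # Listen on all interfaces, not just localhost, so the platform can reach it.
    return ThreadingHTTPServer(("0.0.0.0", port), HelloHandler)


if __name__ == "__main__":
    make_server().serve_forever()
```

Nothing here is Cloud Run specific beyond reading `PORT`, which is why the same container can later run on GKE or any other container platform.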

However, this abstraction comes with trade-offs. While Cloud Run excels at stateless, HTTP-driven workloads, it has limitations in terms of execution time, memory allocation, and networking capabilities. Understanding these constraints is crucial for determining whether Cloud Run aligns with your application’s requirements.

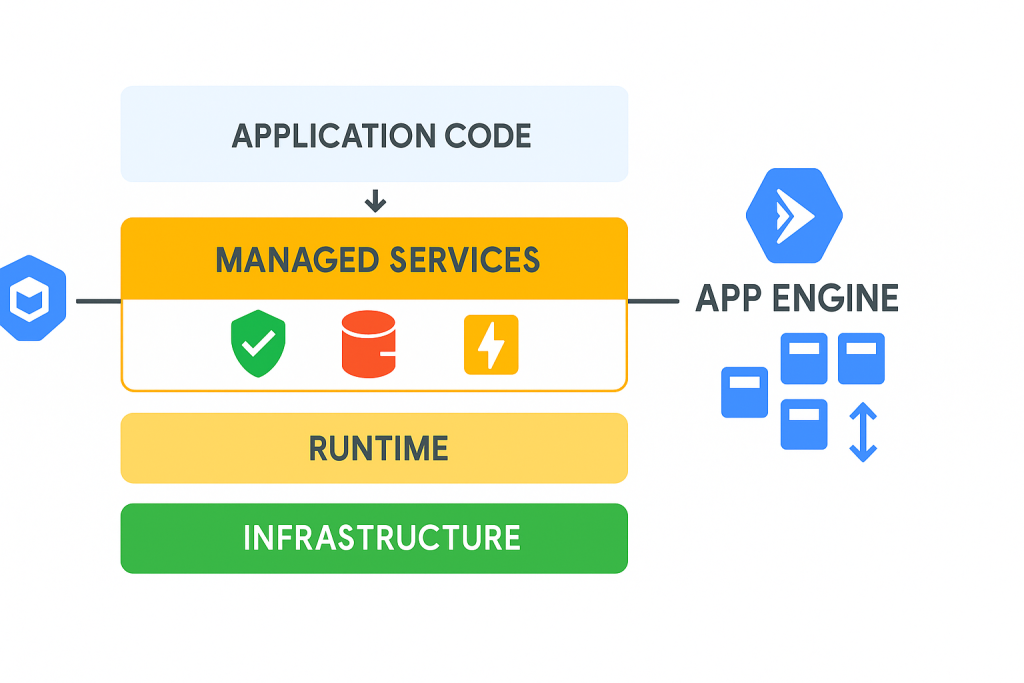

App Engine: Platform-as-a-Service Heritage

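App Engine represents Google’s original Platform-as-a-Service offering, launched in 2008 as one of the first major PaaS solutions. It provides a higher level of abstraction than Cloud Run and has long offered built-in services for common application needs such as user authentication, data storage, and task queues. Google now refers to these as legacy bundled services and recommends standalone products such as Firestore and Cloud Tasks for new applications.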

The service comes in two flavours: Standard Environment and Flexible Environment. The Standard Environment provides faster scaling and built-in services but restricts runtime choices and system access. The Flexible Environment offers more runtime flexibility and system access but with longer startup times and different scaling characteristics.

App Engine’s strength lies in its comprehensive managed services ecosystem. For applications that can leverage these built-in capabilities, App Engine can significantly accelerate development and reduce operational overhead. The platform’s automatic scaling, version management, and traffic splitting capabilities make it particularly well-suited for web applications and APIs.

Google Kubernetes Engine: Enterprise-Grade Orchestration

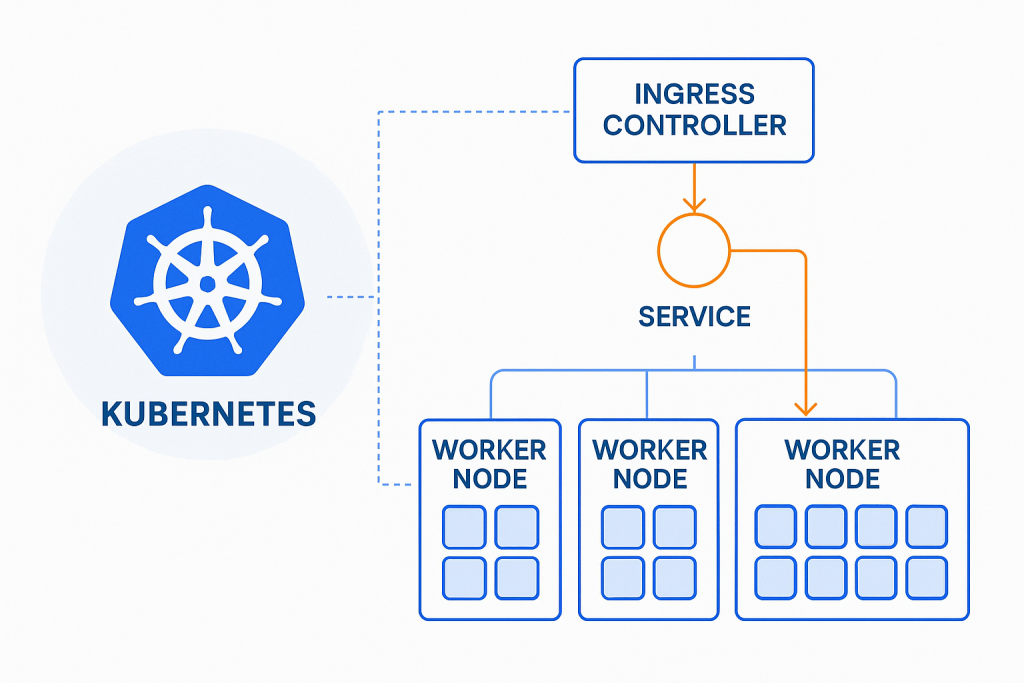

GKE provides a managed Kubernetes service that offers the full power and flexibility of container orchestration whilst reducing the operational burden of managing Kubernetes clusters. It represents the most flexible but also most complex option among the three services.

The fundamental advantage of GKE lies in its ability to handle complex, multi-tier applications with sophisticated networking, storage, and scaling requirements. It supports stateful applications, batch processing, machine learning workloads, and virtually any containerised application architecture.

However, this flexibility comes with increased complexity. Teams choosing GKE must understand Kubernetes concepts, manage application manifests, and handle cluster operations. While GKE abstracts away much of the underlying infrastructure management, it still requires significant Kubernetes expertise to operate effectively.

Detailed Service Comparison

Scaling Characteristics and Performance

The scaling behaviour of each service reflects its underlying architecture and intended use cases. These differences have profound implications for application performance and cost optimisation.

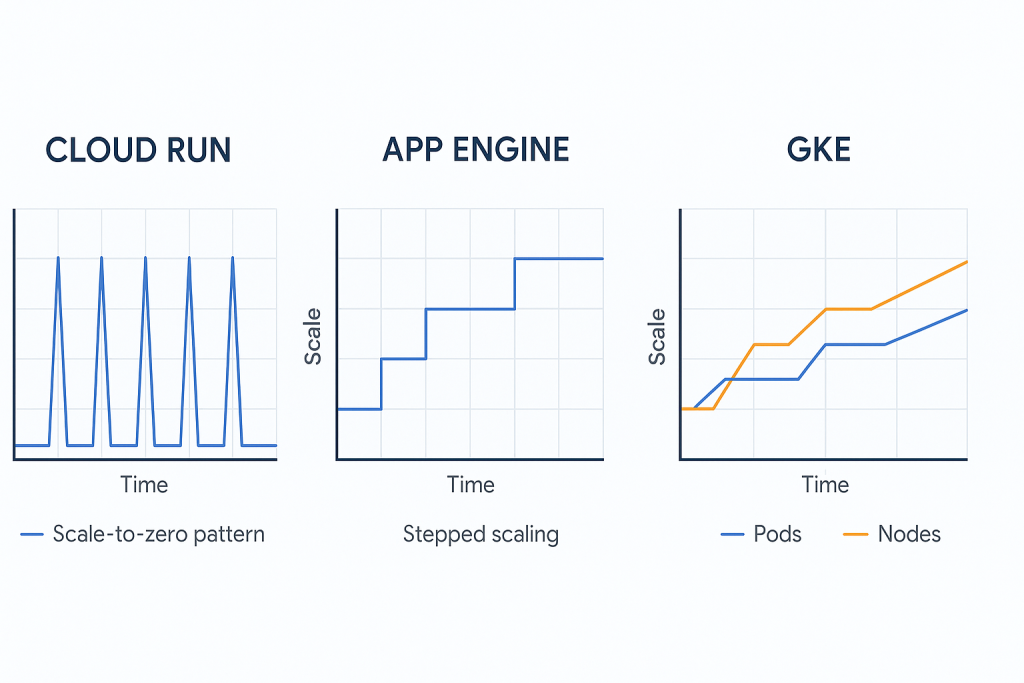

Cloud Run’s scaling model is particularly elegant for HTTP workloads. It can scale from zero to many instances within seconds, up to a configurable maximum, adjusting automatically to incoming request volume. Each instance can handle multiple concurrent requests, up to a configurable concurrency limit, with the platform distributing load automatically. The scale-to-zero capability means applications incur no compute costs during idle periods, making Cloud Run exceptionally cost-effective for intermittent workloads.
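
Per-instance concurrency is what makes this model efficient. A rough estimate of how many instances a steady load needs follows from Little’s law: the average number of requests in flight equals the arrival rate multiplied by the average latency, and dividing that by the concurrency setting gives instances. The sketch below is a back-of-the-envelope model that assumes steady traffic and fully packed instances. It is not Cloud Run’s actual autoscaler, which also takes CPU utilisation into account. The function name is hypothetical, and its defaults (80 concurrent requests, 100 maximum instances) are assumed to mirror Cloud Run’s default service settings.

```python
import math


def estimate_instances(requests_per_second: float,
                       avg_latency_seconds: float,
                       concurrency: int = 80,
                       max_instances: int = 100) -> int:
    """Rough instance count for steady traffic (hypothetical helper).

    Little's law: requests in flight = arrival rate x average latency.
    Each instance holds up to `concurrency` requests at once.
    """
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    in_flight = requests_per_second * avg_latency_seconds
    if in_flight == 0:
        return 0  # idle service: scale to zero
    needed = math.ceil(in_flight / concurrency)
    # The service never exceeds its configured maximum.
    return min(needed, max_instances)
```

With a concurrency of 80, 200 requests per second at 500 ms average latency fits in about two instances. Forcing a concurrency of 1 would need about 100, which in this model is also the ceiling.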

The service imposes certain limits: a maximum request timeout of 60 minutes, up to 32 GiB of memory per instance, and, in the default configuration, CPU allocated only while requests are being processed. These constraints make Cloud Run ideal for stateless, request-response workloads. They make it a poor fit for long-running background processes or for connections (such as WebSockets or streaming gRPC calls) that must stay open longer than the request timeout.

App Engine’s scaling behaviour varies between its environments. The Standard Environment can scale to zero and start new instances in seconds, similar to Cloud Run but with tighter resource constraints. The Flexible Environment runs on Compute Engine virtual machines, always keeps at least one instance running, and takes minutes rather than seconds to start new instances. In exchange, it provides more flexibility in terms of runtime and system access.

App Engine Standard excels at handling sudden traffic spikes for web applications. It combines rapid scaling with bundled services for caching, session handling, and user authentication on the runtimes that support them. Its automatic scaling considers factors beyond simple request volume, including pending-request latency, concurrent requests per instance, and CPU utilisation, all of which can be tuned in the application’s configuration.

GKE’s scaling operates at multiple levels: cluster-level node scaling and pod-level horizontal and vertical scaling. The Cluster Autoscaler adds nodes when pods cannot be scheduled for lack of capacity and removes underutilised ones. The Horizontal Pod Autoscaler adjusts the number of application replicas based on CPU utilisation, memory usage, or custom metrics.

This multi-tier scaling approach provides fine-grained control over resource allocation and cost optimisation. However, it requires careful configuration and monitoring to ensure optimal performance and cost efficiency. GKE also supports Vertical Pod Autoscaling, which automatically adjusts resource requests and limits based on actual usage patterns.
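
The Kubernetes documentation gives the core of the Horizontal Pod Autoscaler’s calculation as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). A default 10% tolerance applies, and no scaling happens while the ratio stays inside it. The sketch below reproduces only that arithmetic, to show how a metric above target turns into new replicas. The function name and the default replica bounds are illustrative. The real controller also applies stabilisation windows, readiness handling, and other safeguards.

```python
import math


def hpa_desired_replicas(current_replicas: int,
                         current_metric: float,
                         target_metric: float,
                         min_replicas: int = 1,
                         max_replicas: int = 10,
                         tolerance: float = 0.1) -> int:
    """Simplified Horizontal Pod Autoscaler replica calculation.

    Implements desired = ceil(current * currentMetric / targetMetric) with a
    tolerance band around 1.0; ignores stabilisation windows, pod readiness,
    and missing-metric handling that the real controller applies.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: no change
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured replica range (hypothetical defaults above).
    return max(min_replicas, min(desired, max_replicas))
```

For example, four replicas averaging 90% CPU against a 60% target scale to six. At 63% against the same target, the ratio falls inside the tolerance band and the count stays at four.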

Development and Deployment Experience

The development experience varies significantly across the three platforms, reflecting their different abstraction levels and target audiences.

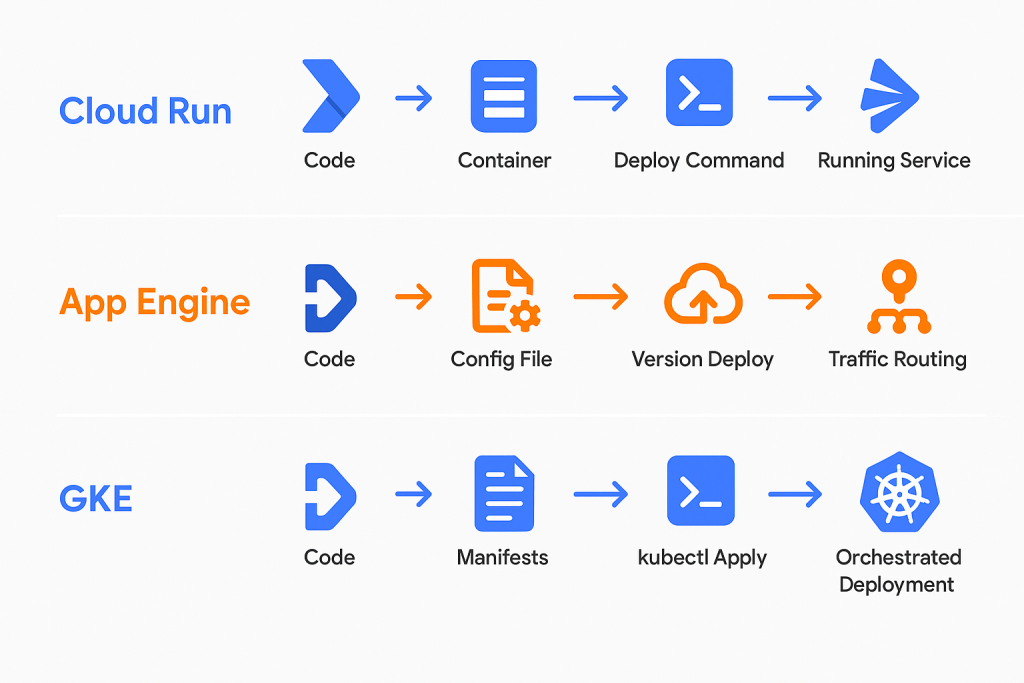

Cloud Run provides the most streamlined deployment experience. Developers build container images using standard Docker workflows, or have one built from source, and deploy them with a single command (`gcloud run deploy`). The platform automatically handles service configuration, load balancing, and HTTPS endpoints with managed TLS certificates. The integration with Cloud Build enables seamless CI/CD pipelines that build and deploy applications directly from source code repositories.

The service supports blue-green deployments and gradual traffic migration through its revision management system. Each deployment creates a new revision, and traffic can be split between revisions for testing or gradual rollouts. This approach simplifies deployment strategies whilst preserving the ability to roll back quickly if issues arise.
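
A gradual rollout is easiest to reason about as a sequence of traffic splits, each summing to 100%. The sketch below generates such a plan for moving traffic from an old revision to a new one. It also formats each step as `REVISION=PERCENT` pairs, the shape accepted by `gcloud run services update-traffic --to-revisions`. The revision names, step percentages, and helper names are hypothetical.

```python
from typing import Dict, List, Sequence


def rollout_plan(old_revision: str,
                 new_revision: str,
                 steps: Sequence[int] = (10, 50, 100)) -> List[Dict[str, int]]:
    """Return one traffic split per step, moving load to new_revision.

    Each split maps revision name -> percentage and always sums to 100,
    because a service's traffic assignments must total 100%.
    """
    plan: List[Dict[str, int]] = []
    previous = 0
    for pct in steps:
        if not previous < pct <= 100:
            raise ValueError("steps must increase strictly and stay within 1..100")
        split = {new_revision: pct}
        if pct < 100:
            split[old_revision] = 100 - pct
        plan.append(split)
        previous = pct
    return plan


def to_revisions_flag(split: Dict[str, int]) -> str:
    # REVISION=PERCENT pairs, comma-separated, for --to-revisions.
    return ",".join(f"{rev}={pct}" for rev, pct in split.items())
```

Between steps, a team would typically watch error rates and latency for the new revision. Rolling back amounts to applying the previous step’s split again.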

App Engine’s deployment model centres around versions and services. Applications are deployed as versions within services, with traffic routing controlled at the version level. This model facilitates A/B testing, canary deployments, and feature flagging without additional infrastructure complexity.
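
App Engine can split traffic between versions by IP address, by cookie, or randomly. Cookie-based splitting keeps each user on the same version, and that consistency is what makes an A/B comparison meaningful. The sketch below illustrates the general idea of sticky, weighted assignment using a stable hash of a user identifier. It is not App Engine’s internal algorithm, and the version names and weights are hypothetical.

```python
import hashlib
from typing import Dict


def assign_version(user_id: str, weights: Dict[str, float]) -> str:
    """Deterministically map a user to a version according to weights.

    The same user_id always lands in the same bucket, so a user keeps seeing
    one version for as long as the weights stay unchanged (sticky assignment).
    """
    # Sort for a stable order and ignore versions with no traffic share.
    versions = [(v, w) for v, w in sorted(weights.items()) if w > 0]
    if not versions:
        raise ValueError("at least one version needs a positive weight")
    total = sum(w for _, w in versions)
    # Python's built-in hash() is salted per process, so use SHA-256 instead.
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    # Top 53 bits -> exact float in [0, 1) that can never round up to 1.0.
    point = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    cumulative = 0.0
    for version, weight in versions:
        cumulative += weight / total
        if point < cumulative:
            return version
    # Floating-point rounding can leave the final bound a hair below 1.0.
    return versions[-1][0]
```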

The Standard Environment’s deployment process is particularly streamlined for supported runtimes, requiring only application code and a simple configuration file. The platform handles dependency management, runtime provisioning, and service configuration automatically. However, this simplicity comes with restrictions on runtime modifications and system-level access.

GKE deployments involve Kubernetes manifests that define application configurations, resource requirements, and networking policies. While this approach provides maximum flexibility, it requires an understanding of Kubernetes concepts such as Deployments, Services, ConfigMaps, and Secrets.
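
For readers new to Kubernetes, the sketch below shows the minimum shape of a Deployment and a matching Service. It builds them as Python dictionaries and prints JSON, which `kubectl apply -f` accepts as readily as YAML. The app name, image path, ports, and resource values are placeholders. The detail that matters is that the Deployment’s selector, its pod template labels, and the Service’s selector must agree.

```python
import json
from typing import Any, Dict, List


def deployment_and_service(name: str, image: str, port: int = 8080,
                           replicas: int = 2) -> List[Dict[str, Any]]:
    """Build a minimal Deployment plus a ClusterIP Service (placeholder values)."""
    labels = {"app": name}
    deployment = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template's labels.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "ports": [{"containerPort": port}],
                        # Requests drive scheduling; limits cap usage.
                        "resources": {
                            "requests": {"cpu": "250m", "memory": "256Mi"},
                            "limits": {"memory": "512Mi"},
                        },
                    }],
                },
            },
        },
    }
    service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # Routes traffic to any pod carrying these labels.
            "selector": labels,
            "ports": [{"port": 80, "targetPort": port}],
        },
    }
    return [deployment, service]


if __name__ == "__main__":
    # A v1 List wraps both objects so one `kubectl apply -f -` can read them.
    items = deployment_and_service("web", "REGION-docker.pkg.dev/PROJECT/repo/web:1.0")
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Helm and Kustomize essentially template and patch documents like these, which is why they help as the number of services grows.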

The complexity of GKE deployments can be managed through tools like Helm charts, Kustomize, or GitOps solutions like Argo CD. These tools provide templating, configuration management, and automated deployment capabilities that streamline the deployment process whilst maintaining the flexibility that GKE provides.

Operational Overhead and Management

Operational requirements vary dramatically between the three services, influencing team structure, skill requirements, and ongoing maintenance efforts.

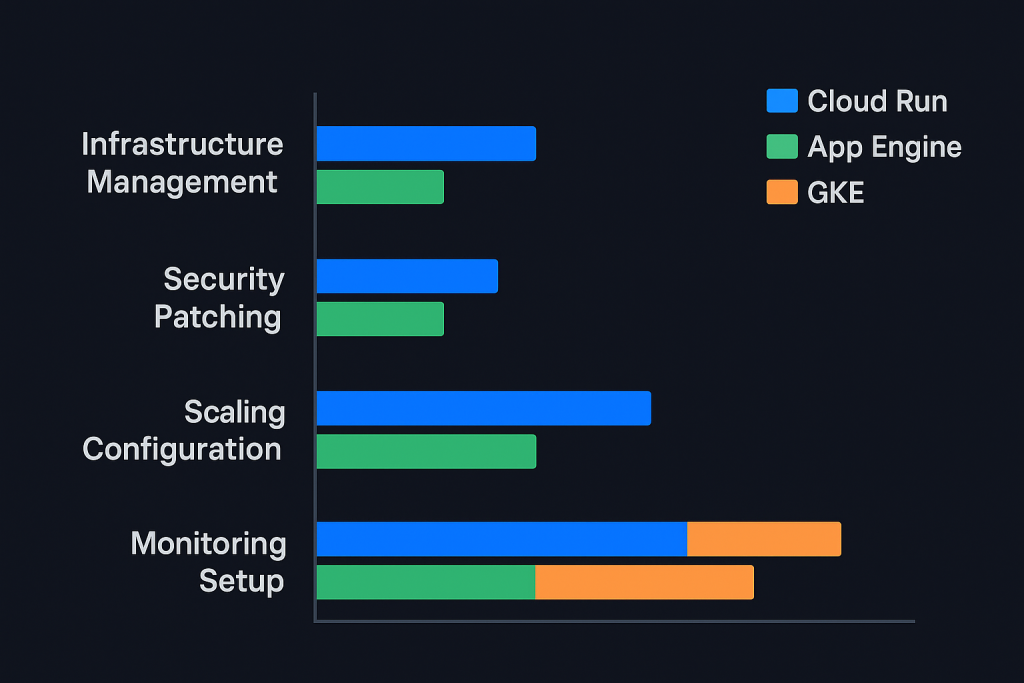

Cloud Run requires minimal operational overhead. The platform handles infrastructure management, security patching, and capacity planning automatically. Operations teams can focus on application monitoring, performance optimisation, and cost management rather than infrastructure concerns.

Monitoring and debugging are straightforward through Cloud Logging and Cloud Monitoring integration. The service provides detailed metrics on request volume, latency, error rates, and resource utilisation. Instances are ephemeral and managed entirely by the platform, and failed instances are replaced automatically. As a result, most infrastructure-level problems resolve without manual intervention.
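
Those logs become much more useful when they are structured. Cloud Logging treats a single line of JSON written to standard output as a structured entry and recognises special fields such as `severity`, `message`, and `logging.googleapis.com/trace`, which links the entry to a request trace. The minimal sketch below assumes the trace ID is taken from the `X-Cloud-Trace-Context` request header and that the project ID is known. The helper name is hypothetical, and any extra keyword arguments simply become additional JSON fields.

```python
import json
import sys
from typing import Any, Dict, Optional, TextIO


def log_structured(message: str, severity: str = "INFO",
                   trace_header: Optional[str] = None,
                   project_id: Optional[str] = None,
                   stream: Optional[TextIO] = None,
                   **fields: Any) -> Dict[str, Any]:
    """Write one JSON log line in the shape Cloud Logging parses as structured."""
    entry: Dict[str, Any] = {"severity": severity, "message": message, **fields}
    if trace_header and project_id:
        # X-Cloud-Trace-Context looks like TRACE_ID/SPAN_ID;o=OPTIONS.
        trace_id = trace_header.split("/")[0]
        entry["logging.googleapis.com/trace"] = f"projects/{project_id}/traces/{trace_id}"
    out = sys.stdout if stream is None else stream
    # Keep each entry on a single line so it is parsed as one JSON record.
    out.write(json.dumps(entry) + "\n")
    out.flush()
    return entry
```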

App Engine similarly abstracts away infrastructure management but adds operational capabilities of its own. It integrates with Cloud Logging, Cloud Monitoring, Error Reporting, and Cloud Trace. Its version management enables sophisticated deployment strategies and quick rollbacks.

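The Standard Environment’s operational model is particularly hands-off, with automatic security patching, runtime updates, and capacity management. The Flexible Environment requires more operational attention. It runs on Compute Engine virtual machines that Google restarts weekly to apply operating system and security updates, so applications must tolerate instance restarts. Teams also remain responsible for keeping application dependencies current.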

GKE operational overhead is significantly higher, requiring teams to understand Kubernetes cluster management, networking configuration, and security policies. While GKE automates many cluster operations through features like auto-upgrade and auto-repair, teams must still manage application configurations, resource allocation, and cluster-level policies.

However, this operational complexity brings corresponding benefits in terms of observability and control. Kubernetes provides extensive APIs for monitoring, logging, and metrics collection. Tools like Prometheus, Grafana, and Jaeger integrate seamlessly with GKE to provide comprehensive observability solutions.

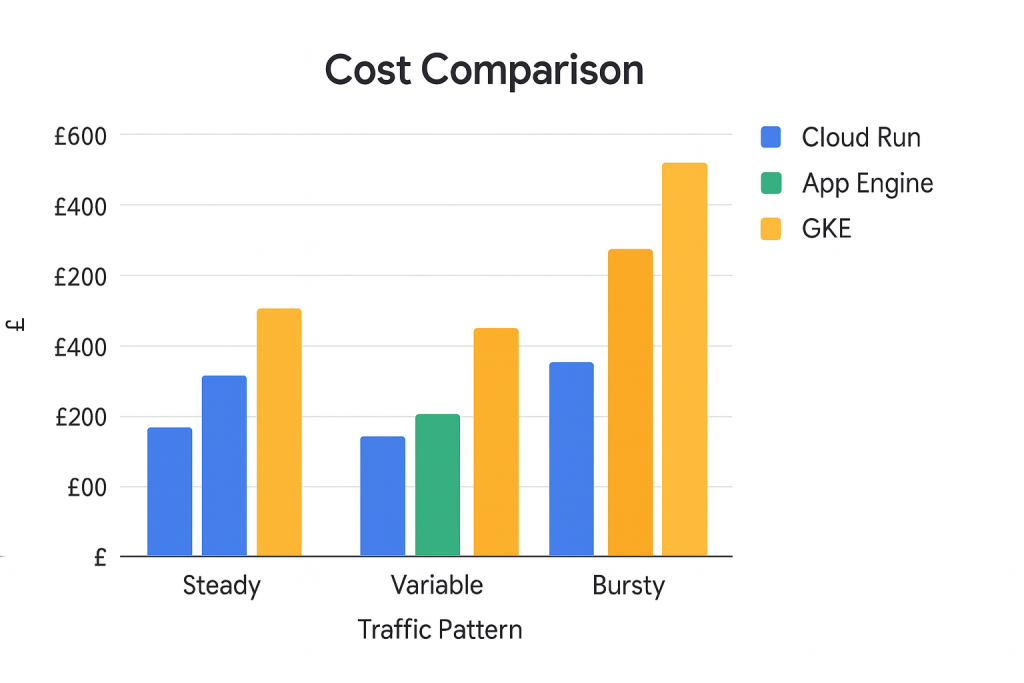

Cost Considerations and Optimisation

Understanding the cost implications of each service is crucial for making informed decisions, as pricing models and optimisation strategies differ significantly.

Cloud Run Pricing Model

Cloud Run’s pricing model is usage-based, charging for CPU and memory allocation during request processing and for the number of requests served. The scale-to-zero capability means applications incur no compute costs during idle periods, making Cloud Run exceptionally cost-effective for variable workloads.

The pricing structure includes CPU, memory, and request charges, with additional costs for networking and storage. CPU costs are calculated based on allocated vCPUs multiplied by execution time, whilst memory costs are based on allocated memory during the same period. Request charges are relatively minimal but can accumulate for high-volume applications.
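
The arithmetic behind request-based billing can be sketched as follows. The unit prices are deliberately left as parameters because they vary by region and change over time. Any figures plugged into them are placeholders, not Google’s published rates. The sketch also ignores the free tier, networking charges, and the rounding that real billing applies. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UnitPrices:
    """Placeholder unit prices; look up current values for your region."""
    per_vcpu_second: float
    per_gib_second: float
    per_million_requests: float


def monthly_cost(billable_instance_seconds: float, vcpus: float,
                 memory_gib: float, requests: int, prices: UnitPrices) -> float:
    """CPU + memory + request charges under request-based billing (simplified)."""
    # CPU and memory are charged for allocated capacity over billable time.
    cpu = billable_instance_seconds * vcpus * prices.per_vcpu_second
    memory = billable_instance_seconds * memory_gib * prices.per_gib_second
    # The request charge depends only on request volume.
    request_charge = requests / 1_000_000 * prices.per_million_requests
    return cpu + memory + request_charge
```

CPU and memory charges scale with billable instance time. Anything that shortens that time, such as faster startup, lower latency, or more requests sharing an instance, therefore reduces the first two terms. The request term depends only on volume.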

Cost optimisation strategies for Cloud Run focus on right-sizing resource allocations and optimising application startup times. In the default configuration, instances are billed only while they start up or handle requests, so faster startup and response times directly reduce costs. Implementing efficient caching strategies and optimising container images can also reduce overall expenses significantly. For teams just starting with GCP, Cloud Run’s monthly free tier includes an allowance of CPU time, memory, and requests that can absorb the cost of small experimental services whilst the team learns the platform.

App Engine Cost Structure

App Engine’s pricing varies between its environments. The Standard Environment bills for instance hours, at a rate set by the instance class (F classes for automatic scaling, B classes for basic and manual scaling). The Flexible Environment charges for the underlying virtual machine resources (vCPU, memory, and persistent disk), similar to Compute Engine.

The Standard Environment’s automatic scaling can lead to cost savings for applications with variable traffic patterns, as instances automatically shut down during low traffic periods. However, applications requiring always-on instances may find the costs higher than expected.

Services such as Firestore in Datastore mode, Memcache, and Task Queues (or Cloud Tasks for newer applications) have separate pricing structures that should be factored into total cost calculations. These services can offer cost advantages compared to managing equivalent functionality on other platforms.

GKE Economic Considerations

GKE pricing includes cluster management fees and the underlying Compute Engine resources. The cluster management fee is relatively small compared to node costs, but the flexibility to choose machine types and implement sophisticated scaling strategies can lead to significant cost optimisations.

Effective GKE cost management involves right-sizing node pools, enabling cluster autoscaling, using Spot VMs (the successor to preemptible VMs) for fault-tolerant workloads, and tuning resource requests and limits. The platform’s flexibility enables sophisticated cost optimisation strategies but requires ongoing monitoring and adjustment.
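
Right-sizing node pools starts from the pods’ resource requests, since those are what the scheduler packs onto nodes. A lower bound on the node count comes from dividing the total requested CPU and memory by each node’s allocatable capacity and taking the larger result. The machine figures used with the sketch below are hypothetical. Real packing needs more nodes when pods do not divide evenly, when DaemonSets consume capacity, or when spreading constraints apply.

```python
import math
from typing import Iterable, Tuple


def min_nodes(pod_requests: Iterable[Tuple[float, float]],
              node_allocatable_cpu: float,
              node_allocatable_mem_gib: float) -> int:
    """Lower bound on nodes needed for pods' (cpu_cores, mem_gib) requests."""
    total_cpu = 0.0
    total_mem = 0.0
    for cpu, mem in pod_requests:
        if cpu > node_allocatable_cpu or mem > node_allocatable_mem_gib:
            raise ValueError("a single pod requests more than one node can offer")
        total_cpu += cpu
        total_mem += mem
    by_cpu = math.ceil(total_cpu / node_allocatable_cpu)
    by_mem = math.ceil(total_mem / node_allocatable_mem_gib)
    # Whichever resource is scarcer determines the bound.
    return max(by_cpu, by_mem)
```

Comparing this bound with the node count a cluster actually runs is a quick way to spot over-provisioned node pools or requests that are set far above real usage.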

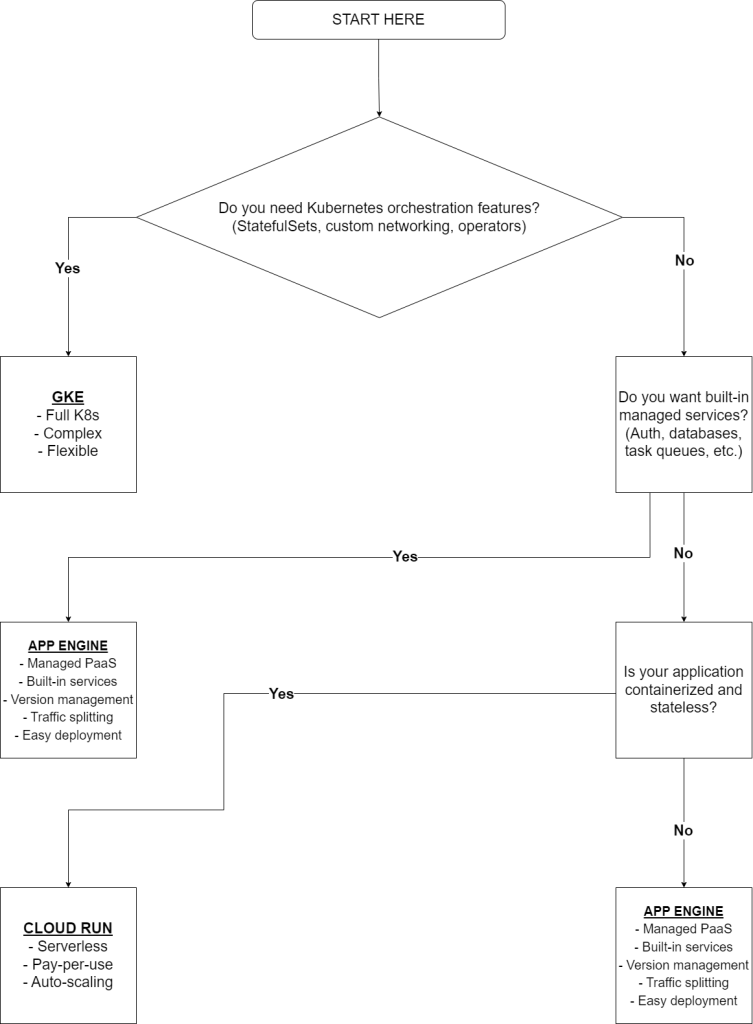

Decision Framework and Use Case Analysis

Selecting the appropriate service requires evaluating multiple factors including application characteristics, team capabilities, operational requirements, and business constraints.

When to Choose Cloud Run

Cloud Run excels for stateless, HTTP-driven applications that benefit from automatic scaling and pay-per-use pricing. It’s particularly well-suited for:

API Services and Microservices: RESTful APIs, GraphQL services, and microservices that handle discrete requests benefit from Cloud Run’s automatic scaling and cost-effective pricing model. The service’s ability to scale to zero makes it ideal for services with varying load patterns.

Event-Driven Applications: Applications triggered by Cloud Storage events, Pub/Sub messages, or HTTP webhooks can leverage Cloud Run’s rapid scaling capabilities. The integration with Eventarc enables sophisticated event-driven architectures without infrastructure management overhead.

Batch Processing Jobs: Short to medium-duration batch jobs that process data in response to triggers can benefit from Cloud Run’s execution model. The 60-minute request timeout accommodates many batch processing scenarios, and automatic scaling ensures efficient resource utilisation. Cloud Run jobs, which run containers to completion without serving HTTP requests, allow longer-running tasks.

Development and Testing Environments: The rapid deployment capabilities and scale-to-zero billing make Cloud Run excellent for development and testing environments where resources are used intermittently. Combined with GCP’s generous free tier offerings, this makes Cloud Run particularly attractive for experimentation and learning.
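
For the batch scenario above, Cloud Run jobs can fan work out into parallel tasks. Each task learns its position from the `CLOUD_RUN_TASK_INDEX` and `CLOUD_RUN_TASK_COUNT` environment variables. The sketch below uses those variables to split a list of inputs round-robin across tasks. The bucket paths and function name are placeholders.

```python
import os
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def shard_for_task(items: Sequence[T], task_index: int, task_count: int) -> List[T]:
    """Return the items this task should process (round-robin sharding)."""
    if not 0 <= task_index < task_count:
        raise ValueError("task_index must be in [0, task_count)")
    return [item for i, item in enumerate(items) if i % task_count == task_index]


if __name__ == "__main__":
    # Cloud Run jobs set these for every task; the defaults allow local runs.
    index = int(os.environ.get("CLOUD_RUN_TASK_INDEX", "0"))
    count = int(os.environ.get("CLOUD_RUN_TASK_COUNT", "1"))
    files = [f"gs://example-bucket/part-{n:04d}.csv" for n in range(10)]  # hypothetical inputs
    for path in shard_for_task(files, index, count):
        print("processing", path)
```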

Cloud Run may not be suitable for long-running background processes, connections that must stay open longer than the request timeout, or complex networking configurations. Applications with strict latency requirements might also face challenges due to cold start times, although keeping a minimum number of instances warm mitigates this at additional cost.

When to Choose App Engine

App Engine is optimal for web applications that can benefit from its comprehensive managed services ecosystem and simplified deployment model.

Traditional Web Applications: Multi-page web applications with user authentication, session management, and database integration benefit from App Engine’s built-in services. The platform’s traffic splitting and version management capabilities facilitate A/B testing and gradual feature rollouts.

Content Management Systems: Applications requiring user authentication, content storage, and dynamic rendering can leverage App Engine’s integrated services for rapid development and deployment.

API Backends with Rich Functionality: APIs that require user authentication and integration with other Google services can benefit from App Engine’s built-in capabilities, such as Identity-Aware Proxy support, rather than implementing these features independently.

Applications Requiring Rapid Prototyping: The comprehensive service ecosystem and simplified deployment model make App Engine excellent for rapidly prototyping and validating application concepts.

App Engine may not suit applications requiring runtime modifications, complex networking configurations, or system-level dependencies (such as custom native libraries) that the Standard Environment’s runtimes do not allow.

When to Choose GKE

GKE provides the flexibility and power needed for complex, enterprise-grade applications that require sophisticated orchestration capabilities.

Multi-Tier Applications: Complex applications with multiple interconnected services, databases, and caching layers benefit from Kubernetes’ orchestration capabilities and networking flexibility.

Stateful Applications: Applications requiring persistent storage, database clustering, or stateful services can leverage Kubernetes’ StatefulSets and persistent volume capabilities.

Batch and ML Workloads: Large-scale batch processing, machine learning training jobs, and data pipeline applications benefit from GKE’s job scheduling and resource management capabilities.

Legacy Application Modernisation: Existing applications being containerised and modernised often require the flexibility that GKE provides for gradual migration and integration with existing systems.

Multi-Cloud and Hybrid Deployments: Applications requiring deployment across multiple cloud providers or integration with on-premises infrastructure benefit from Kubernetes’ standardised APIs and tooling.

GKE requires significant Kubernetes expertise and operational overhead, making it less suitable for simple applications or teams without container orchestration experience.

Migration Considerations

Teams often need to migrate between services as application requirements evolve. Understanding migration paths and strategies helps inform initial architecture decisions and future planning.

Migrating to Cloud Run

Applications currently running on traditional servers or other container platforms can often migrate to Cloud Run with minimal changes, provided they meet the service’s constraints. The primary requirements are containerisation and stateless operation.

Key migration considerations include adapting to the request-response model, shutting down gracefully when the platform signals termination, and optimising for cold start performance. Applications that hold long-lived connections or perform background work outside request handling may need architectural modifications.
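
Cloud Run sends a container SIGTERM before stopping it and follows up with SIGKILL about 10 seconds later, so graceful shutdown means reacting to SIGTERM promptly. Below is a minimal standard-library sketch for an HTTP server. The handler class and the port fallback are illustrative. The detail worth copying is that `server.shutdown()` blocks until `serve_forever()` returns, so it must be called from a different thread. Otherwise it waits forever.

```python
import os
import signal
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class OkHandler(BaseHTTPRequestHandler):
    """Hypothetical handler that answers every GET with 204 No Content."""

    def do_GET(self) -> None:
        self.send_response(204)
        self.end_headers()

    def log_message(self, format: str, *args) -> None:
        pass  # keep the example's output quiet


def make_sigterm_handler(server: ThreadingHTTPServer):
    """Build a SIGTERM handler that stops the server without deadlocking."""

    def handle_sigterm(signum, frame) -> None:
        # The handler runs on the main thread, which is busy in serve_forever(),
        # so ask a helper thread to call shutdown() instead of calling it here.
        threading.Thread(target=server.shutdown, daemon=True).start()

    return handle_sigterm


if __name__ == "__main__":
    srv = ThreadingHTTPServer(("0.0.0.0", int(os.environ.get("PORT", "8080"))), OkHandler)
    signal.signal(signal.SIGTERM, make_sigterm_handler(srv))
    srv.serve_forever()  # returns once shutdown() has been requested
    srv.server_close()   # stop listening; then flush buffers, close pools, etc.
```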

Migrating from Cloud Run

Applications outgrowing Cloud Run’s constraints might migrate to GKE for increased flexibility or App Engine for additional managed services. Cloud Run’s containerised approach facilitates migration to GKE, as existing containers can often run on Kubernetes with appropriate manifest configurations.

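Migration to the App Engine Standard Environment typically requires more significant changes, as applications must adapt to its supported runtimes and service integration model. The Flexible Environment, which accepts custom containers, is a closer fit for applications coming from Cloud Run.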

App Engine Evolution Paths

App Engine applications can migrate to Cloud Run for improved scaling characteristics and container flexibility. This migration typically involves containerising the application and adapting to Cloud Run’s execution model.

Migration to GKE provides access to the full Kubernetes ecosystem but significantly increases operational overhead. This path is typically chosen when applications require capabilities that App Engine’s managed environment cannot provide.

GKE Optimisation and Alternatives

GKE applications might migrate to Cloud Run when simplification is desired and application requirements align with Cloud Run’s capabilities. This migration reduces operational overhead whilst maintaining containerisation benefits.

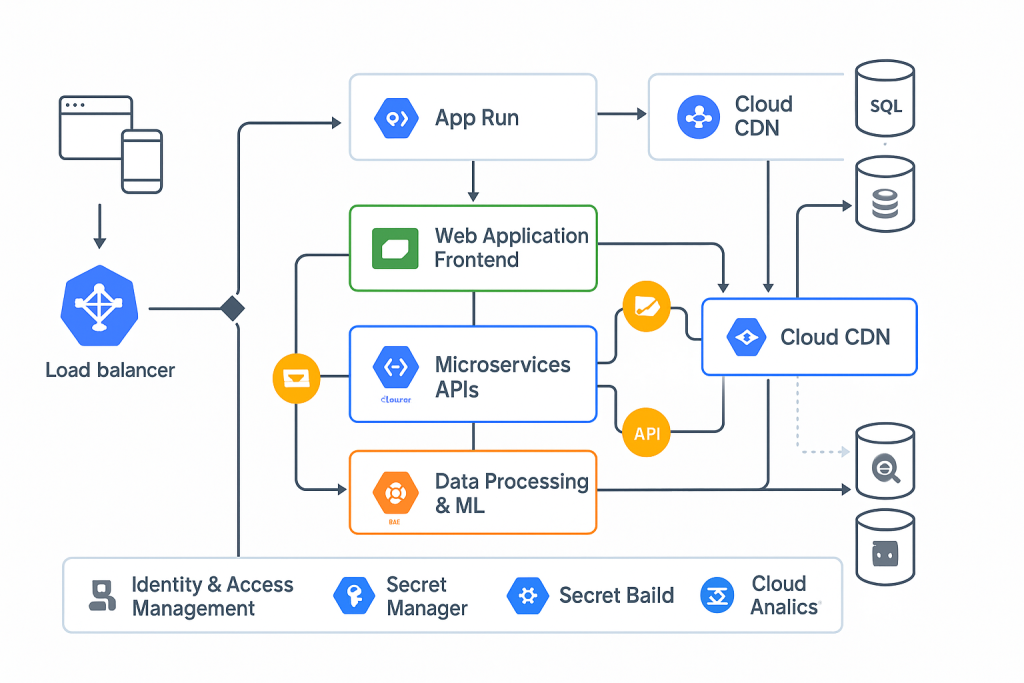

Advanced Scenarios and Hybrid Approaches

Real-world applications often benefit from combining multiple services rather than committing to a single platform. Understanding how these services complement each other enables sophisticated architectural patterns.

Service Composition Patterns

Many applications use Cloud Run for stateless API services whilst leveraging GKE for stateful components like databases or message queues. This hybrid approach optimises costs and operational overhead whilst maintaining the flexibility needed for complex functionality.

App Engine can serve as the primary web application platform whilst using Cloud Run for specific microservices that require different scaling characteristics or resource requirements. This combination leverages App Engine’s comprehensive services whilst providing flexibility for specialised components.

Event-Driven Architectures

Cloud Run’s integration with Eventarc and Pub/Sub enables sophisticated event-driven architectures where different services handle specific types of events. GKE can process long-running workflows triggered by Cloud Run services, creating efficient resource utilisation patterns.
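
With a Pub/Sub push subscription, each message reaches the Cloud Run service as an HTTP POST. Its JSON body wraps the payload in a `message` object containing base64-encoded `data`, optional `attributes`, and a `messageId`. Below is a minimal standard-library sketch for unpacking that envelope. The function name is hypothetical, and what the service does with the result is left open.

```python
import base64
import json
from typing import Any, Dict, Tuple


def parse_push_envelope(body: bytes) -> Tuple[str, Dict[str, str], str]:
    """Decode a Pub/Sub push request body into (data, attributes, message_id).

    Raises ValueError for malformed envelopes. The caller chooses the HTTP
    status: a 2xx acknowledges the message, anything else triggers redelivery.
    """
    try:
        envelope: Dict[str, Any] = json.loads(body)
        message = envelope["message"]
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        raise ValueError("not a Pub/Sub push envelope") from exc
    # `data` is base64-encoded and may be absent if a message carries only attributes.
    data = base64.b64decode(message.get("data", "")).decode("utf-8")
    attributes = message.get("attributes", {})
    return data, attributes, message.get("messageId", "")
```

Because any non-2xx response makes Pub/Sub redeliver the message, handlers should be idempotent, for instance by recording processed message IDs.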

Data Processing Pipelines

Complex data processing often combines multiple services: Cloud Run for API endpoints that trigger processing, GKE for intensive computation tasks, and App Engine for user interfaces that visualise results. This approach optimises each component for its specific role whilst maintaining system cohesion.

Monitoring and Observability

Effective monitoring strategies vary across the three platforms, reflecting their different operational models and capabilities.

Cloud Run Observability

Cloud Run provides comprehensive monitoring through Cloud Logging and Cloud Monitoring integration. Key metrics include request volume, latency distributions, error rates, instance counts, and container startup latency. These metrics appear on each service’s page in the Cloud Console without extra setup, and alerting policies for them can be defined in Cloud Monitoring.

Custom metrics can be exported through OpenTelemetry or Cloud Monitoring APIs, enabling sophisticated observability solutions. The serverless model simplifies monitoring by eliminating infrastructure-level concerns whilst focusing on application performance metrics.

App Engine Monitoring

App Engine includes built-in monitoring capabilities specifically designed for web applications. The platform provides detailed request tracing, error reporting, and performance profiling tools that integrate seamlessly with the development workflow.

The service’s version management capabilities enable sophisticated monitoring strategies where different versions can be monitored independently, facilitating A/B testing and gradual rollout monitoring.

GKE Observability Solutions

GKE’s monitoring capabilities are the most comprehensive but also the most complex. The platform integrates with the entire Cloud Operations suite whilst supporting third-party monitoring solutions like Prometheus and Grafana.

Kubernetes-native monitoring tools provide detailed insights into cluster health, resource utilisation, and application performance. The flexibility to implement custom monitoring solutions enables sophisticated observability strategies tailored to specific application requirements.

Security and Compliance

Security considerations vary significantly across the three services, reflecting their different abstraction levels and operational models.

Cloud Run Security

Cloud Run provides security through multiple layers: automatic HTTPS encryption, IAM integration for service access, and container-level isolation. The serverless model eliminates many traditional security concerns related to server patching and infrastructure management.

Applications must implement their own end-user authentication and authorisation logic, as Cloud Run focuses on infrastructure security rather than application-level features. At the service level, Cloud IAM controls who may invoke a service (through the Cloud Run Invoker role). This enables sophisticated access control policies whilst maintaining the simplicity of the serverless model.
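
For service-to-service calls, the calling service obtains a Google-signed ID token whose audience is the receiving service’s URL and sends it as a bearer token. Cloud Run then checks that the caller’s identity holds the invoker role. The minimal standard-library sketch below assumes it runs on Cloud Run or another Google Cloud environment where the metadata server is reachable. The service URL and helper names are placeholders, and production code would more commonly use Google’s auth client libraries.

```python
import urllib.parse
import urllib.request

METADATA_IDENTITY_URL = ("http://metadata.google.internal/computeMetadata/v1/"
                         "instance/service-accounts/default/identity")


def id_token_request(audience: str) -> urllib.request.Request:
    """Build the metadata-server request for an ID token scoped to `audience`."""
    query = urllib.parse.urlencode({"audience": audience})
    # The Metadata-Flavor header is mandatory; requests without it are rejected.
    return urllib.request.Request(f"{METADATA_IDENTITY_URL}?{query}",
                                  headers={"Metadata-Flavor": "Google"})


def call_private_service(service_url: str, path: str = "/") -> bytes:
    """Call another Cloud Run service that requires IAM authentication.

    Only works inside Google Cloud, where the metadata server is reachable.
    """
    with urllib.request.urlopen(id_token_request(service_url), timeout=5) as resp:
        token = resp.read().decode("ascii")
    req = urllib.request.Request(service_url.rstrip("/") + path,
                                 headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.read()
```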

App Engine Security Features

App Engine includes security features designed for web applications. These include built-in user authentication options (such as Identity-Aware Proxy), an App Engine firewall for restricting inbound traffic, and automatic security updates. The Standard Environment provides additional security through its sandboxed execution model.

The platform’s integrated security features reduce the implementation burden for common security requirements whilst maintaining flexibility for custom security logic where needed.

GKE Security Landscape

GKE security operates at multiple levels: cluster security, network policies, pod security, and workload identity. The platform provides tools for implementing sophisticated security policies but requires teams to understand and configure these features appropriately.
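
Network policies are a good example of GKE security that teams must configure themselves. A common starting point is a default-deny ingress policy for a namespace, after which allowed traffic is opened explicitly. The sketch below builds both policies as Python dictionaries, and the JSON output works with `kubectl apply -f`. The namespace, labels, and port are placeholders. The policies only take effect on clusters with network policy enforcement enabled, for example GKE Dataplane V2.

```python
import json
from typing import Any, Dict


def default_deny_ingress(namespace: str) -> Dict[str, Any]:
    """Deny all inbound traffic to every pod in `namespace`."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        "spec": {
            "podSelector": {},           # empty selector = all pods in the namespace
            "policyTypes": ["Ingress"],  # no ingress rules listed, so nothing is allowed in
        },
    }


def allow_from(namespace: str, target_app: str, source_app: str, port: int) -> Dict[str, Any]:
    """Re-open one path: pods labelled app=source_app may reach app=target_app on `port`."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{source_app}-to-{target_app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": target_app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                "from": [{"podSelector": {"matchLabels": {"app": source_app}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


if __name__ == "__main__":
    items = [default_deny_ingress("shop"), allow_from("shop", "api", "frontend", 8080)]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```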

Kubernetes’ extensive security model enables fine-grained control over application access, network communication, and resource allocation. However, this flexibility requires careful configuration and ongoing management to maintain security posture.

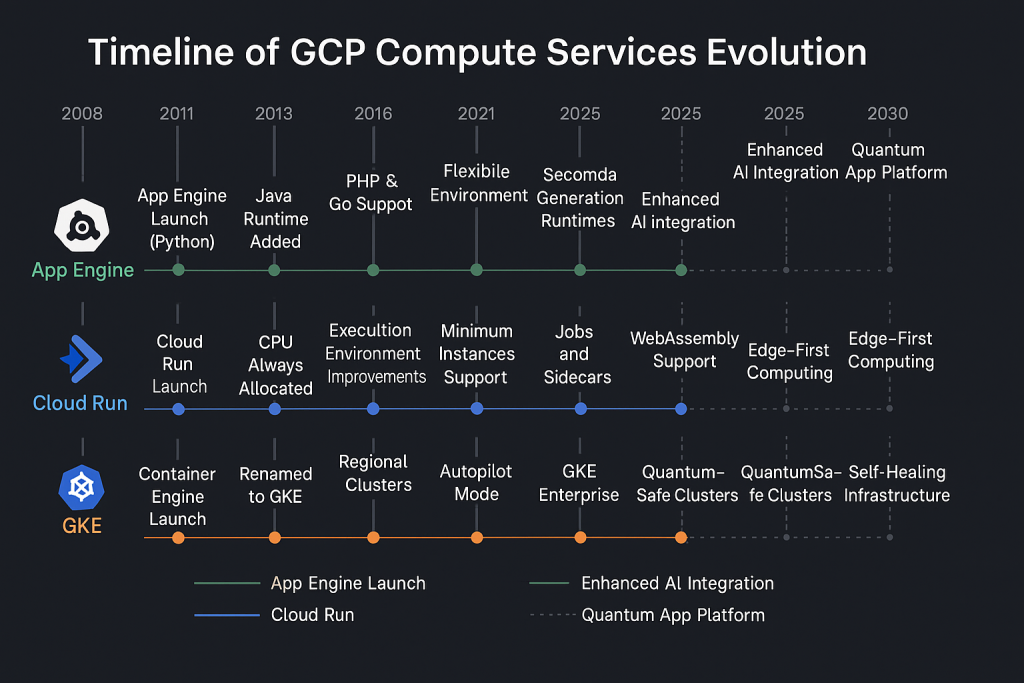

Future-Proofing and Technology Evolution

Understanding the evolution trajectory of each service helps inform long-term architectural decisions and technology investments.

Cloud Run Innovation

Cloud Run continues evolving towards supporting more use cases whilst maintaining its simplicity advantage. Recent developments include improved cold start performance, expanded runtime options, and enhanced integration with other Google services.

The service’s roadmap focuses on expanding capabilities without compromising the serverless developer experience, making it increasingly viable for a broader range of applications.

App Engine Modernisation

App Engine evolution focuses on improving performance and expanding runtime support whilst maintaining its comprehensive managed services advantage. The platform continues integrating with newer Google services whilst preserving its simplified deployment model.

Future developments aim to bridge the gap between App Engine’s simplicity and the flexibility offered by container-based platforms.

GKE Enterprise Features

GKE development emphasises enterprise features like multi-cluster management, advanced security policies, and improved operational tooling. The platform continues adopting upstream Kubernetes innovations whilst providing Google-specific enhancements.

The focus on enterprise capabilities makes GKE increasingly suitable for large-scale, mission-critical applications whilst maintaining compatibility with the broader Kubernetes ecosystem.

Conclusion and Practical Recommendations

The choice between Cloud Run, App Engine, and GKE isn’t merely a technical decision—it’s a strategic choice that impacts development velocity, operational overhead, cost optimisation, and long-term flexibility. Each service excels in specific scenarios whilst presenting trade-offs that must be carefully evaluated against your requirements.

Start with simplicity: For new projects or teams new to cloud-native development, beginning with the simplest service that meets your requirements often proves most effective. Cloud Run’s minimal operational overhead makes it excellent for learning cloud-native patterns, whilst App Engine provides comprehensive services for traditional web applications.

Plan for evolution: Architecture decisions should accommodate future growth and changing requirements. Understanding migration paths between services enables teams to start simple and evolve towards more sophisticated platforms as needs develop.

Consider team capabilities: The operational overhead and required expertise vary significantly between services. Aligning service choice with team capabilities ensures sustainable long-term success rather than struggling with platforms that exceed current expertise levels.

Optimise for total cost of ownership: Direct service costs represent only part of the total economic impact. Consider development velocity, operational overhead, and scaling efficiency when evaluating economic implications. The Google Cloud Pricing Calculator can help estimate costs across different scenarios.

The landscape of cloud computing continues evolving rapidly, with each service incorporating new capabilities and improving existing functionality. However, the fundamental architectural patterns and decision criteria outlined here provide a stable foundation for making informed choices in this dynamic environment.

By understanding the strengths, limitations, and appropriate use cases for each service, teams can make confident decisions that align with both current requirements and future aspirations. The goal isn’t to choose the “best” service in abstract terms, but to select the most appropriate platform for your specific context and requirements.

The future belongs to teams that can navigate this complexity with confidence, leveraging the right tools for each job whilst maintaining the flexibility to evolve as requirements change. Whether that path leads through Cloud Run’s elegant simplicity, App Engine’s comprehensive services, or GKE’s powerful flexibility depends entirely on your unique circumstances and objectives.

Further Reading: