The machine learning landscape has evolved dramatically since AWS launched SageMaker in 2017 and helped bring cloud-based ML development into the mainstream. Fast-forward to 2025, and these platforms now handle trillion-parameter models, generative AI workloads, and mission-critical enterprise applications. If you’re choosing between Google Cloud’s Vertex AI, AWS SageMaker, and Azure Machine Learning, you’re not just picking a tool; you’re making a strategic decision that will shape your organization’s AI capabilities for years to come.

Having tried all three platforms, I’ve learned that the “best” platform doesn’t exist. Instead, each excels in different scenarios, and the right choice depends heavily on your specific needs, existing infrastructure, and team composition.

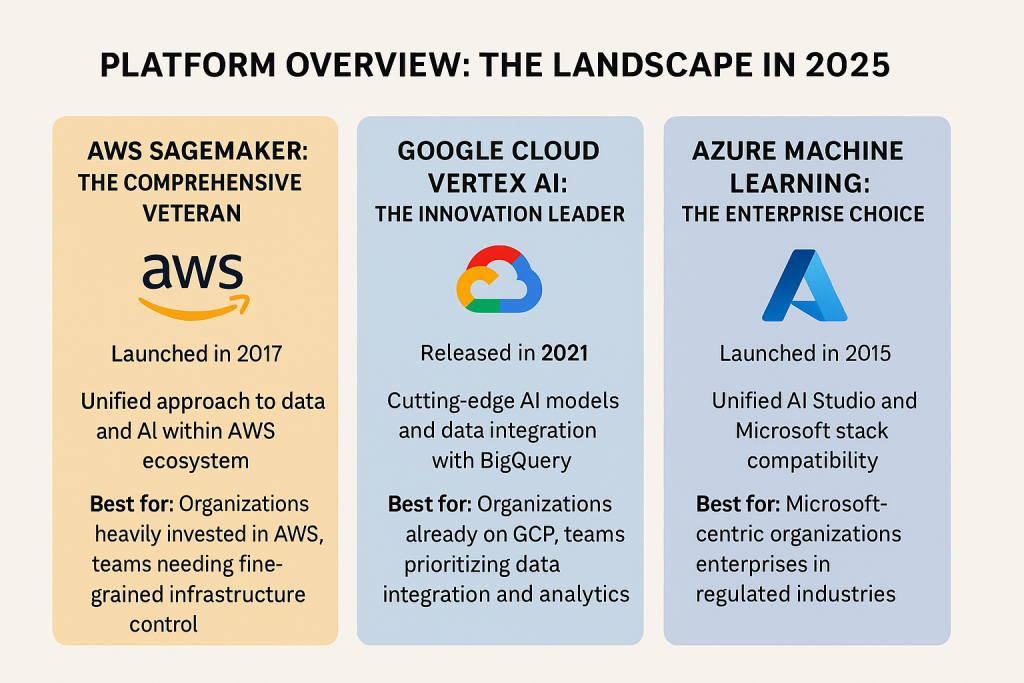

Platform Overview: The Landscape in 2025

AWS SageMaker: The Comprehensive Veteran

Launched in 2017, SageMaker is the most mature player in this space with over 380 features and capabilities. AWS has built an end-to-end ecosystem that covers every aspect of the ML lifecycle, from data preparation to model monitoring in production.

What sets SageMaker apart in 2025 is its unified approach to data and AI. The new SageMaker Unified Studio brings together data lakes, ML workflows, and generative AI into a single orchestration layer. This means you can query data from S3 and Redshift without ETL, train massive models with HyperPod (which now achieves 99.9% uptime during multi-week training runs), and deploy with specialized silicon like Inferentia3 chips that reduce LLM inference costs by 58%.

Best for: Organizations heavily invested in AWS, teams that need fine-grained infrastructure control, and enterprises building production ML systems at scale.

Google Cloud Vertex AI: The Innovation Leader

Released in 2021, Vertex AI is the newest but arguably most feature-rich platform in this comparison. Google consolidated its scattered AI services into one unified platform, and the result is impressive. Vertex AI provides access to Google’s cutting-edge models including Gemini 2.0, Imagen 4, and Veo 3 for video generation.

The platform’s standout feature is its Model Garden, which offers the widest variety of models in the industry: first-party models from Google, third-party models including Anthropic’s Claude family, and open-source models like Llama and Gemma. Vertex AI also provides unique access to Google’s Tensor Processing Units (TPUs); Google reports that TPU v5p trains large models 2.8x faster than the previous-generation TPU v4.

In 2025, Vertex AI’s integration with BigQuery is unmatched. Data teams can build models directly in SQL with BigQuery ML, register them in the Vertex AI Model Registry with one click, and deploy them as REST endpoints, cutting time-to-production from weeks to days.
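As a rough illustration of that SQL-first workflow, the sketch below builds a BigQuery ML `CREATE MODEL` statement that also asks BigQuery to register the model in the Vertex AI Model Registry. The `model_registry` and `vertex_ai_model_id` options reflect our reading of the BigQuery ML documentation and are worth checking against the current reference; the helper `build_create_model_sql` and the dataset, table, and column names are hypothetical.

```python
from typing import Optional


def build_create_model_sql(
    model: str,
    source_table: str,
    label: str,
    model_type: str = "logistic_reg",
    vertex_model_id: Optional[str] = None,
) -> str:
    """Build a BigQuery ML CREATE MODEL statement (hypothetical helper).

    Values are interpolated without escaping, so use trusted inputs only.
    """
    options = [
        f"model_type='{model_type}'",
        f"input_label_cols=['{label}']",
    ]
    if vertex_model_id is not None:
        # Ask BigQuery ML to register the trained model in the
        # Vertex AI Model Registry so it can later be deployed to an endpoint.
        options.append("model_registry='vertex_ai'")
        options.append(f"vertex_ai_model_id='{vertex_model_id}'")
    joined = ",\n  ".join(options)
    return (
        f"CREATE OR REPLACE MODEL `{model}`\n"
        f"OPTIONS(\n  {joined}\n)\n"
        f"AS SELECT * FROM `{source_table}`"
    )


# Hypothetical dataset, table, and label column.
sql = build_create_model_sql(
    model="my_dataset.churn_model",
    source_table="my_dataset.customer_features",
    label="churned",
    vertex_model_id="churn_model",
)
print(sql)
```

The resulting string could be submitted with the `google-cloud-bigquery` client (for example `bigquery.Client().query(sql).result()`); here it is only printed so the sketch runs without credentials.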

Best for: Organizations already on GCP, teams prioritizing data integration and analytics, and companies that want access to the latest AI models and research.

Azure Machine Learning: The Enterprise Choice

Azure ML has evolved into a comprehensive platform that particularly shines in enterprise environments. The 2025 flagship feature is Unified AI Studio, which merges generative AI workflows with traditional predictive modeling, a game-changer for organizations running mixed AI pipelines.

Azure’s strength lies in its tight integration with the Microsoft ecosystem. If your organization uses Microsoft Fabric, Power BI, or Azure Synapse, Azure ML provides seamless data flow and governance across the stack. The platform’s Confidential ML capabilities leverage Intel SGX v4 to encrypt model weights during training, addressing critical security requirements in regulated industries.

The visual Designer tool makes Azure ML particularly accessible to business analysts and less technical users, while still providing powerful capabilities for data scientists through code-first approaches with Python and R.

Best for: Microsoft-centric organizations, enterprises in regulated industries (healthcare, finance), and teams that need both no-code and code-first capabilities.

Deep Dive: Key Capabilities Compared

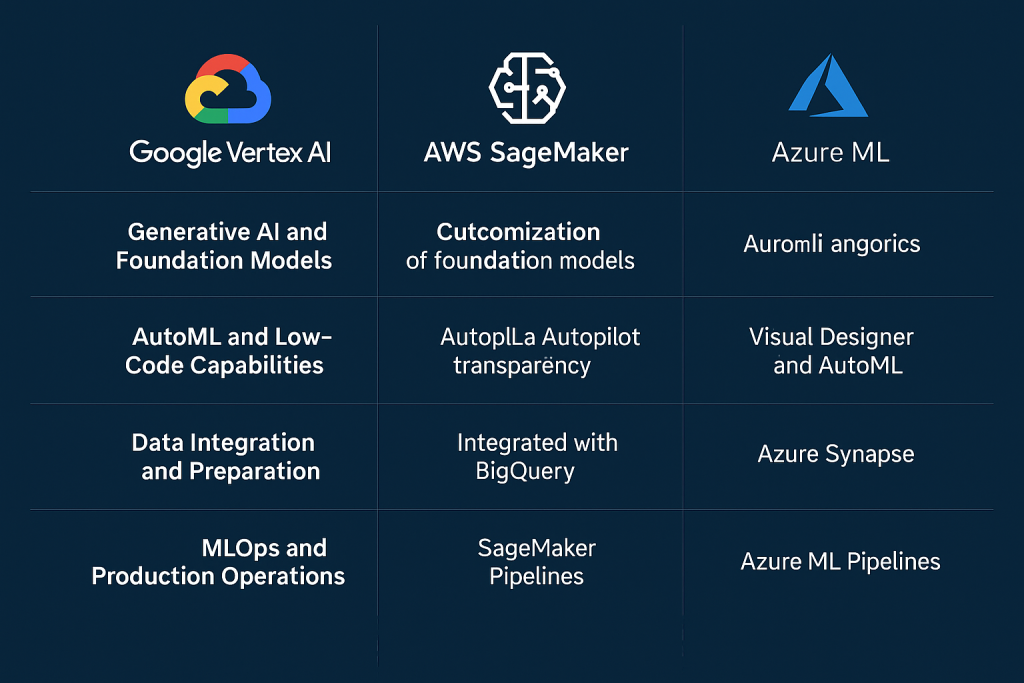

Generative AI and Foundation Models

Vertex AI leads in generative AI breadth. The Model Garden provides access to 11,000+ models, and Google’s own Gemini 2.0 models offer native multimodality, processing text, code, and 4K video frames in unified workflows. The Agent Builder makes it straightforward to create enterprise AI agents with grounding, orchestration, and customization capabilities.

SageMaker has made massive strides with Amazon Bedrock integration and the introduction of Amazon Nova models. The platform now supports extensive customization of foundation models through continued pre-training, supervised fine-tuning, and reinforcement learning. JumpStart provides access to popular models including OpenAI’s open-weight models (gpt-oss-120b and gpt-oss-20b).

Azure ML integrates deeply with Azure OpenAI Service and Azure AI Foundry, which now supports reinforcement fine-tuning (RFT) for improving reasoning and context-aware responses. The platform’s Model Router and Model Leaderboard simplify model selection by providing transparent benchmarks and performance comparisons across 11,000+ available models.

Winner: Vertex AI for variety and Google’s native models, but all three are competitive.

AutoML and Low-Code Capabilities

All three platforms offer robust AutoML, but with different philosophies:

- Vertex AI AutoML is praised for its intuitive interface and strong performance, particularly for tabular, image, text, and video data. It requires minimal coding and produces high-quality models quickly.

- SageMaker Autopilot automatically explores different algorithms and configurations, providing transparency into the process. It’s more technical than Vertex AI’s approach but gives experienced practitioners more control.

- Azure ML Designer offers the most visual, drag-and-drop experience. Combined with its AutoML capabilities, Azure makes ML accessible to business analysts while still supporting custom Python and R scripts for advanced users.
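Under the hood, tools like SageMaker Autopilot and Vertex AI AutoML automate a search over candidate algorithms and settings. The toy sketch below shows only that core idea under simplifying assumptions: a few hand-written candidates (a mean baseline, least-squares linear regression, and k-nearest neighbors with different k) are each trained and scored on a held-out validation split, and the lowest-error candidate is selected. It is not how any of the three services is implemented; real systems add feature engineering, hyperparameter tuning, and ensembling.

```python
import random
from statistics import mean


def fit_mean(xs, ys):
    """Baseline: always predict the training mean."""
    m = mean(ys)
    return lambda x: m


def fit_linear(xs, ys):
    """Ordinary least squares for y = a*x + b."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = my - a * mx
    return lambda x: a * x + b


def make_knn(k):
    """Return a fit function for k-nearest-neighbor regression."""
    def fit(xs, ys):
        pairs = list(zip(xs, ys))

        def predict(x):
            nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
            return mean(y for _, y in nearest)

        return predict
    return fit


def mse(model, xs, ys):
    return mean((model(x) - y) ** 2 for x, y in zip(xs, ys))


def select_model(candidates, train, val):
    """Train every candidate, score it on validation data, keep the best."""
    scores = {name: mse(fit(*train), *val) for name, fit in candidates.items()}
    best = min(scores, key=scores.get)
    return best, scores


# Synthetic data: y = 2x + 1 plus a little uniform noise.
rng = random.Random(0)
xs = [rng.uniform(0, 10) for _ in range(100)]
ys = [2 * x + 1 + rng.uniform(-0.5, 0.5) for x in xs]
train = (xs[:80], ys[:80])
val = (xs[80:], ys[80:])

candidates = {"mean_baseline": fit_mean, "linear": fit_linear}
candidates.update({f"knn_k{k}": make_knn(k) for k in (1, 3, 5)})

best_name, scores = select_model(candidates, train, val)
for name, score in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{name:14s} val MSE = {score:.3f}")
print("selected:", best_name)
```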

Winner: Azure ML for no-code accessibility, Vertex AI for AutoML quality.

Data Integration and Preparation

This is where the platforms diverge significantly based on their cloud ecosystems:

Vertex AI’s integration with BigQuery is unbeatable: you can prepare data, train models, and deploy them, all without moving data. Beyond BigQuery, the platform natively integrates with Dataproc and Dataflow for data processing, and the Vertex AI Feature Store keeps online and offline features synchronized.
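The idea behind keeping online and offline features synchronized can be sketched with a toy in-memory store. The `ToyFeatureStore` class below is hypothetical and is not the Vertex AI Feature Store API: every write goes through one path that appends to an offline history (used for point-in-time training lookups) and refreshes an online latest-value view (used at serving time), so both views are derived from the same data.

```python
from bisect import bisect_right
from collections import defaultdict


class ToyFeatureStore:
    """Hypothetical store: one write path feeds both offline and online views."""

    def __init__(self):
        self.offline = defaultdict(list)  # entity_id -> [(timestamp, features)] sorted by time
        self.online = {}                  # entity_id -> latest features

    def write(self, entity_id, timestamp, features):
        history = self.offline[entity_id]
        history.append((timestamp, features))
        history.sort(key=lambda row: row[0])  # tolerate late-arriving events
        # The online view is always derived from the offline history,
        # so the two cannot drift apart.
        self.online[entity_id] = history[-1][1]

    def get_online(self, entity_id):
        """Serving-time lookup: latest known features."""
        return self.online.get(entity_id)

    def get_as_of(self, entity_id, timestamp):
        """Training-time lookup: features as of `timestamp` (no future leakage)."""
        history = self.offline.get(entity_id, [])
        idx = bisect_right([t for t, _ in history], timestamp)
        return history[idx - 1][1] if idx else None


store = ToyFeatureStore()
store.write("user_1", 100, {"orders_30d": 2})
store.write("user_1", 200, {"orders_30d": 5})
store.write("user_1", 150, {"orders_30d": 3})  # arrives late, out of order

print(store.get_online("user_1"))      # {'orders_30d': 5}
print(store.get_as_of("user_1", 175))  # {'orders_30d': 3}
print(store.get_as_of("user_1", 50))   # None
```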

SageMaker’s Data Wrangler provides visual data preparation, and the platform integrates with S3, AWS Glue, EMR, and Redshift. The new SageMaker Lakehouse allows querying across S3 and Redshift without ETL. However, many AWS customers I’ve worked with supplement it with third-party tools like Databricks or Snowflake.

Azure ML integrates with Azure Data Lake Storage, Azure Data Factory, and Azure Synapse Analytics. The platform’s Dataset and Datastore concepts are straightforward, and the integration with Microsoft Fabric provides a unified data foundation for analytics and AI.

Winner: Vertex AI for out-of-the-box data platform capabilities.

MLOps and Production Operations

All three platforms have mature MLOps capabilities, but they approach it differently:

SageMaker Pipelines offers comprehensive workflow orchestration with strong model registry, monitoring, and governance features. SageMaker Model Monitor continuously tracks model performance in production, and SageMaker Clarify provides bias detection and explainability. SageMaker’s serverless inference option abstracts infrastructure management entirely.
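To make "bias detection" concrete, the sketch below computes two simple pre-training bias metrics that SageMaker Clarify documents: class imbalance, CI = (n_a - n_d) / (n_a + n_d), and difference in proportions of labels, DPL = q_a - q_d, where a and d denote the advantaged and disadvantaged facets and q is the share of positive labels in each. It is a plain-Python illustration of the formulas on made-up data, not a call into Clarify itself.

```python
def class_imbalance(facets, disadvantaged):
    """CI = (n_a - n_d) / (n_a + n_d); ranges from -1 to 1."""
    n_d = sum(1 for f in facets if f == disadvantaged)
    n_a = len(facets) - n_d
    return (n_a - n_d) / (n_a + n_d)


def diff_in_proportions_of_labels(facets, labels, disadvantaged, positive=1):
    """DPL = q_a - q_d, where q is the share of positive labels in each facet."""
    adv = [y for f, y in zip(facets, labels) if f != disadvantaged]
    dis = [y for f, y in zip(facets, labels) if f == disadvantaged]
    q_a = sum(1 for y in adv if y == positive) / len(adv)
    q_d = sum(1 for y in dis if y == positive) / len(dis)
    return q_a - q_d


# Made-up example: facet "B" is the group we check for disadvantage.
facets = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0] + [1, 0, 0, 0]

ci = class_imbalance(facets, disadvantaged="B")
dpl = diff_in_proportions_of_labels(facets, labels, disadvantaged="B")
print(f"CI  = {ci:.3f}")   # CI  = 0.200
print(f"DPL = {dpl:.3f}")  # DPL = 0.417
```

A positive DPL here means facet "A" receives positive labels more often than facet "B" in the training data, which is the kind of signal worth investigating before training.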

Vertex AI Pipelines, which runs pipelines defined with the Kubeflow Pipelines (KFP) SDK or TensorFlow Extended (TFX), provides end-to-end workflow management with built-in model monitoring for data drift and training-serving skew detection. TFX support makes it a natural fit for TensorFlow users. However, its monitoring capabilities are less mature than SageMaker’s for models deployed outside the ecosystem.
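Drift and skew detection boil down to comparing a feature’s distribution at training time with its distribution in production. Vertex AI’s model monitoring documentation has described using L-infinity distance for categorical features and Jensen-Shannon divergence for numerical features; the sketch below implements those two measures in plain Python on made-up data. The bin edges, the `THRESHOLD` value of 0.1, and the sample values are hypothetical, and numerical results depend on how features are bucketed.

```python
import math
from bisect import bisect_right
from collections import Counter


def categorical_distribution(values, categories):
    counts = Counter(values)
    return [counts[c] / len(values) for c in categories]


def numeric_distribution(values, edges):
    """Fraction of values per bin; out-of-range values are clamped into edge bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect_right(edges, v) - 1
        i = min(max(i, 0), len(counts) - 1)
        counts[i] += 1
    return [c / len(values) for c in counts]


def l_infinity(p, q):
    """Largest absolute difference between matching probabilities."""
    return max(abs(a - b) for a, b in zip(p, q))


def js_divergence(p, q):
    """Jensen-Shannon divergence with log base 2, so the result lies in [0, 1]."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        # Terms with a == 0 contribute nothing; y >= a / 2 > 0 otherwise.
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


THRESHOLD = 0.1  # hypothetical alert threshold

cats = ["US", "EU", "APAC"]
train_region = ["US"] * 60 + ["EU"] * 30 + ["APAC"] * 10
serve_region = ["US"] * 40 + ["EU"] * 30 + ["APAC"] * 30
region_skew = l_infinity(categorical_distribution(train_region, cats),
                         categorical_distribution(serve_region, cats))

edges = [0, 25, 50, 75, 100]
train_age = [20, 30, 35, 40, 45, 50, 55, 60, 65, 70]
serve_age = [60, 65, 70, 72, 75, 78, 80, 85, 90, 95]
age_skew = js_divergence(numeric_distribution(train_age, edges),
                         numeric_distribution(serve_age, edges))

for name, score in [("region", region_skew), ("age", age_skew)]:
    status = "ALERT" if score > THRESHOLD else "ok"
    print(f"{name}: {score:.3f} {status}")
```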

Azure ML supports MLflow for experiment tracking and model management and provides its own pipeline orchestration. The platform excels at CI/CD integration with Azure DevOps and GitHub Actions. Azure Monitor tracks deployed models, and the platform’s governance features (powered by Microsoft Purview) provide comprehensive lineage tracking and compliance reporting.
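CI/CD integration of this kind often comes down to a gate step that blocks promotion unless a newly trained model beats the one in production. The following is a hypothetical, platform-agnostic sketch of such a gate that could run inside an Azure DevOps or GitHub Actions job: it reads two JSON metric files and returns an exit code. The file layout, the `auc` metric name, and the `min_gain` margin are assumptions, not part of Azure ML.

```python
import json
import tempfile
from pathlib import Path


def should_promote(candidate, baseline, metric, min_gain=0.0, higher_is_better=True):
    """True if the candidate improves on the baseline by at least `min_gain`."""
    delta = candidate[metric] - baseline[metric]
    if not higher_is_better:
        delta = -delta  # e.g. for RMSE, a lower candidate value is an improvement
    return delta >= min_gain


def gate(candidate_path, baseline_path, metric="auc", min_gain=0.005):
    """Return a CI exit code: 0 to promote the candidate, 1 to block it."""
    candidate = json.loads(Path(candidate_path).read_text())
    baseline = json.loads(Path(baseline_path).read_text())
    ok = should_promote(candidate, baseline, metric, min_gain)
    print(f"{metric}: candidate={candidate[metric]:.4f} "
          f"baseline={baseline[metric]:.4f} -> {'promote' if ok else 'block'}")
    return 0 if ok else 1


# Demo with hypothetical metric files written to a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    cand = Path(tmp, "candidate_metrics.json")
    base = Path(tmp, "production_metrics.json")
    cand.write_text(json.dumps({"auc": 0.91}))
    base.write_text(json.dumps({"auc": 0.90}))
    exit_code = gate(cand, base)
```

In a real pipeline step, the script would end with something like `sys.exit(gate("candidate_metrics.json", "production_metrics.json"))` so that a non-zero exit code fails the job.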

Winner: SageMaker for comprehensive MLOps features, Azure ML for enterprise governance.

Training Infrastructure and Performance

Infrastructure capabilities have become a major differentiator in 2025:

SageMaker HyperPod reduces training time for foundation models by up to 40% with automatic fault recovery. The platform supports distributed training at massive scale (15,000+ nodes) and provides access to AWS Inferentia3 and Trainium chips for specialized workloads. Spot instance strategies such as managed spot training can reduce costs by up to 90% compared to on-demand pricing.
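Automatic fault recovery and spot-based savings both depend on the same mechanism: checkpointing often enough that an interrupted job can resume instead of starting over. The sketch below simulates this with a hypothetical training loop that is randomly "interrupted" (standing in for a spot reclamation or node failure), writes a checkpoint every few steps, and is restarted by a supervisor loop. It illustrates the pattern only; the managed services handle restarts and checkpoint storage through their own configuration.

```python
import json
import os
import random
import tempfile


class Interrupted(Exception):
    """Stands in for a spot reclamation or hardware failure."""


def train(total_steps, ckpt_path, rng, interrupt_prob=0.05, ckpt_every=10):
    step, state = 0, 0.0
    if os.path.exists(ckpt_path):  # resume instead of starting over
        with open(ckpt_path) as f:
            ckpt = json.load(f)
        step, state = ckpt["step"], ckpt["state"]
    while step < total_steps:
        if rng.random() < interrupt_prob:
            raise Interrupted(f"lost worker at step {step}")
        state += 1.0  # placeholder for one optimizer update
        step += 1
        if step % ckpt_every == 0 or step == total_steps:
            tmp = ckpt_path + ".tmp"
            with open(tmp, "w") as f:
                json.dump({"step": step, "state": state}, f)
            # Swap in the new checkpoint with a single rename (atomic on POSIX),
            # so a crash mid-write never leaves a half-written checkpoint.
            os.replace(tmp, ckpt_path)
    return state


def run_with_auto_restart(total_steps, ckpt_path, seed=0, max_restarts=1000):
    """Supervisor loop: restart the job from its last checkpoint after each failure."""
    rng = random.Random(seed)
    restarts = 0
    while True:
        try:
            return train(total_steps, ckpt_path, rng), restarts
        except Interrupted:
            restarts += 1
            if restarts > max_restarts:
                raise


with tempfile.TemporaryDirectory() as tmpdir:
    final_state, restarts = run_with_auto_restart(200, os.path.join(tmpdir, "ckpt.json"))
print(f"finished with state={final_state} after {restarts} restarts")
```

Because work since the last checkpoint is simply redone, the final state matches an uninterrupted run; the cost of each interruption is bounded by the checkpoint interval.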

Vertex AI provides access to Google’s custom TPU v5p chips and supports GPU clusters that scale efficiently. The platform’s strength is its research-grade infrastructure: it is what Google uses internally to train its own models. Batch predictions for Gemini models cost 50% less than equivalent online requests.

Azure ML offers ND H100 v5 virtual machines that deliver 1.4 petaflops per cluster and can train 70B-parameter models in under 8 hours. Azure Arc-enabled edge deployments support hybrid scenarios spanning 150+ sites, making Azure ML especially well suited to manufacturers who need to train centrally but run inference locally.
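A quick sanity check helps interpret training-time claims like this. A common approximation puts dense transformer training compute at about 6·N·D FLOPs for N parameters and D training tokens, so a fixed compute budget bounds how many tokens can be processed. The sketch assumes the 1.4 petaflops figure means sustained FLOP/s for the whole cluster and adds a hypothetical 40% utilization case; under those assumptions, 8 hours covers roughly 40 to 100 million tokens for a 70B model, which points to fine-tuning rather than pretraining from scratch (which typically uses trillions of tokens).

```python
def max_training_tokens(n_params, flops_per_second, hours, utilization=1.0):
    """Tokens trainable within a compute budget, using the ~6*N*D FLOPs rule of thumb."""
    total_flops = flops_per_second * utilization * hours * 3600
    return total_flops / (6 * n_params)


# Figures from the text: 70B parameters, 1.4 petaFLOP/s, 8 hours.
ideal = max_training_tokens(70e9, 1.4e15, 8)
realistic = max_training_tokens(70e9, 1.4e15, 8, utilization=0.4)  # hypothetical utilization
print(f"{ideal:.2e} tokens at 100% utilization")     # 9.60e+07 tokens at 100% utilization
print(f"{realistic:.2e} tokens at 40% utilization")  # 3.84e+07 tokens at 40% utilization
```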

Winner: Vertex AI for research-grade compute, SageMaker for cost optimization, Azure ML for hybrid deployments.

User Experience and Learning Curve

The learning curve varies significantly:

Vertex AI has the cleanest UI but can be challenging for beginners because of its power and flexibility. Global resource views, which show all resources regardless of region, help prevent costly mistakes such as leaving a forgotten endpoint running in another region. Documentation is improving but sometimes lags behind feature releases.

SageMaker Studio provides a comprehensive IDE but can feel technical and overwhelming initially. AWS’s documentation is excellent and extensive. The modular nature means you can learn incrementally, but the sheer number of services can be daunting.

Azure ML Studio offers the most approachable interface with its visual Designer and intuitive workflows. The platform caters well to users of varying skill levels. Integration with VS Code and the ability to run training scripts locally are highly valued by developers.

Winner: Azure ML for approachability, SageMaker for documentation quality.

Cost Considerations

Pricing is complex across all platforms, with compute resources accounting for the bulk of costs:

- SageMaker charges based on compute usage and data processed. AWS offers savings plans that can reduce costs by up to 64%. Spot instances and Inferentia chips provide significant cost optimization opportunities.

- Vertex AI pricing is based on “Consumed ML Units” which can be harder to predict upfront. However, batch predictions offer substantial savings (50% for Gemini models), and the ability to customize GPU/CPU ratios provides flexibility. Overall costs are comparable to SageMaker.

- Azure ML has a similar pricing model to SageMaker with compute as the primary cost driver. Reserved instances offer up to 42% savings. The platform is generally competitive on price, though costs can climb with complex configurations.
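To see how these discounts play out over a month, the sketch below applies the best-case figures quoted in this article (64% for SageMaker savings plans, 42% for Azure reserved instances, 90% for AWS spot) to a hypothetical $10/hour instance running around the clock for 30 days. The rate and usage are made up, actual discounts depend on commitment term, region, and instance family, and the "up to" percentages are ceilings rather than typical savings.

```python
HOURLY_RATE = 10.00  # hypothetical on-demand price in $/hour
HOURS = 720          # 30 days of continuous use

# Best-case discounts quoted in the text; real savings are usually lower.
DISCOUNTS = {
    "on_demand": 0.00,
    "aws_savings_plan": 0.64,
    "azure_reserved": 0.42,
    "aws_spot": 0.90,
}


def monthly_cost(hourly_rate, hours, discount):
    return hourly_rate * hours * (1 - discount)


costs = {name: monthly_cost(HOURLY_RATE, HOURS, d) for name, d in DISCOUNTS.items()}
for name, cost in costs.items():
    print(f"{name:18s} ${cost:>9,.2f}")
```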

Reality check: All three platforms can become expensive if not managed properly. Cost optimization requires active monitoring, right-sizing instances, using spot/preemptible capacity, and choosing the right deployment strategy (batch vs. real-time).
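The batch vs. real-time choice is often the biggest of these levers. The sketch below compares a hypothetical always-on endpoint with a scheduled batch job on the same $1.50/hour instance type and computes how many job hours per day it would take before batch stops being cheaper. Prices and instance counts are hypothetical, and per-request or per-token pricing (such as the Gemini batch discount) would need a different model.

```python
HOURS_PER_MONTH = 730  # average hours in a month


def realtime_monthly(rate, instances=1):
    """Always-on endpoint: billed every hour, whether or not requests arrive."""
    return rate * instances * HOURS_PER_MONTH


def batch_monthly(rate, instances, job_hours_per_day, days=30):
    """Scheduled batch job: billed only while the job runs."""
    return rate * instances * job_hours_per_day * days


def break_even_hours_per_day(rate, realtime_instances, batch_instances, days=30):
    """Daily batch runtime at which batch costs as much as the always-on endpoint."""
    return realtime_monthly(rate, realtime_instances) / (rate * batch_instances * days)


RATE = 1.50  # hypothetical $/hour for one inference instance
rt = realtime_monthly(RATE)
bt = batch_monthly(RATE, instances=4, job_hours_per_day=2)
be = break_even_hours_per_day(RATE, realtime_instances=1, batch_instances=4)
print(f"real-time: ${rt:,.2f}/month, batch: ${bt:,.2f}/month")
print(f"batch stays cheaper below ~{be:.1f} job hours/day on 4 instances")
```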

Real-World Use Cases and Fit

Choose Vertex AI if:

- You’re already using GCP services extensively

- You need cutting-edge AI models (Gemini, Imagen, Veo)

- Data integration with BigQuery is critical

- You’re building data-heavy applications

- You want TPU access for specialized workloads

- Your team values unified data and AI workflows

Choose SageMaker if:

- You’re invested in the AWS ecosystem

- You need comprehensive MLOps capabilities

- You’re deploying models at massive scale

- Fine-grained infrastructure control is important

- Your team is engineering-heavy

- Cost optimization through specialized chips (Inferentia) is a priority

Choose Azure ML if:

- You’re a Microsoft-centric organization

- You need strong governance and compliance features

- Your industry is heavily regulated (healthcare, finance)

- You want to support both technical and non-technical users

- Integration with Microsoft Fabric, Power BI, or Dynamics is valuable

- Hybrid cloud deployment is required

The Verdict: It Depends (But Here’s How to Decide)

The truth is that all three platforms are mature, capable, and continuously improving. The “best” choice depends on your specific context:

- Follow your cloud ecosystem: If you’re already on AWS, SageMaker is the natural choice. Same logic applies for GCP (Vertex AI) and Azure (Azure ML). The integration benefits and reduced data movement costs make this the primary decision factor for most organizations.

- Consider your team composition: Engineering-heavy teams often prefer SageMaker’s control and flexibility. Teams with mixed skill levels benefit from Azure ML’s accessibility. Research-focused teams appreciate Vertex AI’s cutting-edge capabilities.

- Evaluate your data infrastructure: If you’re heavily using BigQuery, Vertex AI is the clear winner. If you have complex data pipelines across multiple sources, consider SageMaker Lakehouse or Azure’s Synapse integration.

- Think about governance requirements: Regulated industries should seriously consider Azure ML for its comprehensive governance and compliance features.

- Factor in generative AI strategy: If you’re building extensively with foundation models, evaluate the model catalogs. Vertex AI currently has the edge in variety and access to Google’s latest research.
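The checklist above can be encoded as a simple scoring function, shown here as a hypothetical sketch. The `Context` fields and the weights are assumptions chosen to mirror the text (the existing cloud ecosystem counts most, then BigQuery usage and regulatory needs, then team and model-catalog preferences), not a validated model; adjust them to your own priorities.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Context:
    current_cloud: Optional[str] = None  # "aws", "gcp", "azure", or None
    heavy_bigquery: bool = False
    regulated_industry: bool = False
    mixed_skill_team: bool = False
    engineering_heavy: bool = False
    research_focused: bool = False
    needs_model_variety: bool = False


ECOSYSTEM = {"aws": "SageMaker", "gcp": "Vertex AI", "azure": "Azure ML"}


def rank_platforms(ctx):
    """Return (platform, score) pairs, highest score first (ties broken by name)."""
    scores = {"SageMaker": 0, "Vertex AI": 0, "Azure ML": 0}
    if ctx.current_cloud in ECOSYSTEM:
        scores[ECOSYSTEM[ctx.current_cloud]] += 3  # primary decision factor
    if ctx.heavy_bigquery:
        scores["Vertex AI"] += 2
    if ctx.regulated_industry:
        scores["Azure ML"] += 2
    if ctx.mixed_skill_team:
        scores["Azure ML"] += 1
    if ctx.engineering_heavy:
        scores["SageMaker"] += 1
    if ctx.research_focused:
        scores["Vertex AI"] += 1
    if ctx.needs_model_variety:
        scores["Vertex AI"] += 1
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


print(rank_platforms(Context(current_cloud="aws", engineering_heavy=True,
                             needs_model_variety=True)))
# [('SageMaker', 4), ('Vertex AI', 1), ('Azure ML', 0)]
```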

The ML platform market in 2025 is incredibly healthy, with all three vendors pushing each other to innovate. You truly can’t make a wrong choice, but you can make an informed one by aligning platform strengths with your organization’s needs, existing infrastructure, and strategic priorities.

This analysis is based on platform capabilities as of November 2025 and draws from official documentation, industry reports, and hands-on experience with all three platforms.