Welcome back to our Azure Storage Master Class. In Part 1, we explored the fundamentals of Azure Storage and took a deep dive into Blob Storage. Today, we’ll continue our journey by examining three more members of the Azure Storage family: File Storage, Queue Storage, and Table Storage.

These services might not get as much attention as Blob Storage, but they solve critical problems in enterprise architectures. I’ve seen organisations achieve remarkable results by leveraging these services in the right scenarios, and I’ll share those insights with you.

Azure File Storage: Cloud File Shares Done Right

Let’s start with Azure File Storage, which provides fully managed file shares accessible via industry-standard SMB and NFS protocols.

When to Use Azure File Storage

Azure File Storage excels in several scenarios:

- Hybrid file services: Extend your on-premises file servers to the cloud

- Lift-and-shift applications: Support legacy apps that require file system access

- Shared application settings: Store configuration files accessible by multiple VMs

- Container persistent storage: Provide persistent storage for containerized applications

- Developer environments: Maintain shared code repositories or tools

File Storage Media Tiers

Azure File Storage offers two main media tiers to match your workload requirements:

- SSD (Premium): Provides consistent high performance with low latency (single-digit milliseconds for most IO operations). SSD file shares are ideal for IO-intensive workloads like databases, web hosting, and development environments. They support both SMB and NFS protocols, operate on the provisioned v1 billing model, and offer a higher availability SLA than the standard tier.

- HDD (Standard): Offers cost-effective storage for general-purpose file sharing needs. HDD file shares are available with both provisioned v2 and pay-as-you-go billing models, though Microsoft recommends the provisioned v2 model for new deployments. This tier is well-suited for workloads where low latency isn’t critical, such as team shares or shares cached on-premises with Azure File Sync.

SMB vs. NFS: Choosing the Right Protocol

Azure File Storage supports both SMB (Server Message Block) and NFS (Network File System) protocols, though not on the same file share:

- SMB: Native Windows protocol, supports authentication via Active Directory. Available on both premium and standard tiers. SMB file shares support many authentication methods including identity-based authentication with Kerberos.

- NFS: Common in Linux/Unix environments with POSIX file system semantics. Currently only supported on premium file shares (FileStorage accounts). Uses host-based authentication and provides features like hard links and symbolic links that aren’t available with SMB.

Your choice depends primarily on your operating environment, authentication requirements, and file system semantics needed for your application. The protocol can’t be changed after share creation, so choosing correctly during initial deployment is important.
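Because the protocol is fixed at creation time, it can help to check a planned share against the protocol and tier rules above before deploying it. The following is a minimal sketch of such a check; `SharePlan` and `validate_share_plan` are hypothetical names for illustration and are not part of any Azure SDK.

```python
from dataclasses import dataclass

# Support matrix taken from the protocol notes above:
# SMB works on both tiers, NFS only on premium (SSD) shares.
SUPPORTED_TIERS = {
    "SMB": {"premium", "standard"},
    "NFS": {"premium"},
}


@dataclass(frozen=True)
class SharePlan:
    """Hypothetical description of a share you intend to create."""
    name: str
    protocol: str  # "SMB" or "NFS"
    tier: str      # "premium" or "standard"


def validate_share_plan(plan: SharePlan) -> list:
    """Return a list of problems; an empty list means the plan looks consistent."""
    problems = []
    protocol = plan.protocol.upper()
    tier = plan.tier.lower()
    if protocol not in SUPPORTED_TIERS:
        problems.append(f"unknown protocol: {plan.protocol}")
    elif tier not in SUPPORTED_TIERS[protocol]:
        problems.append(f"{protocol} shares are not available on the {tier} tier")
    return problems


if __name__ == "__main__":
    print(validate_share_plan(SharePlan("appdata", "NFS", "standard")))
    print(validate_share_plan(SharePlan("teamshare", "SMB", "standard")))
```

A check like this could run in a deployment pipeline, since a wrong protocol choice can only be corrected by creating a new share.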

# Linux example: Mounting an Azure SMB file share
sudo mount -t cifs //mystorageaccount.file.core.windows.net/myshare /mnt/myshare -o username=mystorageaccount,password=myStorageAccountKey,serverino

# Windows example: Mounting an Azure SMB file share
$connectTestResult = Test-NetConnection -ComputerName mystorageaccount.file.core.windows.net -Port 445
if ($connectTestResult.TcpTestSucceeded) {
    net use Z: \\mystorageaccount.file.core.windows.net\myshare /u:AZURE\mystorageaccount myStorageAccountKey
} else {
    Write-Error "Unable to reach the Azure storage account via port 445. Check your network configuration."
}

Integrating with Azure Active Directory

For environments requiring stronger authentication, Azure Files supports identity-based authentication for SMB shares through Azure AD (now Microsoft Entra ID). This allows you to:

- Use existing user and group permissions

- Implement file-level permissions

- Eliminate the need to embed storage account keys in scripts

This capability is particularly valuable in enterprise environments where security and compliance requirements are stringent.

Advanced Features: Snapshots and Soft Delete

Azure File Storage includes several data protection features:

- File share snapshots: Point-in-time, read-only copies of your data

- Soft delete: Protection against accidental deletion or corruption

- Backup integration: Native integration with Azure Backup

# PowerShell example: Creating a file share snapshot
# (resource group, storage account, and share names are placeholders)
New-AzRmStorageShare -ResourceGroupName "myresourcegroup" -StorageAccountName "mystorageaccount" -Name "myshare" -Snapshot

Pro Tip: I recommend implementing an automated snapshot strategy for all production file shares. While this increases storage costs slightly, the ability to quickly recover from accidental deletion or corruption without a formal backup restore process is invaluable for maintaining business continuity.
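An automated snapshot strategy also needs a pruning rule, otherwise snapshots accumulate indefinitely. The sketch below shows one possible retention rule in plain Python: keep the newest snapshot for each of the last few days and each of the last few weeks. The function name and the default retention counts are assumptions for illustration; the code only decides which timestamps to prune and does not call Azure.

```python
from datetime import datetime, timedelta


def snapshots_to_delete(snapshot_times, now, keep_daily=7, keep_weekly=4):
    """Pick snapshots to prune under a simple daily/weekly retention rule.

    Keeps the newest snapshot of each day within the last `keep_daily` days
    and the newest snapshot of each ISO week within the last `keep_weekly` weeks.
    """
    keep = set()
    seen_days, seen_weeks = set(), set()

    # Walk newest first so the first snapshot seen for a day/week is the newest.
    for ts in sorted(snapshot_times, reverse=True):
        day = ts.date()
        week = tuple(ts.isocalendar())[:2]  # (ISO year, ISO week number)
        if day not in seen_days and (now.date() - day) < timedelta(days=keep_daily):
            seen_days.add(day)
            keep.add(ts)
        if week not in seen_weeks and (now - ts) < timedelta(weeks=keep_weekly):
            seen_weeks.add(week)
            keep.add(ts)

    return [ts for ts in sorted(snapshot_times) if ts not in keep]


if __name__ == "__main__":
    now = datetime(2025, 3, 31, 12, 0)
    snapshots = [
        datetime(2025, 3, 31, 6),
        datetime(2025, 3, 31, 1),
        datetime(2025, 3, 30, 6),
        datetime(2025, 1, 1, 6),
    ]
    for ts in snapshots_to_delete(snapshots, now):
        print("prune", ts.isoformat())
```

The returned timestamps would then be passed to whatever tooling you use to delete snapshots.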

Hybrid Scenarios: Azure File Sync

Azure File Sync is a service that transforms your on-premises file servers into a quick cache of your Azure file shares, offering the best of both worlds:

- Local performance with cloud benefits

- Multi-site access and collaboration

- Tiering of cold data to the cloud

- Centralized backup and disaster recovery

Here’s a typical implementation pattern:

- Deploy a Storage Sync Service in Azure, then install the Azure File Sync agent on your Windows Server and register the server with that service

- Create a sync group in the Azure portal

- Add server endpoints (local folders) and cloud endpoints (Azure file shares)

- Configure cloud tiering if desired

- Monitor synchronisation health
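To make the cloud tiering step more concrete, here is a simplified model of how a tiering policy might pick files: coldest files first, until a free-space target is met, plus an optional idle-time cut-off. This is an illustration of the idea only and not the actual algorithm used by the Azure File Sync agent; `CachedFile`, `files_to_tier`, and the default thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CachedFile:
    path: str
    size_bytes: int
    days_since_access: int


def files_to_tier(files, volume_bytes, free_bytes,
                  min_free_percent=20, max_idle_days=None):
    """Select files to tier to the cloud, coldest first.

    A file is selected while the volume is below the free-space target,
    or whenever it has been idle longer than `max_idle_days` (if set).
    """
    target_free = volume_bytes * min_free_percent / 100
    selected = []
    for f in sorted(files, key=lambda f: f.days_since_access, reverse=True):
        over_idle = max_idle_days is not None and f.days_since_access > max_idle_days
        if free_bytes < target_free or over_idle:
            selected.append(f.path)
            free_bytes += f.size_bytes  # tiering frees the local copy's space
    return selected


if __name__ == "__main__":
    cache = [
        CachedFile("reports/2019.xlsx", 5, 100),
        CachedFile("video/townhall.mp4", 10, 50),
        CachedFile("today/notes.docx", 5, 1),
    ]
    print(files_to_tier(cache, volume_bytes=100, free_bytes=10))
```

Recently used files stay local, which is what preserves on-premises performance for the active working set.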

For more information on Azure File Sync, see our guide, Mastering Azure File Sync: The Ultimate Guide to Streamlining Your File Storage.

Azure Queue Storage: Reliable Messaging for Distributed Applications

Next, let’s explore Queue Storage, which provides reliable messaging between application components.

Queue Storage Fundamentals

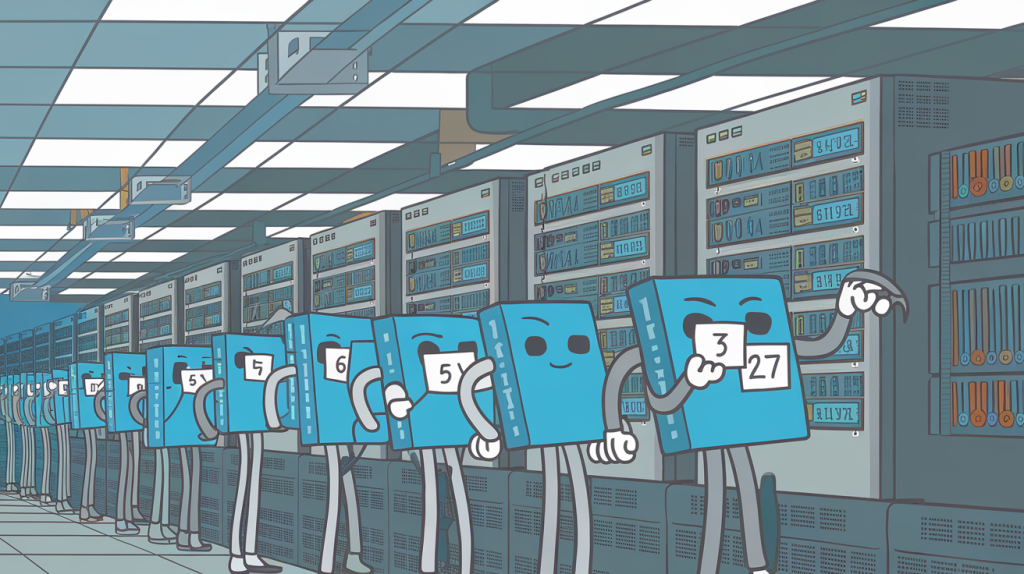

At its core, Azure Queue Storage implements a simple but powerful concept:

- Messages (up to 64KB) are added to the back of a queue

- Messages are retrieved from the front of the queue

- When a message is retrieved, it becomes invisible for a specified period

- The recipient must explicitly delete the message after processing

- If not deleted, the message reappears in the queue

This pattern enables reliable communication between distributed components, even when those components may be temporarily unavailable or under heavy load.
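The receive, hide, delete cycle described above can be modelled in a few lines. The sketch below is a toy in-memory queue, not the Azure SDK; `InMemoryQueue` and its method names are hypothetical, and time is passed in explicitly so the behaviour is easy to follow.

```python
import itertools
from dataclasses import dataclass


@dataclass
class _Message:
    id: int
    body: str
    visible_at: float = 0.0   # time at which the message can be received again
    dequeue_count: int = 0    # how many times it has been handed to a consumer


class InMemoryQueue:
    """Toy model of the receive / delete cycle with a visibility timeout."""

    def __init__(self):
        self._messages = []
        self._ids = itertools.count(1)

    def send(self, body):
        """Add a message to the back of the queue."""
        self._messages.append(_Message(next(self._ids), body))

    def receive(self, now, visibility_timeout=30.0):
        """Return the first visible message and hide it until now + timeout."""
        for msg in self._messages:
            if msg.visible_at <= now:
                msg.visible_at = now + visibility_timeout
                msg.dequeue_count += 1
                return msg
        return None

    def delete(self, msg):
        """Consumers call this only after processing succeeded."""
        self._messages.remove(msg)


if __name__ == "__main__":
    queue = InMemoryQueue()
    queue.send("resize image 42")
    first = queue.receive(now=0.0)            # consumer A takes the message
    print(queue.receive(now=10.0))            # None: hidden from other consumers
    retry = queue.receive(now=31.0)           # A never deleted it, so it reappears
    print(retry.body, retry.dequeue_count)    # resize image 42 2
    queue.delete(retry)
```

A consequence of this design is at-least-once delivery: a consumer that crashes after processing but before deleting will cause the message to be processed again, so handlers should be idempotent.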

Key Scenarios for Queue Storage

Queue Storage shines in these common scenarios:

- Workload decoupling: Separate producers and consumers of work

- Load levelling: Handle traffic spikes without overloading backend systems

- Batch processing: Collect work items for efficient processing

- Asynchronous communication: Enable “fire and forget” operations

- Microservice communication: Implement resilient service-to-service messaging

// C# example: Adding a message to a queue
// Note: CloudStorageAccount and CloudQueue come from the legacy WindowsAzure.Storage
// client library (assumed here); the newer Azure.Storage.Queues package has a different API.
using Microsoft.WindowsAzure.Storage;
using Microsoft.WindowsAzure.Storage.Queue;

CloudStorageAccount storageAccount = CloudStorageAccount.Parse(connectionString);
CloudQueueClient queueClient = storageAccount.CreateCloudQueueClient();
CloudQueue queue = queueClient.GetQueueReference("myqueue");
await queue.CreateIfNotExistsAsync();

// Create and add the message
CloudQueueMessage message = new CloudQueueMessage("Hello, World");
await queue.AddMessageAsync(message);

// C# example: Processing messages from a queue
// (a separate snippet; queueClient is created as in the previous example)
CloudQueue queue = queueClient.GetQueueReference("myqueue");
CloudQueueMessage retrievedMessage = await queue.GetMessageAsync();
if (retrievedMessage != null)
{
    // Process the message
    Console.WriteLine($"Message: {retrievedMessage.AsString}");

    // Delete the message so it does not reappear after the visibility timeout
    await queue.DeleteMessageAsync(retrievedMessage);
}

Queue Storage vs. Service Bus: Making the Right Choice

Azure offers two primary queuing services: Queue Storage and Service Bus. Here’s when to choose each:

Choose Queue Storage when you need:

- Simple, cost-effective queuing

- Messages up to 64KB

- Queue size potentially exceeding 80GB

- Progress tracking for messages while they are being processed, with no requirement for guaranteed FIFO delivery

Choose Service Bus when you need:

- Advanced messaging features (topics, sessions, transactions)

- Guaranteed FIFO delivery (through message sessions)

- Automatic duplicate detection

- Messages up to 100MB (with premium tier)

- Integration with hybrid environments

Decision framework: In my work, I generally recommend starting with Queue Storage for simplicity and cost-effectiveness, then migrating to Service Bus only when specific advanced features are required. This approach aligns with the cloud principle of starting simple and scaling up as needed.
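The checklist and decision framework above can be written down as a small helper. This is a sketch of my rule of thumb under the limits quoted in this section, not an official selection tool; `recommend_queue_service` and its parameters are hypothetical names.

```python
QUEUE_STORAGE_MAX_BYTES = 64 * 1024                  # 64 KB limit quoted above
SERVICE_BUS_PREMIUM_MAX_BYTES = 100 * 1024 * 1024    # 100 MB, premium tier


def recommend_queue_service(max_message_bytes, needs_fifo=False,
                            needs_duplicate_detection=False,
                            needs_advanced_features=False):
    """Return (service, reasons) following the checklist in this section."""
    if max_message_bytes > SERVICE_BUS_PREMIUM_MAX_BYTES:
        return "neither", ["message is larger than 100 MB"]

    reasons = []
    if max_message_bytes > QUEUE_STORAGE_MAX_BYTES:
        reasons.append("message is larger than 64 KB")
    if needs_fifo:
        reasons.append("guaranteed FIFO delivery")
    if needs_duplicate_detection:
        reasons.append("automatic duplicate detection")
    if needs_advanced_features:
        reasons.append("topics, sessions, or transactions")

    if reasons:
        return "Service Bus", reasons
    # Default: start simple and move only when a specific feature is needed.
    return "Queue Storage", ["no advanced feature required; start simple"]


if __name__ == "__main__":
    print(recommend_queue_service(2 * 1024))
    print(recommend_queue_service(2 * 1024, needs_fifo=True))
```

When a payload is too large for either service, a common workaround is to store it in Blob Storage and queue only a reference to it.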

Performance and Scalability Considerations

For high-throughput scenarios, consider these best practices:

- Use multiple queues: Distribute load across multiple queues

- Batch operations: Retrieve messages in batches (up to 32 per request) where possible; note that each message must still be deleted individually

- Implement backoff strategies: Exponentially back off when queues are empty

- Consider message TTL: Set appropriate time-to-live values

- Optimise visibility timeout: Balance between retry speed and processing time
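The backoff recommendation is the one I see skipped most often, so here is a minimal sketch of it. The `receive`, `handle`, and `sleep` callables are placeholders you would supply (for example a wrapper around your queue client and `time.sleep`); the default delays are illustrative assumptions rather than recommended values.

```python
import random


def backoff_delays(base=1.0, factor=2.0, cap=60.0, jitter=0.0, rng=random.random):
    """Yield polling delays in seconds: base, base*factor, ... capped at `cap`.

    `jitter` is a fraction (0.1 = +/-10%) used to spread out competing pollers.
    """
    delay = base
    while True:
        spread = delay * jitter
        yield delay + (rng() * 2 - 1) * spread
        delay = min(delay * factor, cap)


def poll(receive, handle, sleep, max_empty_polls=5, **backoff_options):
    """Poll `receive()` until it returns None `max_empty_polls` times in a row."""
    delays = backoff_delays(**backoff_options)
    empty = 0
    while empty < max_empty_polls:
        message = receive()
        if message is None:
            empty += 1
            sleep(next(delays))  # wait longer after each consecutive empty poll
        else:
            handle(message)
            empty = 0
            delays = backoff_delays(**backoff_options)  # reset after real work


if __name__ == "__main__":
    pending = iter(["job-1", None, None, "job-2", None, None, None])
    poll(lambda: next(pending), print, lambda s: print(f"sleep {s:.0f}s"),
         max_empty_polls=3, base=1, factor=2, cap=8)
```

Since every poll is a billable transaction, backing off on an empty queue reduces cost as well as load.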

Azure Table Storage: NoSQL Storage for Semi-Structured Data

Finally, let’s explore Table Storage, Azure’s NoSQL key-attribute store.

Understanding Table Storage Basics

Azure Table Storage provides a schema-less, key-attribute store for semi-structured data:

- Data is stored in tables (similar to NoSQL collections)

- Each table contains entities (similar to rows)

- Entities have a partition key and row key that together form a unique identifier

- Entities can have up to 255 properties (columns) of various data types; this count includes the system properties PartitionKey, RowKey, and Timestamp

- Each entity can have different properties and data types

This flexibility makes Table Storage ideal for scenarios where data structures may evolve over time.
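To illustrate what "schema-less" means in practice, the following sketch models a table as a dictionary keyed by the (partition key, row key) pair. It is a toy model, not the Table Storage SDK; `InMemoryTable` is a hypothetical name, and limits such as the property count are deliberately left out.

```python
class InMemoryTable:
    """Toy key-attribute store: entities are dicts keyed by (PartitionKey, RowKey)."""

    def __init__(self):
        self._entities = {}

    def insert(self, entity):
        """Insert an entity; the key pair must be unique within the table."""
        key = (entity["PartitionKey"], entity["RowKey"])
        if key in self._entities:
            raise KeyError(f"entity {key} already exists")
        self._entities[key] = dict(entity)

    def get(self, partition_key, row_key):
        """Look up a single entity by its full key, or return None."""
        return self._entities.get((partition_key, row_key))


if __name__ == "__main__":
    people = InMemoryTable()
    # Two entities in the same table with different property sets.
    people.insert({"PartitionKey": "Harp", "RowKey": "Walter",
                   "Email": "walter@example.com"})
    people.insert({"PartitionKey": "Harp", "RowKey": "Ann",
                   "LoyaltyPoints": 120})
    print(people.get("Harp", "Ann"))
```

Adding a new property later requires no migration: newer entities simply carry it, and readers must tolerate its absence on older ones.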

Key Scenarios for Table Storage

Table Storage is particularly well-suited for:

- User/device preferences: Storing settings or preferences

- Catalogue data: Product information, pricing data

- Reference data: ZIP codes, tax rates, configuration data

- IoT device metadata: Device information (not the high-volume telemetry itself)

- Session state: Web application session information

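// C# example: Adding an entity to Table Storage
// Note: uses the legacy WindowsAzure.Storage client library (assumed here).
using Microsoft.WindowsAzure.Storage;
using Microsoft.WindowsAzure.Storage.Table;

CloudStorageAccount storageAccount = CloudStorageAccount.Parse(connectionString);
CloudTableClient tableClient = storageAccount.CreateCloudTableClient();
CloudTable table = tableClient.GetTableReference("people");
await table.CreateIfNotExistsAsync();

// Create a new customer entity
CustomerEntity customer = new CustomerEntity("Harp", "Walter")
{
    Email = "walter@example.com",
    PhoneNumber = "425-555-0101"
};

// Add the entity
TableOperation insertOperation = TableOperation.Insert(customer);
await table.ExecuteAsync(insertOperation);

// CustomerEntity is not defined in the original snippet; a minimal definition is assumed here
public class CustomerEntity : TableEntity
{
    public CustomerEntity() { }
    public CustomerEntity(string lastName, string firstName) { PartitionKey = lastName; RowKey = firstName; }
    public string Email { get; set; }
    public string PhoneNumber { get; set; }
}

Partition Key Selection: The Key to Performance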

The most critical design decision in Table Storage is your partition key choice:

- Entities with the same partition key are stored together

- Operations within a single partition are more efficient

- Queries that specify a partition key are more efficient

- Choose partition keys based on access patterns
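The difference between these query shapes is easiest to see by counting how many entities each one has to examine. The sketch below uses a toy in-memory model, not the Table Storage service; `PartitionedTable` and the device data are hypothetical.

```python
from collections import defaultdict


class PartitionedTable:
    """Toy model: entities grouped by partition key, then indexed by row key."""

    def __init__(self):
        self._partitions = defaultdict(dict)   # partition key -> {row key: entity}

    def insert(self, partition_key, row_key, **properties):
        self._partitions[partition_key][row_key] = properties

    def point_query(self, partition_key, row_key):
        """Both keys known: one partition, one lookup."""
        return self._partitions.get(partition_key, {}).get(row_key)

    def partition_query(self, partition_key, predicate):
        """Partition key known: only that partition's entities are examined."""
        rows = self._partitions.get(partition_key, {})
        return [e for e in rows.values() if predicate(e)], len(rows)

    def table_scan(self, predicate):
        """No partition key: every entity in every partition is examined."""
        examined, matches = 0, []
        for rows in self._partitions.values():
            for entity in rows.values():
                examined += 1
                if predicate(entity):
                    matches.append(entity)
        return matches, examined


if __name__ == "__main__":
    table = PartitionedTable()
    for device in ("dev-1", "dev-2", "dev-3"):
        for n in range(100):
            table.insert(device, f"{n:04d}", device=device, reading=n)

    _, examined = table.partition_query("dev-2", lambda e: e["reading"] >= 98)
    print("partition query examined", examined)
    _, examined = table.table_scan(
        lambda e: e["device"] == "dev-2" and e["reading"] >= 98)
    print("table scan examined", examined)
```

If most of your queries ask "what happened on device X", the device ID is a natural partition key; if they ask "what happened on day Y", it is not.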

Table Storage vs. Cosmos DB: Evolution Path

While Table Storage remains useful, Azure Cosmos DB offers a Table API (since renamed Azure Cosmos DB for Table) with enhanced capabilities:

- Global distribution

- Higher throughput

- Multiple consistency levels

- Automatic indexing

- Richer query capabilities

For new projects, consider starting with Cosmos DB’s Table API; because it is compatible with the Table Storage API, it also gives existing Table Storage applications an upgrade path.

Integration Patterns: Bringing It All Together

Now that we’ve explored each service individually, let’s look at how they can work together in comprehensive solutions.

Pattern 1: Media Processing Pipeline

- Blob Storage: Stores incoming media files

- Event Grid: Triggers when new files are uploaded

- Queue Storage: Manages processing tasks

- Azure Functions: Processes media based on queue messages

- Table Storage: Tracks processing status and metadata

This pattern decouples upload from processing, allowing for resilient, scalable media handling.
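The data flow of this pipeline can be sketched without any Azure dependencies: the queue carries a small JSON reference to the blob, and a status table records the outcome. In the sketch below a `deque` stands in for Queue Storage and a dictionary for Table Storage; the function names, the message fields, and the example URLs are all hypothetical.

```python
import json
from collections import deque


def make_task_message(blob_url):
    """Queue message body: a small JSON reference to the blob, not the media itself."""
    return json.dumps({"blob_url": blob_url, "action": "transcode"})


def run_worker(queue, status_table, process):
    """Drain the queue, recording each task's outcome in the status table."""
    while queue:
        task = json.loads(queue.popleft())
        url = task["blob_url"]
        status_table[url] = "processing"
        try:
            process(url)
            status_table[url] = "done"
        except Exception as exc:
            # Simplification: a real worker would leave the message undeleted
            # so that it reappears and is retried after the visibility timeout.
            status_table[url] = f"failed: {exc}"


if __name__ == "__main__":
    tasks = deque([
        make_task_message("https://example.invalid/media/a.mp4"),
        make_task_message("https://example.invalid/media/b.mp4"),
    ])
    status = {}

    def fake_transcode(url):
        if url.endswith("b.mp4"):
            raise ValueError("unsupported codec")

    run_worker(tasks, status, fake_transcode)
    print(status)
```

Keeping only a reference in the message is what lets the pipeline handle large media files despite the 64 KB message limit.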

Pattern 2: Hybrid Document Management

- File Storage: Provides SMB access for legacy applications

- File Sync: Enables local caching with cloud storage

- Blob Storage: Long-term archival of historical documents

- Table Storage: Stores document metadata and access records

This pattern provides a seamless transition path from traditional file servers to cloud-native storage.

Pattern 3: IoT Data Platform

- Queue Storage: Ingests device messages reliably

- Blob Storage: Archives raw telemetry data

- Table Storage: Stores device metadata and configuration

- Azure Functions: Processes and aggregates telemetry data

This pattern handles variable device connectivity while maintaining data integrity.

Security Best Practices Across Storage Services

Regardless of which storage services you use, apply these security principles:

- Use managed identities: Leverage Azure AD identities rather than shared keys

- Implement least privilege: Grant only the permissions needed for each application

- Enable advanced threat protection: Use Microsoft Defender for Storage to detect and respond to unusual access patterns

- Encrypt sensitive data: Use client-side encryption for highly sensitive information

- Implement network isolation: Use private endpoints where possible

- Enable soft delete and versioning: Protect against accidental or malicious deletion

- Monitor and audit: Enable diagnostic logs and regularly review access patterns

Security recommendation: I strongly encourage all organisations to implement a regular storage security review process. The most common vulnerability I encounter in audits is overly permissive access controls that accumulate over time through “temporary” solutions that become permanent.

Cost Optimisation Strategies

Managing costs effectively requires a deliberate approach:

- Right-size your storage: Use appropriate performance tiers

- Implement lifecycle management: Automate tiering and deletion

- Reserve capacity: Use Azure Reserved Capacity for predictable workloads

- Monitor usage patterns: Identify and address unexpected increases

- Optimise transaction counts: Batch operations where possible

- Clean up unneeded data: Regularly review and remove unnecessary data

Cost insight: In one cloud optimisation project, we reduced storage costs by 38% simply by implementing the correct access tiers and reservation strategy – with no performance impact whatsoever. This type of “free money” is often overlooked in busy IT operations.

Coming Up Next

In Part 3, our final instalment, we’ll explore advanced topics including:

- Disaster recovery strategies across storage services

- Data migration approaches and tools

- Performance tuning for high-scale implementations

- Integrating with other Azure services (Functions, Logic Apps, etc.)

- Monitoring and operational excellence

- Future road map and emerging storage capabilities

I’ll also provide a comprehensive implementation checklist to ensure you’ve covered all bases when deploying Azure Storage in production environments.

Until then, I encourage you to experiment with the services we’ve discussed today. Create test environments, compare performance characteristics, and determine which combination of services best meets your specific requirements.