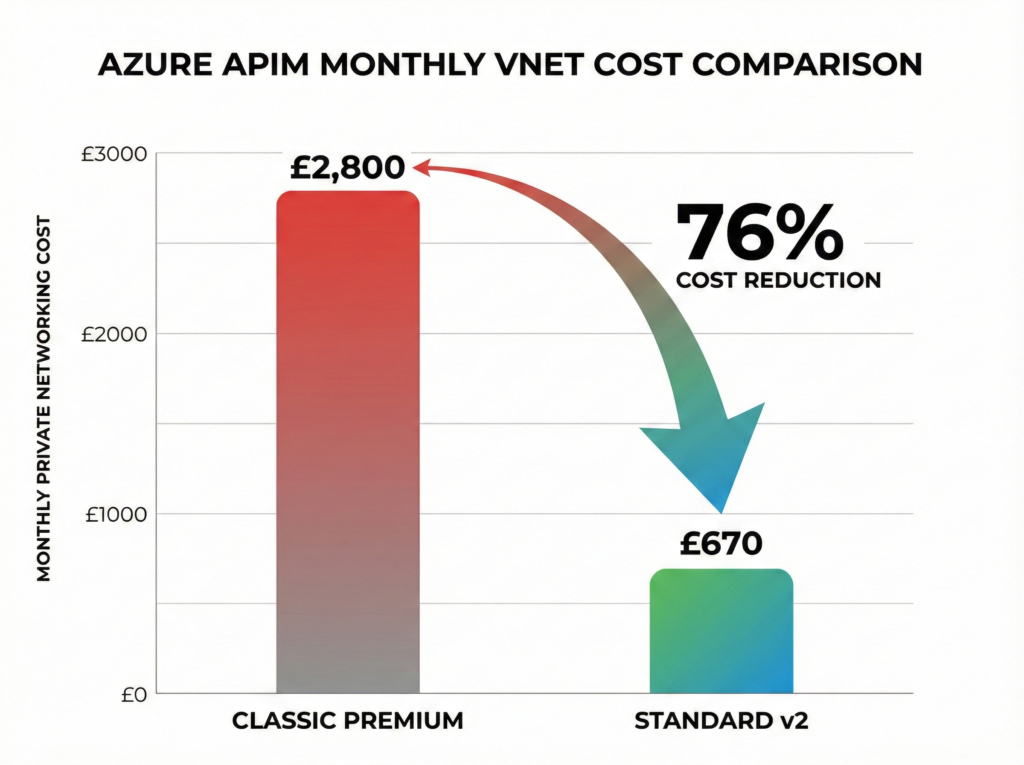

Azure API Management processes over 2 trillion API calls monthly across 35,000+ organisations, yet 60-75% of enterprises deploying it discover too late that its configuration creates vendor lock-in comparable to running your entire infrastructure on proprietary frameworks. The platform’s November 2024 Premium v2 launch and Model Context Protocol support signal a fundamental repositioning: Microsoft isn’t competing for the API gateway market anymore; it’s claiming the AI governance space before competitors realise there’s a race. The v2 tiers slash VNet integration costs from £2,800 to £670 monthly whilst eliminating the networking complexity that plagued Premium deployments, but organisations migrating from AWS API Gateway or Kong face a strategic calculation that extends far beyond monthly billing.

Traditional API management treated APIs as HTTP endpoints requiring throttling and documentation. That model died when generative AI moved from prototype to production. Azure APIM’s token-based rate limiting, semantic caching for LLM responses, and native integration with Azure OpenAI create the first complete token lifecycle management framework available at the platform level. The Access Group’s migration from 450 million annual API calls to a governance framework supporting 1,200+ API definitions demonstrates production-scale viability, whilst Backbase’s deployment across 25 countries with sub-second latency proves the architecture scales globally. The question facing platform architects isn’t whether Azure APIM works at enterprise scale – the case studies confirm it does – but whether committing to Microsoft’s policy model and Entra ID integration represents strategic positioning or constraint.

Most organisations approach API management as a procurement decision: evaluate features, compare pricing, select vendor. This thinking treats a 5-10 year architectural commitment as a software purchase. Azure APIM’s value proposition centres on ecosystem lock-in as competitive advantage. Deep integration with Entra ID, Key Vault, Functions, Application Insights, and Azure OpenAI means authentication flows, secrets management, serverless backends, and observability require minimal configuration if you’re all-in on Microsoft. Step outside that ecosystem and you’re writing custom policies for every integration, managing duplicate identity systems, and explaining to auditors why your API gateway can’t enforce the same conditional access policies protecting your other resources. The v2 architecture, AI gateway capabilities, and hybrid deployment model through self-hosted gateways position APIM as Microsoft’s integration control plane – which makes it either your strategic advantage or your largest technical dependency.

The 2026 API Management Market: Strategic Inflection Point

The API management market reached $5.4-8.9 billion in 2024, with analysts projecting 17-35% compound annual growth through 2030 as organisations shift from managing REST endpoints to governing AI model access. Cloud-deployed solutions command 60-80% market share, whilst hybrid deployments grow at 22% annually as regulatory requirements force data residency patterns that pure SaaS cannot satisfy. Banking and financial services drive 28-34% of adoption, followed by IT/telecommunications and retail sectors where API-driven business models separate leaders from laggards.

Azure APIM holds 15% mindshare in the API management category according to PeerSpot’s 2024 analysis, down from 19.3% the previous year despite maintaining the platform’s highest user rating at 7.8/10. The decline reflects market fragmentation, as organisations pursue multi-cloud API strategies rather than vendor consolidation, and that shift indicates a fundamental strategic repositioning across the market. Microsoft’s absence from public Leader claims in Gartner’s 2024 Magic Quadrant for Full Lifecycle API Management marks a notable shift from its 2020-2022 Leader designations, though it retained Leader status in the iPaaS Magic Quadrant for a seventh consecutive year, highlighting integration strength over pure API gateway capabilities.

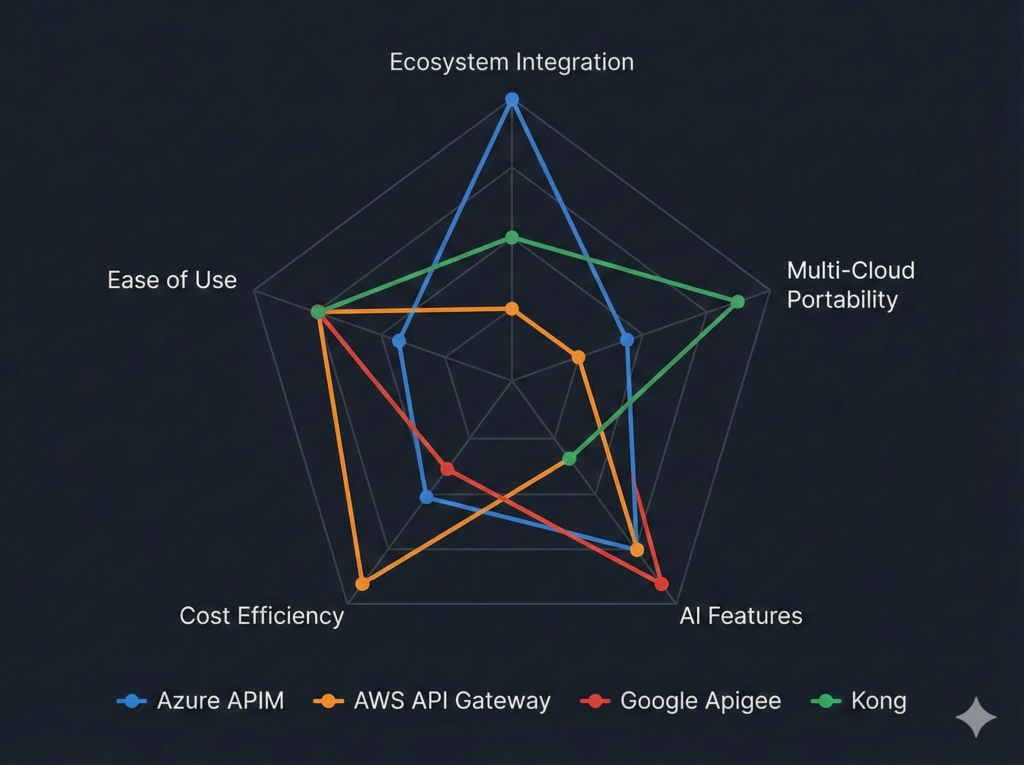

The competitive landscape reveals distinct strategic positions. AWS API Gateway dominates serverless-first architectures with per-call pricing starting at approximately £2.80 per million requests, but provides minimal lifecycle management beyond basic throttling and caching. Google Apigee maintains nine consecutive years as Gartner Leader with the industry’s strongest analytics and API monetisation capabilities, targeting organisations treating APIs as revenue-generating products. Kong scores highest on “Completeness of Vision” with the lowest vendor lock-in risk through its open-source core, appealing to multi-cloud strategies requiring portability. MuleSoft leads integration depth but commands premium pricing reflecting its enterprise service bus heritage. Azure APIM’s differentiator remains ecosystem depth, building on the hybrid cloud capabilities we explored in our Azure Arc analysis, particularly for organisations already standardised on Entra ID for identity governance.

V2 Architecture: Fundamental Platform Rebuild

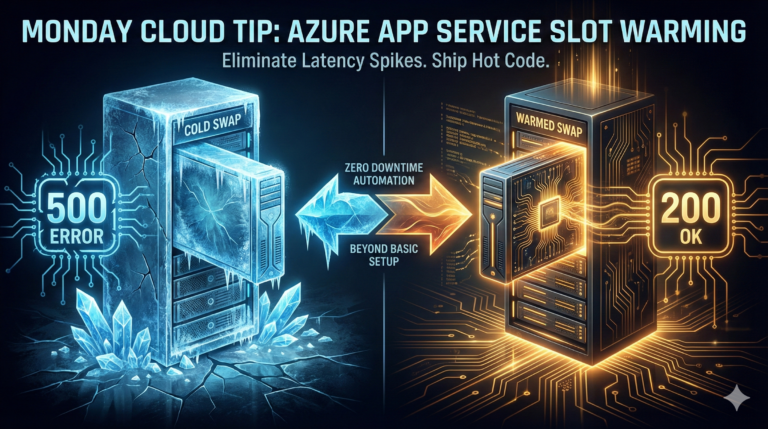

The v2 tier launch represents ground-up platform reconstruction rather than incremental improvement. Basic v2 and Standard v2 reached general availability in April 2024, with Premium v2 following in November 2024, delivering deployment times measured in minutes rather than hours and scaling to 10 units (30 for Premium v2) compared to classic tiers’ single-digit limits.

Standard v2’s most significant impact centres on VNet integration economics. The classic tier required Premium at £2,800 monthly for private networking, creating a £33,600 annual barrier for organisations requiring internal API exposure. Standard v2 delivers identical VNet integration at approximately £670 monthly, reducing the private networking entry point by 76% whilst eliminating the route tables and service endpoints classic Premium demanded from customer networks. Premium v2 further simplifies VNet injection by removing infrastructure prerequisites from tenant networks entirely, addressing the most common deployment blocker for classic Premium implementations.
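The saving quoted above can be checked with a few lines of arithmetic. This is only a sketch using the monthly figures from the text; the constant and function names are illustrative.

```python
# Monthly prices quoted in the text (GBP); names are illustrative.
CLASSIC_PREMIUM_MONTHLY = 2800
STANDARD_V2_MONTHLY = 670


def annual_cost(monthly: float) -> float:
    """Twelve months at a flat monthly price."""
    return monthly * 12


def percent_reduction(old: float, new: float) -> float:
    """Relative saving when moving from `old` to `new`, in percent."""
    return (old - new) / old * 100


classic_annual = annual_cost(CLASSIC_PREMIUM_MONTHLY)
reduction = percent_reduction(CLASSIC_PREMIUM_MONTHLY, STANDARD_V2_MONTHLY)

print(f"Classic Premium per year: £{classic_annual:,.0f}")
print(f"Entry-point reduction with Standard v2: {reduction:.0f}%")
```

The same helper makes it easy to rerun the comparison when list prices change.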

The architectural improvements extend beyond cost. Version 2 platforms support zone redundancy natively, eliminating the multi-region complexity classic tiers required for availability zone distribution. The new compute infrastructure enables horizontal scaling that responds to traffic patterns in minutes rather than the 15-45 minute scaling windows classic tiers exhibited under load. Microsoft’s telemetry indicates v2 deployments consume 40% less memory for equivalent workloads through optimised runtime efficiency.

Current v2 limitations require strategic consideration. Multi-region deployment remains unavailable, forcing organisations requiring geographic distribution to deploy multiple instances with external traffic management. Git-based configuration and backup/restore capabilities planned for future releases mean infrastructure-as-code workflows currently require alternative approaches. Migration from classic tiers lacks automated tooling, necessitating manual rebuild for organisations with established APIM deployments. These gaps represent delivery priorities rather than architectural constraints, but affect deployment timing for specific use cases.

AI Gateway Capabilities: Token Governance at Scale

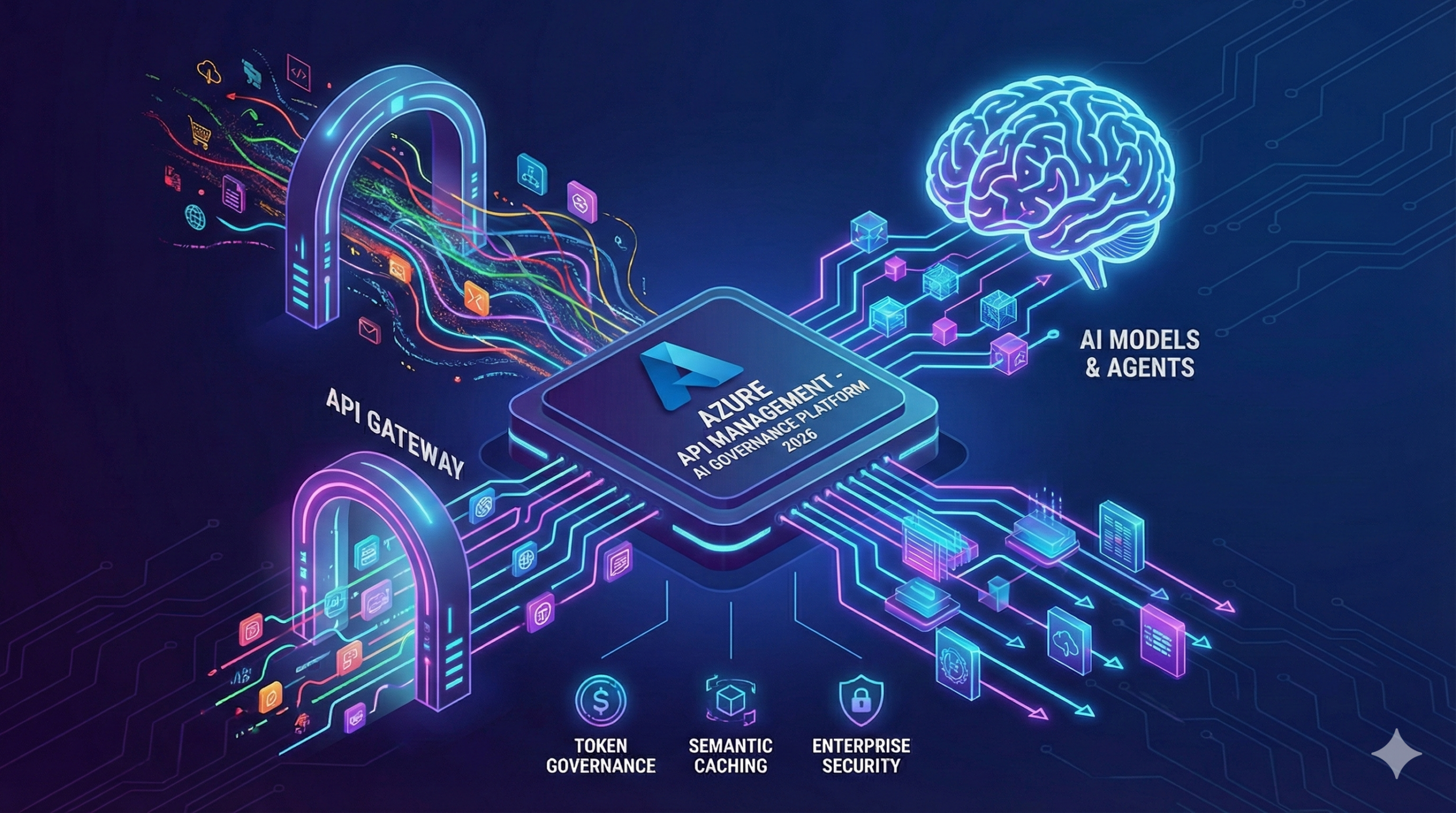

Azure APIM’s evolution from API gateway to AI governance platform represents the most strategically significant development in Microsoft’s 2024-2025 roadmap. The September 2025 Model Context Protocol server support positions APIM as the governance layer for agentic AI systems, enabling policy enforcement across AI agents, tools, and models alongside traditional API management.

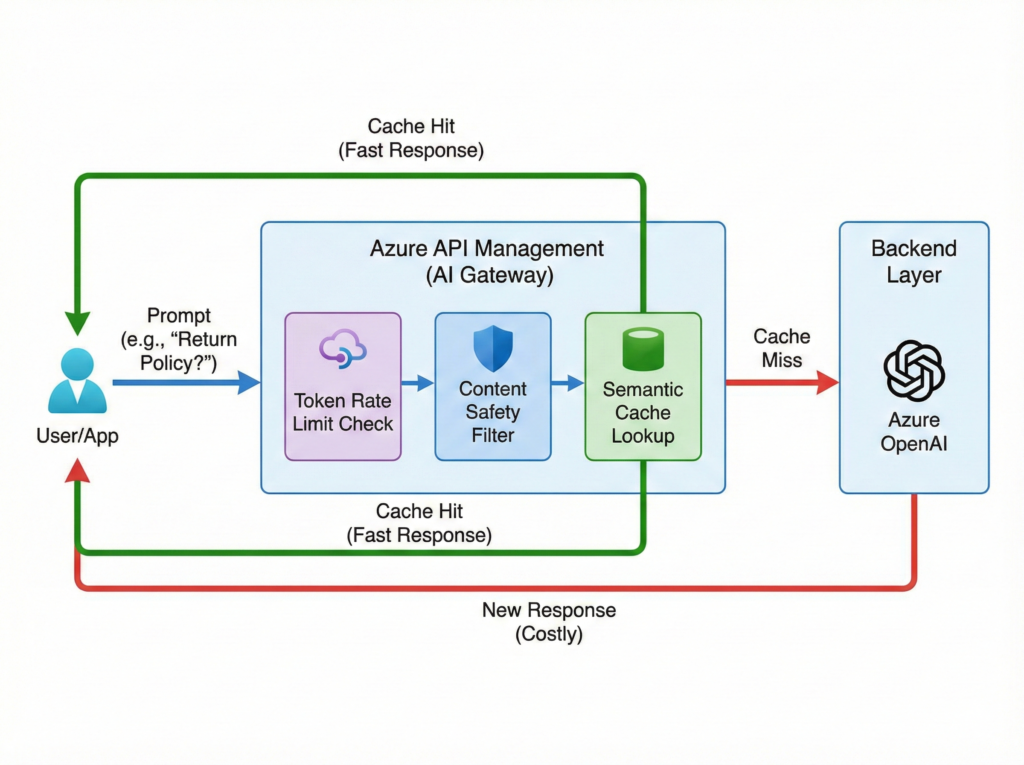

Token-based rate limiting addresses the fundamental challenge of LLM cost management. Traditional API throttling measures requests per second, but AI workloads incur costs per token processed. APIM’s token-aware policies enable limits like “5,000 tokens per minute per API key” with built-in retry logic and queue-based buffering during quota exhaustion. Semantic caching extends beyond traditional response caching by recognising semantically equivalent prompts, reducing redundant LLM calls for common queries. A customer support chatbot answering “What’s your return policy?” and “How do I return an item?” can serve both from cache despite different phrasing, delivering sub-100ms responses whilst eliminating duplicate Azure OpenAI charges.
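To make the difference from request-based throttling concrete, here is a minimal sketch of a per-key tokens-per-minute limit. It is not APIM's implementation: the class name, the fixed one-minute window, and the injectable clock are assumptions chosen for illustration, and retry or queueing behaviour is left out.

```python
import time
from typing import Callable, Dict, Tuple


class TokensPerMinuteLimiter:
    """Fixed-window limiter that counts LLM tokens rather than requests."""

    def __init__(self, tokens_per_minute: int,
                 clock: Callable[[], float] = time.monotonic):
        self.limit = tokens_per_minute
        self.clock = clock
        # api_key -> (window index, tokens consumed in that window)
        self._usage: Dict[str, Tuple[int, int]] = {}

    def try_consume(self, api_key: str, tokens: int) -> bool:
        """Return True and record usage if the call fits the key's quota."""
        window = int(self.clock() // 60)
        used_window, used = self._usage.get(api_key, (window, 0))
        if used_window != window:
            used = 0  # a new minute started, so the quota resets
        if used + tokens > self.limit:
            self._usage[api_key] = (window, used)
            return False  # rejected calls do not consume quota
        self._usage[api_key] = (window, used + tokens)
        return True


# Usage with a fake clock so the example is deterministic.
now = [0.0]
limiter = TokensPerMinuteLimiter(5000, clock=lambda: now[0])

first = limiter.try_consume("key-a", 3000)        # fits: 3000 <= 5000
second = limiter.try_consume("key-a", 2500)       # rejected: 5500 > 5000
other_key = limiter.try_consume("key-b", 2500)    # separate quota per key
now[0] = 61.0
after_reset = limiter.try_consume("key-a", 2500)  # new window
```

A single large prompt can exhaust the quota that hundreds of small requests would not, which is the case request-per-second throttling misses.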

Content safety policies integrate Azure AI Content Safety for automated moderation, blocking harmful content at the gateway level before reaching backend models. The policy framework supports configurable severity thresholds across violence, hate speech, self-harm, and sexual content categories with audit logging for compliance requirements. Organisations can implement different safety profiles across development, staging, and production environments, enforcing stricter controls in customer-facing deployments whilst allowing broader experimentation in non-production contexts.
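The idea of per-environment safety profiles can be sketched as a threshold lookup. Everything here is hypothetical: the profile names, the threshold values, and the assumption that the moderation service returns an integer severity per category where higher means more severe.

```python
from typing import Dict, List

# Hypothetical profiles: a category is blocked when its severity score
# is greater than or equal to the threshold for that environment.
PROFILES: Dict[str, Dict[str, int]] = {
    "development": {"violence": 6, "hate": 6, "self_harm": 6, "sexual": 6},
    "production": {"violence": 2, "hate": 2, "self_harm": 2, "sexual": 2},
}


def blocked_categories(environment: str, scores: Dict[str, int]) -> List[str]:
    """Return the categories whose score meets or exceeds the threshold."""
    thresholds = PROFILES[environment]
    return sorted(
        category
        for category, score in scores.items()
        if score >= thresholds[category]
    )


# The same moderation result passes in development but is blocked in production.
scores = {"violence": 4, "hate": 0, "self_harm": 0, "sexual": 0}
dev_result = blocked_categories("development", scores)
prod_result = blocked_categories("production", scores)
```

Keeping the thresholds in data rather than code means each environment can be audited by reading a single table.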

Native Azure OpenAI integration simplifies LLM backend management through declarative configuration. APIM handles load balancing across multiple Azure OpenAI deployments, failover during regional outages, and circuit breaking when error rates exceed thresholds. The backend pool configuration supports weighted distribution for A/B testing model versions, enabling gradual rollout of GPT-4o deployments whilst maintaining GPT-4 fallbacks. Circuit breaking prevents cascade failures by temporarily removing unhealthy backends from rotation, implementing the resilience patterns detailed in our hybrid cloud architecture guide.
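The combination of weighted distribution and circuit breaking can be sketched in a few dozen lines. This is an illustration of the pattern under stated assumptions, not APIM's backend pool: the class names, the consecutive-failure threshold, the fixed cooldown, and the deployment names are all hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Backend:
    name: str
    weight: int
    failures: int = 0        # consecutive failures seen so far
    open_until: float = 0.0  # backend is skipped until this timestamp


class BackendPool:
    """Weighted random selection over backends whose circuit is closed."""

    def __init__(self, backends: List[Backend], failure_threshold: int = 3,
                 cooldown_seconds: float = 30.0, seed: Optional[int] = None):
        self.backends = backends
        self.failure_threshold = failure_threshold
        self.cooldown_seconds = cooldown_seconds
        self.rng = random.Random(seed)

    def pick(self, now: float) -> Optional[Backend]:
        healthy = [b for b in self.backends if now >= b.open_until]
        if not healthy:
            return None  # every circuit is open
        weights = [b.weight for b in healthy]
        return self.rng.choices(healthy, weights=weights, k=1)[0]

    def record_failure(self, backend: Backend, now: float) -> None:
        backend.failures += 1
        if backend.failures >= self.failure_threshold:
            # Trip the breaker: remove the backend from rotation for a while.
            backend.open_until = now + self.cooldown_seconds
            backend.failures = 0

    def record_success(self, backend: Backend) -> None:
        backend.failures = 0


# 10% of traffic to the new model, 90% to the fallback.
new_model = Backend("gpt-4o-deployment", weight=10)
fallback = Backend("gpt-4-deployment", weight=90)
pool = BackendPool([new_model, fallback], seed=0)

# Three consecutive failures at t=0 trip the breaker for the new model.
for _ in range(3):
    pool.record_failure(new_model, now=0.0)

during_cooldown = {pool.pick(now=1.0).name for _ in range(50)}
```

While the breaker is open, all traffic flows to the fallback; once the cooldown elapses the new deployment rejoins the rotation at its configured weight.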

Vendor Comparison: Strategic Position Analysis

Azure APIM’s competitive positioning requires evaluation against four distinct architectural approaches, each optimised for different organisational constraints and strategic priorities.

Azure API Management: Ecosystem Integration

Strengths centre on Microsoft ecosystem depth. Entra ID integration enables conditional access policies, multi-factor authentication, and privileged identity management through native configuration rather than custom policy development. Key Vault integration provides automatic certificate rotation and secrets management with audit trails through Microsoft Defender for Cloud. Application Insights delivers distributed tracing, live metrics, and anomaly detection with zero custom instrumentation. Azure Functions backend integration supports serverless composition with sub-10ms latency for in-region deployments.

Limitations include policy model lock-in and multi-cloud complexity. The XML-based policy language lacks cross-platform portability, leaving an estimated 60-75% of configuration vendor-specific and impossible to migrate to alternative platforms without a complete rewrite. Multi-cloud deployments require self-hosted gateway installation and management on non-Azure infrastructure, with networking complexity increasing proportionally to deployment scale. Cost predictability suffers in high-throughput scenarios where consumption-based pricing creates variable monthly expenses, a pattern we examined in our vendor lock-in analysis.

Best use cases include Microsoft-standardised enterprises requiring hybrid cloud API management, organisations with Entra ID as identity authority needing consistent authentication enforcement, and Azure-native architectures where deep platform integration outweighs multi-cloud flexibility.

AWS API Gateway: Serverless-First Architecture

Strengths focus on serverless economics and AWS integration depth. Per-request pricing starting at approximately £2.80 per million calls eliminates minimum monthly costs, scaling to zero when unused. Lambda integration provides sub-millisecond backend invocation with automatic scaling and built-in X-Ray tracing. CloudFormation and CDK support enable infrastructure-as-code deployment through standard AWS tooling.

Limitations include lifecycle management gaps and enterprise governance constraints. API Gateway lacks a centralised developer portal, API versioning beyond basic stage management, and advanced policy capabilities for transformation or content validation. Multi-account governance requires custom solutions rather than platform-native workspaces. Analytics capabilities stop at CloudWatch Logs without built-in business metrics or monetisation frameworks.

Best use cases include serverless-first architectures with Lambda backends, cost-sensitive workloads with variable traffic patterns, and AWS-standardised organisations prioritising cloud-native services over full lifecycle management.

Google Apigee: API-as-Product Platform

Strengths emphasise analytics sophistication and monetisation capabilities. Apigee’s nine consecutive years as Gartner Leader reflect its API-as-product positioning with revenue share models, developer tier management, and comprehensive business analytics. The platform supports complex traffic mediation scenarios with the industry’s most flexible policy framework and best-in-class caching architecture delivering sub-10ms response times for cached content.

Limitations centre on cost and operational complexity. Entry pricing exceeds Azure APIM Premium, and additional charges for advanced features create total cost of ownership challenges for organisations requiring full capabilities. Multi-cloud deployment requires Apigee hybrid installations with Kubernetes cluster dependencies, increasing operational overhead. A steep learning curve slows time-to-value for teams without prior Apigee experience.

Best use cases include organisations monetising APIs as products, scenarios requiring advanced analytics and developer portal sophistication, and Google Cloud-standardised enterprises seeking best-of-breed API management regardless of cost.

Kong: Open-Source Flexibility

Strengths focus on vendor neutrality and extensibility. Kong’s open-source core enables deployment across any infrastructure without licensing constraints, with community plugins providing broad integration coverage. The platform supports Kubernetes-native deployment through Ingress controllers, service mesh integration, and declarative configuration through Kubernetes CRDs. Multi-cloud portability ranks highest among evaluated platforms with minimal vendor-specific dependencies.

Limitations include enterprise feature gaps and support model constraints. Open-source Kong lacks the workspace management, RBAC sophistication, and analytics depth available in Kong Enterprise or competing platforms. The community support model creates risk for organisations requiring guaranteed SLAs and rapid issue resolution. Self-hosted deployments require operational expertise for high-availability clustering, monitoring, and security hardening.

Best use cases include multi-cloud strategies prioritising portability over deep platform integration, Kubernetes-centric architectures requiring service mesh compatibility, and organisations with strong operational capabilities able to manage self-hosted infrastructure.

Comparison Matrix

| Capability | Azure APIM | AWS Gateway | Apigee | Kong |
|---|---|---|---|---|
| Ecosystem Integration | Deep (Microsoft) | Deep (AWS) | Moderate | Minimal |
| Multi-Cloud Support | Hybrid gateway | Limited | Hybrid gateway | Native |
| API Lifecycle | Comprehensive | Basic | Comprehensive | Moderate |
| Developer Portal | Native | None | Advanced | Enterprise only |
| AI Gateway Features | Native | Limited | Planned | Plugin-based |
| Entry Cost (Monthly) | £144 | £0 + usage | £2,000+ | £0 (OSS) |
| Enterprise Cost (Monthly) | £2,800-7,000 | Variable | £5,000+ | £3,000+ |
| Vendor Lock-in Risk | High | High | Moderate | Low |
| Deployment Speed | Minutes (v2) | Seconds | Hours | Minutes |

Production Case Studies: Enterprise-Scale Validation

The Access Group: SaaS Platform API Governance

Company & Industry: The Access Group, enterprise software provider serving 100,000+ organisations across construction, manufacturing, and professional services sectors.

Scale Context: Managing 450 million API calls annually across 1,200+ API definitions, supporting 500+ distinct customer integrations with strict data isolation requirements.

Challenge: Legacy API infrastructure lacked centralised governance, creating security vulnerabilities through inconsistent authentication enforcement and compliance risks from ungoverned data exposure. Customer integration onboarding required 2-3 weeks manual configuration per tenant.

Solution Implemented: Azure API Management with workspace-based multi-tenancy, Entra ID integration for federated authentication, and self-hosted gateways for customer premise deployments. Deployed APIOps practices with Git-based configuration management and automated policy validation.

Measurable Outcomes:

- Reduced customer integration onboarding from 2-3 weeks to 2 days through standardised API publishing workflows

- Achieved 99.99% API availability through multi-region active-active deployment

- Decreased security incidents by 85% through centralised authentication policy enforcement

- Enabled SOC 2 Type II compliance through comprehensive API audit logging and access controls

Source: Microsoft Customer Story – The Access Group

Backbase: Global Digital Banking Platform

Company & Industry: Backbase, digital banking platform provider serving 150+ financial institutions across 25 countries with regulatory compliance requirements spanning GDPR, PCI-DSS, and regional banking regulations.

Scale Context: Processing millions of banking transactions daily across multi-region deployments, supporting 30+ million end users through customer bank implementations.

Challenge: Required API gateway infrastructure capable of sub-second latency for real-time banking transactions whilst maintaining strict data residency compliance across European, Asian, and North American deployments. Legacy infrastructure couldn’t scale to support rapid customer acquisition.

Solution Implemented: Azure API Management integrated with Grand Central platform using BIAN domain model, self-hosted gateways deployed in customer data centres for data residency compliance, and Azure Kubernetes Service for hosting integration platform connectors with Azure Service Bus for data synchronisation.

Measurable Outcomes:

- Maintained sub-second API latency across 25-country deployment

- Scaled to support 150+ financial institution customers through unified API management layer

- Achieved regional data residency compliance without sacrificing centralised governance

- Reduced implementation time by 6-12 months through built-in SDLC processes and automation

Source: Microsoft Customer Story – Backbase

KPMG Netherlands: Enterprise Integration Standardisation

Company & Industry: KPMG Netherlands, professional services firm providing advisory and audit services to 10,000+ business clients within the Dutch market.

Scale Context: Managing integrations across multiple vendor software systems, applications, and growing number of SaaS solutions within zero-trust security environment requiring continuous identity verification.

Challenge: Lack of centralised API system for application integration requests meant redundant work, slower onboarding, and system inaccuracies. Each integration required custom development without standardised framework, increasing operational security workload and integration complexity.

Solution Implemented: Azure Integration Services with Azure API Management as centralised API framework and Azure Logic Apps for low-code workflow orchestration, deployed through Azure DevOps using infrastructure-as-code practices to ensure consistent integration patterns.

Measurable Outcomes:

- Reduced integration processes from days to near-immediate availability

- Eliminated redundant custom integration development through standardised API framework

- Improved zero-trust security implementation through centralised API governance

- Achieved future-proof integration platform supporting scalable development without additional C# developer hiring

Source: Microsoft Customer Story – KPMG Netherlands

Cost Analysis and TCO Calculation

Azure API Management pricing follows consumption and reserved capacity models across six tiers, each optimised for different workload characteristics and organisational requirements.

Consumption tier charges £2.88 per million calls with no base fee, suitable for development environments and variable workloads averaging under 1 million daily calls. A workload processing 30 million monthly calls incurs £86.40 monthly, but lacks VNet support, custom domains, and SLA guarantees.
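The Consumption tier figure follows directly from the per-million rate. A small sketch, using only the rate quoted above (the function name is illustrative):

```python
# Rate quoted in the text: £2.88 per million calls, no base fee.
CONSUMPTION_RATE_PER_MILLION_GBP = 2.88


def consumption_monthly_cost(calls_per_month: int) -> float:
    """Pure pay-per-call cost with no included allowance."""
    return calls_per_month / 1_000_000 * CONSUMPTION_RATE_PER_MILLION_GBP


cost_30m = consumption_monthly_cost(30_000_000)
print(f"30 million calls: £{cost_30m:.2f}")
```

Because there is no base fee, the cost is strictly linear in call volume, which is why the tier suits spiky or low-volume workloads.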

Developer tier costs £35.93 monthly with unlimited API calls, targeting pre-production environments. The tier supports 10 units of scale but carries no SLA, making it unsuitable for production deployment despite its cost advantages.

Basic v2 provides £144.30 monthly base cost with 100,000 included calls and £1.44 per additional 10,000 calls. A typical workload processing 50 million monthly calls totals £864 monthly (£144 base + £720 overage). The tier delivers 99.95% SLA but lacks VNet integration required for most enterprise scenarios.

Standard v2 costs £670.44 monthly base with 1 million included calls and £0.67 per additional 10,000 calls. This tier represents the enterprise sweet spot: VNet integration, 99.95% SLA, 10-unit scaling, and zone redundancy at one-quarter classic Premium pricing. A workload processing 50 million monthly calls totals £4,012 monthly (£670 base + £3,342 overage), delivering private networking capabilities previously requiring Premium tier investment.

Premium v2 starts at £2,799.50 monthly with 1 million included calls and £2.66 per additional 10,000 calls, targeting high-throughput scenarios requiring 30-unit scaling and enhanced networking features. The tier’s simplified VNet injection eliminates infrastructure prerequisites, which justifies the premium for organisations with complex networking requirements.

Classic Premium maintains £2,799.50 monthly pricing but lacks v2 architecture advantages including faster deployment, zone redundancy, and simplified networking. Organisations on classic Premium should evaluate v2 migration, though multi-region requirements currently necessitate classic tier retention.

Total cost of ownership extends beyond gateway fees. Self-hosted gateway deployment requires compute infrastructure (typically £150-400 monthly per gateway instance), load balancer costs for high availability (£20-50 monthly), and operational overhead for certificate management and version upgrades. Application Insights costs scale with telemetry volume, typically adding £100-500 monthly for enterprise workloads. Backend service consumption (Functions, App Service, AKS) represents the largest TCO component, often exceeding gateway costs by 3-5x for compute-intensive workloads.

Comparative TCO analysis reveals distinct positioning. AWS API Gateway’s consumption model starts lower for small workloads (approximately £2.80 per million calls) but crosses APIM Standard v2 total cost near 200 million monthly calls when factoring in CloudWatch logging, X-Ray tracing, and developer time for lifecycle management feature gaps. Apigee’s entry pricing at £2,000+ monthly positions it above APIM Premium v2 before usage charges, with advanced analytics and monetisation features justifying the premium for API-as-product scenarios. Kong Enterprise costs £3,000+ monthly with predictable subscription pricing, eliminating variable usage charges but requiring operational expertise for self-hosted deployment.

Decision Framework: When to Choose Azure APIM

Azure API Management optimises for specific organisational profiles and technical requirements. The decision framework below provides evaluation criteria across strategic, technical, and operational dimensions.

Choose Azure APIM when:

Your organisation standardises on Microsoft Entra ID for identity governance and requires API authentication policies matching conditional access requirements enforced across other Azure resources. The platform delivers unified identity management without duplicate authentication infrastructure or policy synchronisation complexity.

Hybrid cloud architecture requires API exposure across Azure, on-premises data centres, and edge locations with centralised policy enforcement. Self-hosted gateway capability enables consistent governance regardless of backend location whilst maintaining Azure-based management plane.

Development teams require serverless backend composition through Azure Functions with sub-10ms in-region latency, benefiting from native integration that eliminates gateway-to-backend network traversal overhead present in external function invocations.

AI workload scaling demands token-based rate limiting, semantic caching for LLM responses, and content safety policy enforcement that standard API gateways cannot provide. Native Azure OpenAI integration positions APIM as the only platform offering complete AI governance capabilities without custom policy development.

Cost constraints require VNet integration under £1,000 monthly, where Standard v2’s £670 base fee delivers private networking at one-quarter classic Premium pricing whilst maintaining enterprise SLA guarantees.

Choose AWS API Gateway when:

Serverless-first architecture centres on Lambda functions requiring ultra-low-latency invocation (sub-millisecond) with consumption-based pricing scaling to zero during idle periods. The platform’s native Lambda integration delivers unmatched performance for AWS-standardised workloads.

Minimal lifecycle management requirements prioritise raw throughput and basic throttling over developer portal, API versioning, or advanced policy capabilities. Cost sensitivity favours per-request pricing without base monthly fees for variable workloads averaging under 10 million monthly calls.

Choose Google Apigee when:

API monetisation strategy requires revenue share models, developer tier management, and sophisticated business analytics tracking API usage as product metrics. The platform’s nine consecutive years as Gartner Leader reflect its API-as-product positioning unmatched by infrastructure-focused competitors.

Analytics sophistication demands real-time business metrics, custom dashboard creation, and developer engagement tracking beyond basic gateway telemetry. Apigee’s analytics engine provides the industry’s most comprehensive insights into API consumption patterns and business value generation.

Choose Kong when:

Multi-cloud portability outweighs deep platform integration, requiring vendor-neutral deployment across AWS, Azure, GCP, and on-premises infrastructure without architectural lock-in. Kong’s open-source core enables migration between platforms with minimal configuration changes.

Kubernetes-native architecture requires Ingress controller integration, service mesh compatibility, and declarative configuration through CRDs rather than vendor-specific management interfaces. Kong’s cloud-native design aligns with container orchestration patterns.

Operational expertise exists for self-hosted infrastructure management including high-availability clustering, monitoring stack integration, and security hardening. The platform’s flexibility demands corresponding operational sophistication for production deployment.

Implementation Roadmap: Enterprise Deployment Strategy

Azure APIM deployment follows a phased approach balancing rapid value delivery with operational maturity development.

Phase 1: Foundation and Proof of Value (Months 1-3)

Deploy Standard v2 tier in production region with VNet integration targeting 5-10 high-value APIs requiring centralised governance. Establish Entra ID integration for OAuth 2.0 authentication, configure Application Insights for distributed tracing, and implement basic rate limiting policies protecting backend services from traffic spikes. This phase prioritises rapid deployment over comprehensive governance, demonstrating API management value through measurable latency reduction and security improvement.

Key deliverables include published API definitions with OpenAPI specifications, configured developer portal with self-service API key generation, established monitoring dashboards tracking availability and latency metrics, and documented governance policies defining authentication requirements and throttling limits. Success metrics centre on 99.95% API availability, sub-100ms gateway latency for cached responses, and 50% reduction in authentication-related support incidents through standardised OAuth implementation.

Investment requires Standard v2 base subscription (£670 monthly), Application Insights standard tier (£100 monthly estimated), and 160 engineering hours across platform architecture, API migration, and policy development. This phase establishes foundation for scaled deployment whilst validating APIM’s fit for organisational requirements.

Phase 2: Expanded Adoption and APIOps (Months 4-9)

Scale to 50-100 APIs across business units, implementing workspace-based multi-tenancy for organisational isolation. Establish APIOps practices through Git-based configuration management, automated policy testing in CI/CD pipelines, and infrastructure-as-code deployment using Bicep or Terraform. Deploy self-hosted gateways in on-premises data centres requiring hybrid API exposure with consistent policy enforcement.

Introduce advanced capabilities including GraphQL facade over REST backends for mobile application optimisation, response transformation policies for legacy API modernisation, and custom backend integration for gRPC services. Implement comprehensive monitoring through Application Insights workbooks, custom alert rules for business-critical APIs, and capacity planning dashboards tracking consumption patterns.

This phase requires workspace architecture design, Git repository structure definition, CI/CD pipeline development for automated deployment, and self-hosted gateway installation documentation. Engineering investment increases to 320 hours across platform operations, API onboarding automation, and developer documentation. Additional costs include self-hosted gateway infrastructure (£400 monthly estimated), enhanced Application Insights tier (£300 monthly), and potential scaling to multiple Standard v2 units during traffic growth.

Phase 3: Enterprise Scale and Optimisation (Months 10-18)

Achieve enterprise-wide adoption supporting 200+ APIs with centralised governance through workspace federation model. Implement AI gateway capabilities for Azure OpenAI integration, including token-based rate limiting, semantic caching, and content safety policies. Deploy Premium v2 for workloads requiring 30-unit scaling or complex networking scenarios demanding simplified VNet injection.

Establish API Center integration for design-time governance, creating centralised inventory with compliance scorecards and semantic search capabilities. Implement advanced security patterns through mutual TLS authentication, certificate-based client authentication, and Azure Key Vault integration for automated certificate rotation. Optimise cost through caching strategies, compression policies, and backend connection pooling reducing unnecessary backend invocations.

This phase delivers complete API governance capability with metrics including 95%+ API coverage across organisation, sub-50ms p95 latency through optimised policies and caching, 30% cost reduction through eliminated redundant backend calls, and SOC 2 Type II compliance readiness through comprehensive audit logging. Investment scales to Premium v2 tier potential (£2,800+ monthly), expanded Application Insights retention (£500+ monthly), and 480 engineering hours for AI gateway implementation, advanced security patterns, and enterprise integration.

Organisations should expect a 12-18 month journey from initial deployment to enterprise maturity, with quarterly governance reviews ensuring alignment with evolving requirements and platform capabilities.

Integration Patterns and Security Architecture

Azure APIM’s value proposition extends beyond API exposure to comprehensive integration and security patterns enabling enterprise-grade governance.

Backend integration supports diverse architectural styles including REST APIs with OpenAPI specification import, GraphQL endpoints with both pass-through and synthetic resolver patterns, WebSocket APIs for real-time communication, and gRPC services through self-hosted gateway deployments. The platform handles protocol translation enabling legacy SOAP backend exposure through modern REST facades, XML-to-JSON transformation for client compatibility, and response aggregation combining multiple backend calls into single client responses.

Authentication patterns leverage Entra ID capabilities including OAuth 2.0 and OpenID Connect for modern application integration, SAML 2.0 for enterprise identity federation, certificate-based authentication for service-to-service communication, and managed identities eliminating credential management for Azure service access. Conditional access integration extends identity security by enforcing device compliance, network location restrictions, and multi-factor authentication requirements matching organisational security policies, implementing the zero-trust principles detailed in our Azure cybersecurity guide.

Content transformation policies enable request and response manipulation through XML and JSON transformation, header injection and removal for backend compatibility, query parameter mapping, and payload validation against JSON schemas. Rate limiting implements per-subscription quotas, per-API throttling, rate limit by header patterns for client identification, and burst handling through token bucket algorithms preventing traffic spikes from overwhelming backends.
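
The burst-handling behaviour described above can be illustrated with a minimal token bucket sketch. This is a generic illustration of the algorithm, not APIM's internal implementation; the class name, parameters, and the choice to pass the clock value in explicitly are all assumptions made for the example.

```python
class TokenBucket:
    """Generic token bucket: steady refill rate, bursts allowed up to capacity."""

    def __init__(self, capacity: float, refill_per_second: float) -> None:
        self.capacity = capacity                    # maximum burst size, in requests
        self.refill_per_second = refill_per_second  # sustained rate, in requests/second
        self.tokens = capacity                      # start with a full bucket
        self.last_refill = 0.0                      # timestamp of the last refill

    def allow(self, now: float, cost: float = 1.0) -> bool:
        """Return True if a request costing `cost` tokens may proceed at time `now`."""
        # Top the bucket up for the time elapsed since the last call, capped at capacity.
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now

        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # a gateway would typically answer with HTTP 429 here
```

A bucket with a capacity of 3 and a refill rate of 1 per second absorbs a burst of three requests, rejects the fourth, and admits one more request a second later. Passing `now` explicitly keeps the sketch deterministic; production code would normally read a monotonic clock instead.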

Monitoring integration spans Application Insights for distributed tracing and performance analytics, Azure Monitor for metric collection and alerting, Log Analytics for query-based log analysis, and Event Grid for event-driven monitoring workflows. Custom policies enable webhook notifications for API failures, telemetry enrichment with business context, and correlation ID propagation across distributed systems.
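
Correlation ID propagation can be sketched as follows. The header name `x-correlation-id` and the function name are assumptions chosen for illustration; the idea is simply to reuse an incoming identifier when one exists and generate one otherwise, so that every downstream hop logs the same value.

```python
import uuid

# Assumed header name; organisations often standardise on their own.
CORRELATION_HEADER = "x-correlation-id"


def propagate_correlation_id(incoming_headers: dict[str, str]) -> dict[str, str]:
    """Return headers for the backend call, carrying a correlation ID."""
    # HTTP header names are case-insensitive, so normalise before looking up.
    outgoing = {name.lower(): value for name, value in incoming_headers.items()}

    # Reuse the caller's ID if present; otherwise mint a new one at the edge.
    if not outgoing.get(CORRELATION_HEADER):
        outgoing[CORRELATION_HEADER] = str(uuid.uuid4())
    return outgoing
```

Generating the identifier at the gateway, rather than in each backend, means a single value ties the gateway log entry to every backend trace for the same request.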

Common Implementation Pitfalls and Mitigation

Azure APIM deployment patterns reveal recurring mistakes that compromise security, increase costs, or limit scalability. Understanding these pitfalls enables proactive risk mitigation.

Security misconfigurations include deploying the Developer tier in production despite its lack of an SLA and its scaling limitations; treating APIM as a Web Application Firewall when it provides API-focused security but requires Application Gateway or Front Door upstream for comprehensive protection; and failing to rotate self-hosted gateway credentials within the recommended 30-day window, which creates credential exposure risk. APIM should handle cross-cutting API concerns such as authentication, rate limiting, and monitoring rather than business logic, so resist the temptation to implement complex transformation chains that obscure actual backend functionality.

Operational surprises centre on infrastructure modification timing: scaling operations, VNet configuration changes, and certificate updates require 15-45 minutes, which affects change window planning. Tier upgrades alter static IP addresses, necessitating DNS record updates and firewall rule modifications, whilst poorly configured cooldown periods cause autoscaling instability, with the instance flapping between scale units during variable traffic.
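
The flapping problem can be made concrete with a small decision function. This is a sketch under stated assumptions, not Azure's autoscale engine: the function name, the 30-minute default cooldown, and the 70%/40% thresholds are hypothetical values picked for illustration. Two ideas do the work: a cooldown that ignores load until the previous scaling operation has had time to take effect, and a gap between the scale-out and scale-in thresholds so that load hovering around one value does not trigger alternating decisions.

```python
def next_unit_count(
    current_units: int,
    load_percent: float,
    now: float,
    last_scaled_at: float,
    *,
    cooldown_seconds: float = 1800.0,  # hypothetical: 30 minutes
    scale_out_above: float = 70.0,     # hypothetical threshold
    scale_in_below: float = 40.0,      # hypothetical threshold
    min_units: int = 1,
    max_units: int = 10,
) -> int:
    """Decide the unit count for the next evaluation window."""
    # Inside the cooldown window, hold steady whatever the load says.
    if now - last_scaled_at < cooldown_seconds:
        return current_units

    if load_percent > scale_out_above:
        return min(current_units + 1, max_units)
    if load_percent < scale_in_below:
        return max(current_units - 1, min_units)

    # Between the two thresholds: no change, which damps oscillation.
    return current_units
```

Because scaling operations can themselves take 15-45 minutes, a cooldown shorter than that window risks stacking a second decision on top of one that has not yet completed.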

Policy complexity accumulates when organisations implement extensive transformation logic in gateway policies rather than delegating to backend services. Each transformation operation adds 5-50ms of latency, multi-layer processing compounds debugging complexity, and the XML-based policy syntax creates vendor lock-in because it lacks cross-platform portability. Establish policy governance early: limiting gateway policies to authentication, rate limiting, and routing whilst keeping transformation logic in backend services preserves architectural flexibility.
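
One way to enforce such a governance rule is a lint step in the deployment pipeline. The sketch below assumes a team-defined allowlist (the `ALLOWED_POLICIES` set and the function name are hypothetical) and checks only the top-level policy elements in each section of a policy document. It uses Python's strict XML parser, so policy files containing embedded expressions that are not well-formed XML would need pre-processing first.

```python
import xml.etree.ElementTree as ET

# Policy sections that make up an APIM policy document.
SECTIONS = ("inbound", "backend", "outbound", "on-error")

# Hypothetical team allowlist: authentication, rate limiting, and routing only.
ALLOWED_POLICIES = {
    "base",
    "validate-jwt",
    "rate-limit",
    "rate-limit-by-key",
    "quota",
    "quota-by-key",
    "set-backend-service",
    "forward-request",
}


def disallowed_policies(policy_xml: str) -> list[str]:
    """Return the sorted names of top-level policies outside the allowlist."""
    root = ET.fromstring(policy_xml)
    found = set()
    for section in SECTIONS:
        section_element = root.find(section)
        if section_element is None:
            continue
        # Inspect direct children only, so nested configuration elements
        # (for example inside validate-jwt) are not flagged.
        for policy in section_element:
            if policy.tag not in ALLOWED_POLICIES:
                found.add(policy.tag)
    return sorted(found)
```

Run against a policy file in CI, a non-empty result can fail the build, which pushes transformation logic back towards the backend service before it reaches the gateway.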

Cost overruns emerge from underestimated consumption charges where organisations budget for base tier costs whilst ignoring per-call charges that dominate total cost above 10 million monthly calls. Self-hosted gateway deployment requires compute infrastructure (VMs or containers), load balancer configuration, and certificate management adding 30-50% operational overhead beyond gateway licensing. Application Insights costs scale with telemetry volume, frequently exceeding initial estimates by 2-3x without sampling configuration and retention policies.

Adopting APIOps practices late creates technical debt compounding with each manual API deployment. Git-based configuration management, automated policy testing, and infrastructure-as-code deployment should begin in Phase 1 rather than retrofitting governance after establishing manual processes. The migration path from manual to automated management typically requires 3-6 months organisational change management beyond technical implementation.

Future Direction: AI Gateway and Federation

Azure APIM’s roadmap centres on two strategic themes: AI governance capabilities and federated management models enabling enterprise-scale API programmes.

AI gateway evolution positions APIM as governance layer for agentic AI ecosystems through Model Context Protocol server support enabling policy enforcement across AI agents, tools, and models alongside traditional APIs. Token-based rate limiting addresses LLM cost management where traditional request throttling proves insufficient, whilst semantic caching recognises equivalent prompts regardless of phrasing reducing redundant LLM invocations. Content safety policies integrate Azure AI Content Safety for automated moderation, blocking harmful content before reaching backend models with configurable severity thresholds across violence, hate speech, and sexual content categories. Native Azure OpenAI integration simplifies LLM backend management through declarative configuration, load balancing across deployments, and circuit breaking during regional outages.
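
The idea behind semantic caching can be sketched in a few lines. This is an illustration of the general technique, not APIM's implementation: the class name, the 0.9 similarity threshold, and the in-memory list are assumptions, and the embedding vectors are expected to come from whichever embedding model the caller already uses. Two prompts count as equivalent when the cosine similarity of their embeddings meets the threshold.

```python
import math
from typing import Optional, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """In-memory cache keyed by prompt embedding rather than exact text."""

    def __init__(self, threshold: float = 0.9) -> None:
        self.threshold = threshold  # hypothetical similarity cut-off
        self._entries: list[tuple[list[float], str]] = []

    def put(self, embedding: Sequence[float], response: str) -> None:
        self._entries.append((list(embedding), response))

    def get(self, embedding: Sequence[float]) -> Optional[str]:
        """Return the cached response for the most similar prompt, if close enough."""
        best_score, best_response = 0.0, None
        for stored, response in self._entries:
            score = cosine_similarity(embedding, stored)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None
```

A cache hit avoids an LLM invocation entirely, so the threshold trades cost savings against the risk of returning an answer to a subtly different question. The linear scan is adequate for a sketch; larger caches would normally use a vector index.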

Workspaces and federated management represent the organisational model for enterprise-scale API governance, enabling decentralised team ownership with centralised oversight through shared gateways reducing infrastructure costs. API Center complements APIM as design-time governance layer providing centralised inventory, compliance scorecards, and semantic search for API discovery across federated deployments. The integration creates complete lifecycle platform spanning design, deployment, and runtime governance.

Multi-protocol support continues maturing, with GraphQL support including pass-through and synthetic resolvers, WebSocket APIs for real-time communication, and gRPC support through self-hosted gateways, with gRPC on managed gateways expected as a delivery priority. The send-service-bus-message policy represents an early step toward native event-driven API patterns, with AsyncAPI emerging as the standard for asynchronous API definitions.

Platform v2 completion priorities include multi-region deployment capability addressing current limitation forcing geographic distribution through multiple instances, Git-based configuration and backup/restore enabling infrastructure-as-code workflows, and automated migration from classic tiers reducing manual rebuild requirements for established deployments. These features target delivery through 2025-2026 based on Microsoft’s public roadmap.

Strategic Recommendations and Implementation Priorities

Azure API Management in 2026 is a fundamentally different platform from the one available even two years earlier. The v2 tiers reshape the cost-capability equation, making VNet integration accessible at Standard pricing whilst eliminating the networking complexity that plagued Premium deployments. AI gateway capabilities create genuine strategic value for organisations scaling generative AI, where token governance, semantic caching, and MCP server support exceed competitors’ current capabilities.

For organisations standardised on Microsoft ecosystem requiring full-lifecycle API management with hybrid deployment capability, Azure APIM delivers optimal integration depth through Entra ID authentication, Key Vault secrets management, and Application Insights observability without custom policy development. The platform scales to genuine enterprise workloads demonstrated through The Access Group’s 450 million annual API calls across 1,200+ definitions and Backbase’s 25-country deployment serving 150+ financial institutions.

The decision framework prioritises Azure APIM for Microsoft-invested organisations, AWS API Gateway for pure serverless architectures, Apigee for API-as-product strategies requiring advanced monetisation, and Kong for multi-cloud portability requirements. The configuration lock-in that 60-75% of enterprises discover too late represents the primary strategic risk, mitigated by keeping business logic in backend services, using OpenAPI as the source of truth, and adopting APIOps from deployment inception.

For enterprises building an API strategy today, APIM’s trajectory toward AI governance moves the choice beyond an API gateway decision into a platform bet on how AI workloads will be managed across the enterprise. Organisations should begin with a Standard v2 deployment targeting high-value APIs, establish APIOps practices enabling scaled adoption, and evaluate AI gateway capabilities against LLM governance requirements. The platform’s hybrid capabilities enable consistent policy enforcement regardless of backend location, delivering the architectural flexibility essential for a multi-year technology strategy whilst maintaining deep Microsoft ecosystem integration as a competitive advantage.
