The artificial intelligence industry has reached a pivotal moment with the emergence of the Model Context Protocol (MCP), an open standard that promises to solve one of the most persistent challenges in AI development: connecting intelligent systems to external data sources and tools. Recent research reveals how this protocol is rapidly transforming enterprise AI implementations and development workflows across major platforms.

The Protocol That Changes Everything

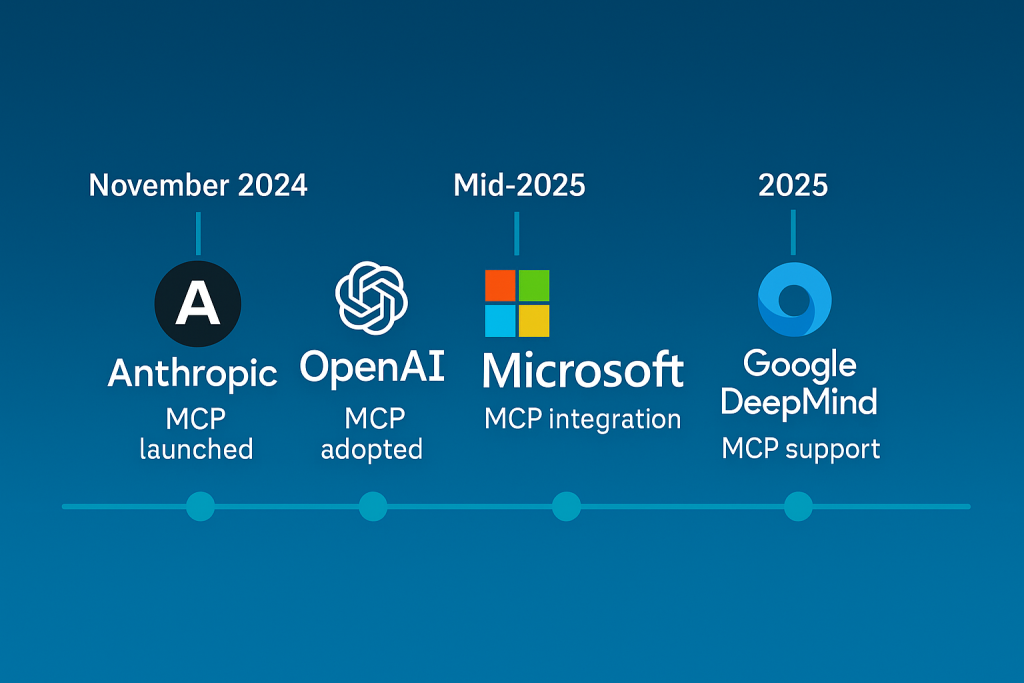

MCP represents a fundamental shift in how AI applications access external resources. Introduced by Anthropic in November 2024, the protocol has achieved remarkable industry adoption in an unusually short timeframe, with major players including Microsoft, OpenAI, and Google DeepMind integrating support across their platforms.

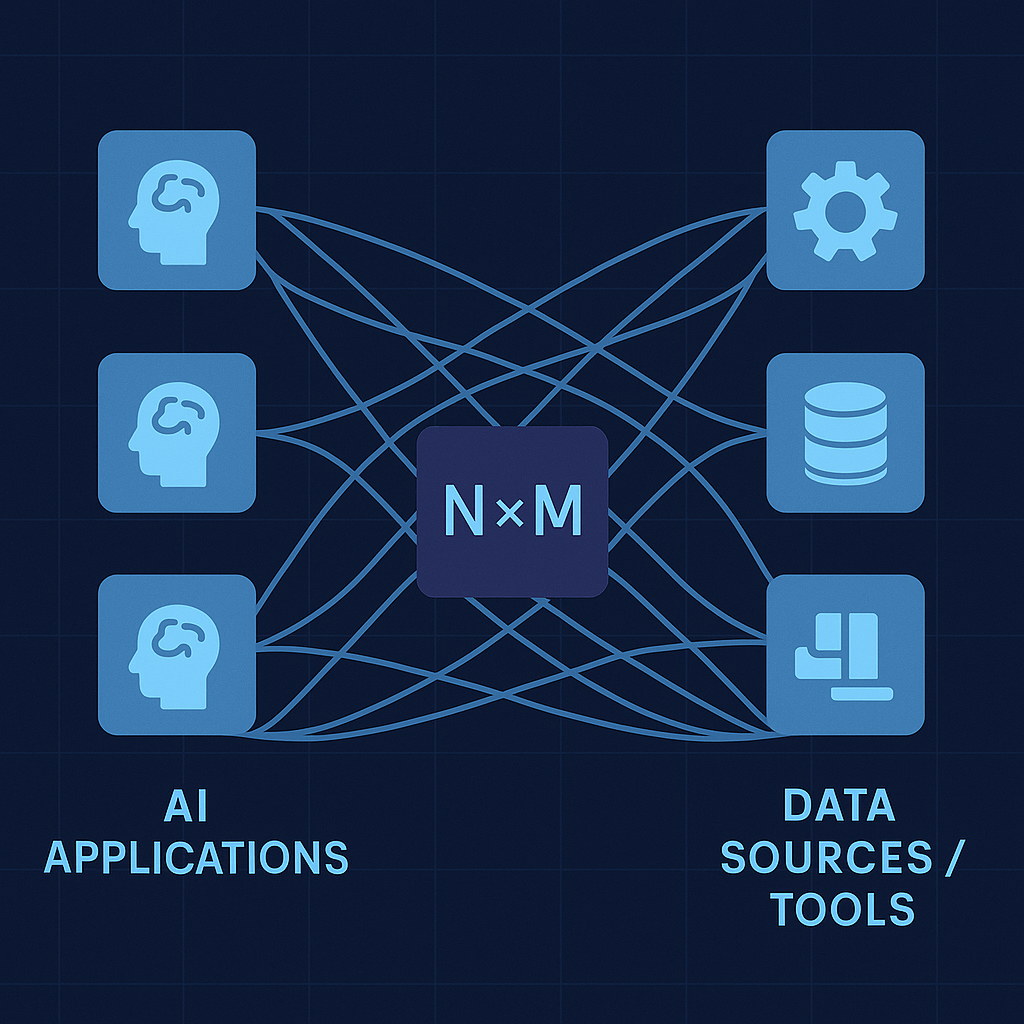

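The protocol’s core value proposition addresses the notorious “N×M problem”, in which each AI application previously required a custom integration with every data source or tool. Connecting N applications to M tools therefore meant building and maintaining N×M bespoke connectors. That cost grows multiplicatively as organisations scale their AI implementations, and it led to maintenance nightmares and vendor lock-in. With a shared protocol, each application implements an MCP client once and each tool exposes an MCP server once, which reduces the work to roughly N+M integrations.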
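The short Python sketch below makes the arithmetic concrete by counting integrations under both models. It is an idealised count that treats every connector as one unit of work and ignores differences in effort between integrations. The function names are illustrative and are not part of any MCP SDK.

```python
def integrations_without_standard(num_apps: int, num_tools: int) -> int:
    """Bespoke point-to-point model: one connector per (application, tool) pair."""
    return num_apps * num_tools


def integrations_with_mcp(num_apps: int, num_tools: int) -> int:
    """Shared-protocol model: one MCP client per application plus one MCP server per tool."""
    return num_apps + num_tools


for apps, tools in [(3, 5), (10, 20), (50, 100)]:
    print(
        f"{apps} apps x {tools} tools: "
        f"{integrations_without_standard(apps, tools)} bespoke connectors vs "
        f"{integrations_with_mcp(apps, tools)} MCP components"
    )
```

At 50 applications and 100 tools, the gap is 5,000 connectors versus 150 components, which is the whole scaling argument in one line.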

Key Technical Architecture:

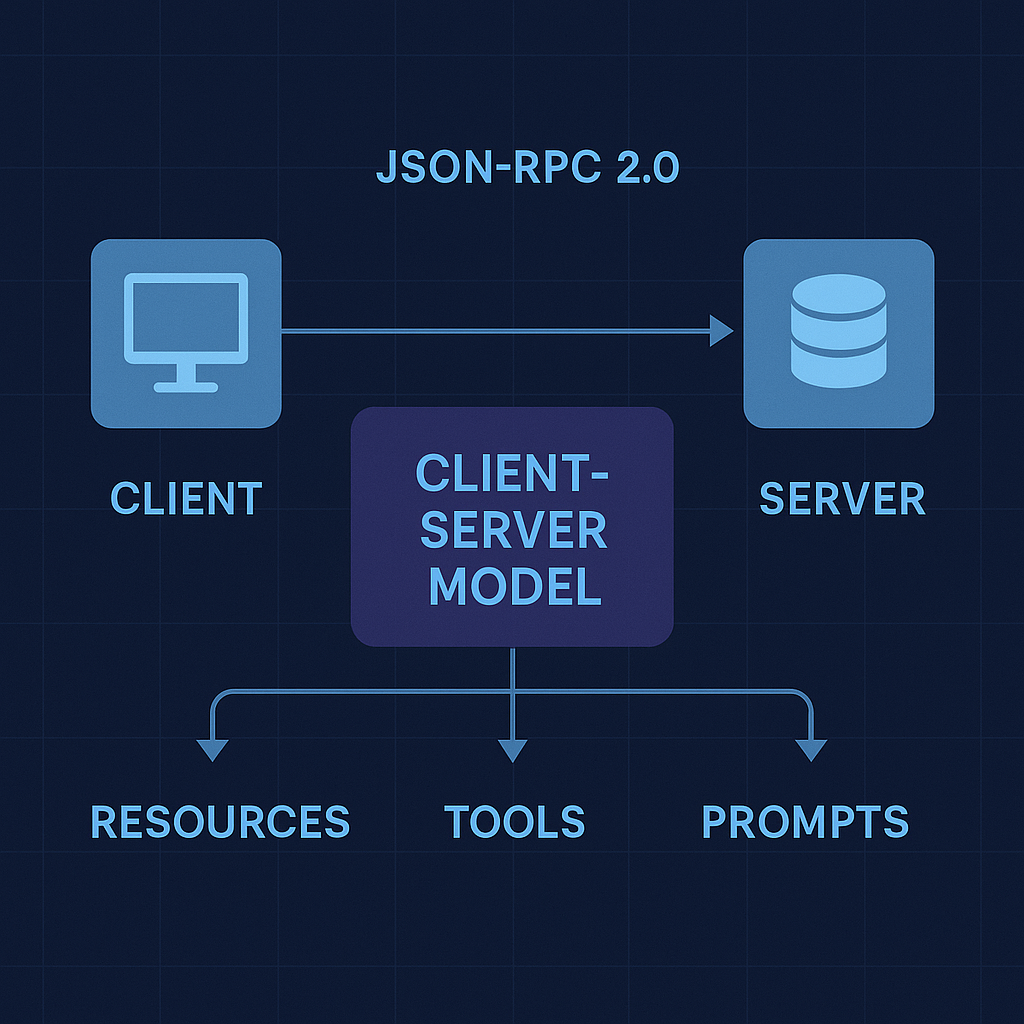

- Client-server model built on JSON-RPC 2.0

- Three primary interfaces: Resources, Tools, and Prompts

- Pluggable transport layer: stdio for local servers and Streamable HTTP for remote ones (replacing the earlier HTTP with Server-Sent Events transport)

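- Standardised session management, starting with an initialisation handshake that negotiates protocol version and capabilities, plus JSON-RPC error handling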
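The sketch below shows what these pieces look like on the wire, using only the Python standard library. It builds JSON-RPC 2.0 requests and notifications for real MCP method names (`initialize`, `notifications/initialized`, `tools/call`). It frames them the way the stdio transport expects, with one JSON message per line, and turns an error response into an exception. Several details are assumptions for illustration: the tool name `get_weather`, its arguments, and the client name are hypothetical. The protocol version string is one published revision; a real client negotiates it during `initialize`.

```python
from __future__ import annotations

import itertools
import json
from typing import Any

# Monotonic request ids; MCP requires ids to be unique within a session.
_next_id = itertools.count(1)


def make_request(method: str, params: dict[str, Any] | None = None) -> dict[str, Any]:
    """Build a JSON-RPC 2.0 request; the server must answer with the same id."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_notification(method: str, params: dict[str, Any] | None = None) -> dict[str, Any]:
    """Build a notification: it has no id, so the receiver sends no response."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        message["params"] = params
    return message


def frame_for_stdio(message: dict[str, Any]) -> str:
    """stdio transport: each message is one line of JSON with no embedded newlines."""
    return json.dumps(message, separators=(",", ":")) + "\n"


def unwrap_response(message: dict[str, Any]) -> Any:
    """Return a response's result, or raise if the server sent an error object."""
    if "error" in message:
        error = message["error"]
        raise RuntimeError(f"JSON-RPC error {error['code']}: {error['message']}")
    return message["result"]


# Handshake, then a tool invocation (tool name and arguments are hypothetical).
init = make_request("initialize", {
    "protocolVersion": "2025-03-26",
    "capabilities": {},
    "clientInfo": {"name": "example-client", "version": "0.1.0"},
})
initialized = make_notification("notifications/initialized")
call = make_request("tools/call", {"name": "get_weather", "arguments": {"city": "Oslo"}})

for msg in (init, initialized, call):
    print(frame_for_stdio(msg), end="")
```

In a real client, these lines would be written to the server process's stdin. Responses would be read back line by line and matched to their requests by `id`.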

Microsoft’s Strategic Embrace

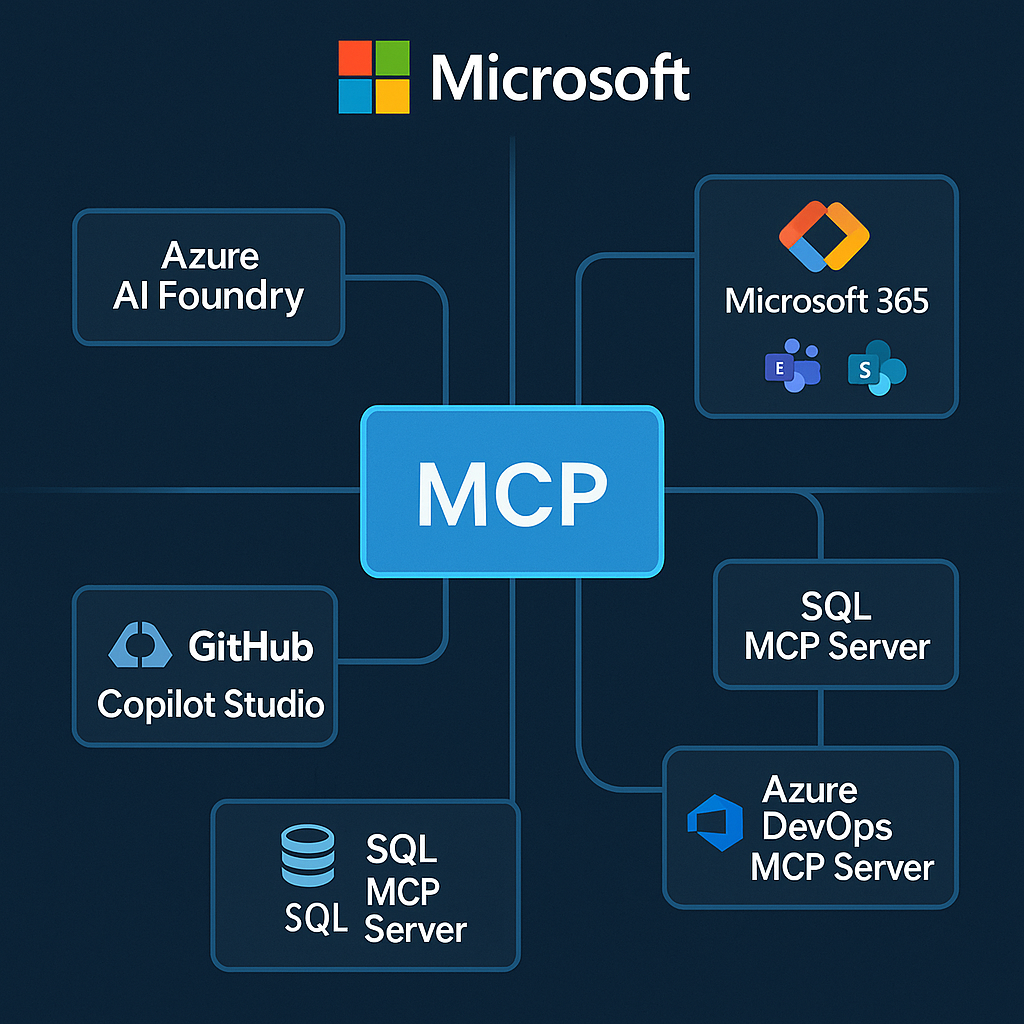

Perhaps the most significant development in MCP adoption comes from Microsoft’s comprehensive integration strategy. At Microsoft Build 2025, CEO Satya Nadella announced MCP as a first-class standard across Windows 11, GitHub, Copilot Studio, and Azure AI Foundry, positioning the protocol as central to Microsoft’s “open-by-design” AI ecosystem vision.

Azure AI Foundry Integration: The platform now supports direct MCP server connections without requiring custom function development. Agents automatically discover and import available tools from connected servers, with dynamic updates as underlying systems evolve. This approach dramatically reduces development overhead while maintaining enterprise security standards.
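How Foundry implements discovery internally is not covered here, but the protocol-level pattern it relies on can be sketched. An MCP client calls `tools/list`, following `nextCursor` pagination, to learn what a server offers. A server that declares the `tools.listChanged` capability sends `notifications/tools/list_changed` when that set changes, which prompts the client to fetch the list again. In the sketch below, `send_request` is a hypothetical stand-in for the client's transport, and the tool definitions are invented for illustration.

```python
from __future__ import annotations

from typing import Any, Callable, Dict

# Hypothetical transport hook: takes (method, params) and returns the JSON-RPC "result".
SendRequest = Callable[[str, Dict[str, Any]], Dict[str, Any]]


class ToolRegistry:
    """Client-side cache of the tools one MCP server currently offers."""

    def __init__(self, send_request: SendRequest) -> None:
        self._send = send_request
        self.tools: dict[str, dict[str, Any]] = {}

    def refresh(self) -> None:
        """Fetch every page of tools/list and replace the cached definitions."""
        discovered: dict[str, dict[str, Any]] = {}
        cursor = None
        while True:
            params = {"cursor": cursor} if cursor else {}
            result = self._send("tools/list", params)
            for tool in result.get("tools", []):
                discovered[tool["name"]] = tool
            cursor = result.get("nextCursor")
            if not cursor:
                break
        self.tools = discovered

    def on_notification(self, message: dict[str, Any]) -> None:
        """Re-fetch when the server signals that its tool set has changed."""
        if message.get("method") == "notifications/tools/list_changed":
            self.refresh()


# Illustrative fake server whose tool set changes at runtime.
server_tools = [{"name": "search_docs", "inputSchema": {"type": "object"}}]


def fake_send(method: str, params: dict[str, Any]) -> dict[str, Any]:
    assert method == "tools/list"
    return {"tools": list(server_tools)}


registry = ToolRegistry(fake_send)
registry.refresh()
server_tools.append({"name": "create_ticket", "inputSchema": {"type": "object"}})
registry.on_notification({"jsonrpc": "2.0", "method": "notifications/tools/list_changed"})
print(sorted(registry.tools))
```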

Copilot Studio Enhancement: MCP integration enables makers to connect AI agents to existing knowledge sources and APIs through a simplified interface. The platform supports both Microsoft’s official MCP servers and custom implementations, creating a plug-and-play environment for enterprise AI deployment.

Official Microsoft MCP Servers:

- Azure MCP Server: Natural language infrastructure management

- Microsoft 365 MCP Server: SharePoint, Teams, and productivity tool integration

- SQL MCP Server: Conversational database access with intelligent query generation

- Azure DevOps MCP Server: Development lifecycle tool connectivity

Industry-Wide Adoption Signals

The protocol’s rapid acceptance extends far beyond Microsoft’s ecosystem. OpenAI officially adopted MCP in March 2025, integrating the standard across ChatGPT desktop applications, the Agents SDK, and the Responses API. Sam Altman described this adoption as a crucial step toward standardising AI tool connectivity.

Google DeepMind’s Demis Hassabis confirmed upcoming MCP support in Gemini models, characterising the protocol as “rapidly becoming an open standard for the AI agentic era.” This endorsement from three of the industry’s largest AI providers suggests MCP has achieved critical mass for widespread adoption.

Development Tool Integration: Major development environments have embraced MCP to enhance AI-assisted coding workflows:

- Visual Studio Code: Natural language codebase queries and documentation generation

- Zed Editor: Real-time project context for AI coding assistants

- Replit: Integrated development and deployment workflows

- Sourcegraph: Code intelligence and search capabilities

Practical Implementation Realities

Research into real-world MCP deployments reveals both significant benefits and important considerations for enterprise adoption.

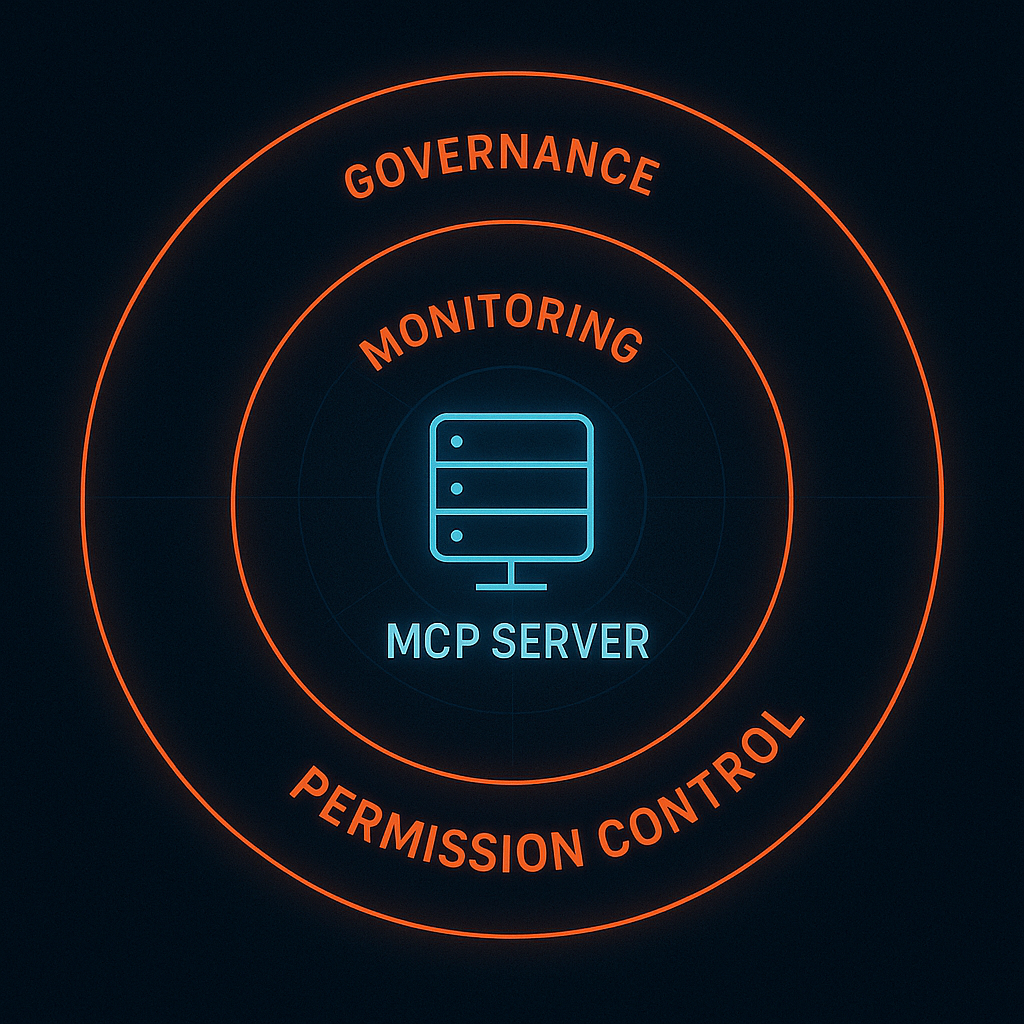

Security Architecture: MCP implementations support OAuth-based authorisation and can respect existing identity management systems. Microsoft’s servers, for example, honour Microsoft Entra ID (formerly Azure Active Directory) permissions, ensuring AI agents can only access data that users would normally be authorised to view. However, organisations must still establish governance frameworks around approved MCP servers and configure each integration according to the principle of least privilege.
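One way to express least privilege at the tool level is to check the calling user's delegated permissions before every tool call, rather than relying on the credentials the server itself holds. The Python sketch below assumes each tool has been mapped to a set of required OAuth scopes. The mapping, the `ToolPolicy` type, and the scope strings (modelled loosely on Microsoft Graph-style names) are illustrative assumptions and are not part of MCP or any Microsoft server.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical mapping from an MCP tool to the OAuth scopes it needs."""
    name: str
    required_scopes: frozenset[str]


class PermissionDenied(Exception):
    pass


def authorize_tool_call(tool: ToolPolicy, user_scopes: set[str]) -> None:
    """Allow the call only if the user's own token carries every required scope.

    Checking the user's delegated scopes, rather than the server's broader
    credentials, is what stops the server acting as a confused deputy.
    """
    missing = tool.required_scopes - user_scopes
    if missing:
        raise PermissionDenied(f"{tool.name} requires {sorted(missing)}")


read_files = ToolPolicy("read_files", frozenset({"Files.Read"}))
write_files = ToolPolicy("write_files", frozenset({"Files.ReadWrite"}))
user_scopes = {"Files.Read", "User.Read"}

authorize_tool_call(read_files, user_scopes)  # allowed: required scope present
try:
    authorize_tool_call(write_files, user_scopes)
except PermissionDenied as exc:
    print("blocked:", exc)
```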

Performance Considerations: Successful deployments require careful attention to server performance, particularly when serving multiple concurrent AI applications. Azure’s managed MCP servers include built-in scaling and monitoring capabilities, but custom implementations need robust resource management and error handling strategies.
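For custom servers, a common pattern is to cap how many tool invocations run at once and to put a deadline on each one. That way a slow backend degrades gracefully instead of exhausting resources. The asyncio sketch below does both. The limits, the `BoundedToolExecutor` name, and the sample tools are assumptions chosen for illustration. Failures are returned as a `tools/call`-style result with `isError: true`, which is how MCP reports tool-level errors to the model, rather than as protocol errors.

```python
from __future__ import annotations

import asyncio
from typing import Any, Awaitable, Callable


def _text_result(text: str, is_error: bool) -> dict[str, Any]:
    """Shape a value like an MCP tools/call result."""
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


class BoundedToolExecutor:
    """Caps concurrent tool runs and applies a per-call timeout (illustrative)."""

    def __init__(self, max_concurrent: int, timeout_s: float) -> None:
        # Construct inside a running event loop (see main) for older Python versions.
        self._slots = asyncio.Semaphore(max_concurrent)
        self._timeout_s = timeout_s

    async def run(self, handler: Callable[..., Awaitable[Any]], **arguments: Any) -> dict[str, Any]:
        async with self._slots:  # waits here while max_concurrent calls are in flight
            try:
                value = await asyncio.wait_for(handler(**arguments), self._timeout_s)
            except asyncio.TimeoutError:
                return _text_result(f"Tool timed out after {self._timeout_s}s", True)
            except Exception as exc:  # surface tool failures to the model, not the transport
                return _text_result(f"Tool failed: {exc}", True)
        return _text_result(str(value), False)


async def slow_lookup(query: str) -> str:
    await asyncio.sleep(0.05)  # stands in for a database or API call
    return f"results for {query}"


async def hanging_lookup() -> str:
    await asyncio.sleep(10)  # simulates a backend that never answers in time
    return "never returned"


async def main() -> list[dict[str, Any]]:
    executor = BoundedToolExecutor(max_concurrent=2, timeout_s=0.5)
    calls = [executor.run(slow_lookup, query=f"q{i}") for i in range(4)]
    calls.append(executor.run(hanging_lookup))
    return await asyncio.gather(*calls)


results = asyncio.run(main())
for result in results:
    print(result["isError"], result["content"][0]["text"])
```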

Development Workflow Integration: The most successful MCP implementations integrate naturally with existing development practices. For Azure-based teams, this typically involves building on Azure CLI integration, working with existing Azure Resource Manager (ARM) templates, and reusing familiar authentication patterns.

Security Landscape and Risk Mitigation

Recent security research has identified several important considerations for MCP deployments. The protocol’s flexibility, while enabling powerful integrations, also introduces potential attack vectors that organisations must address.

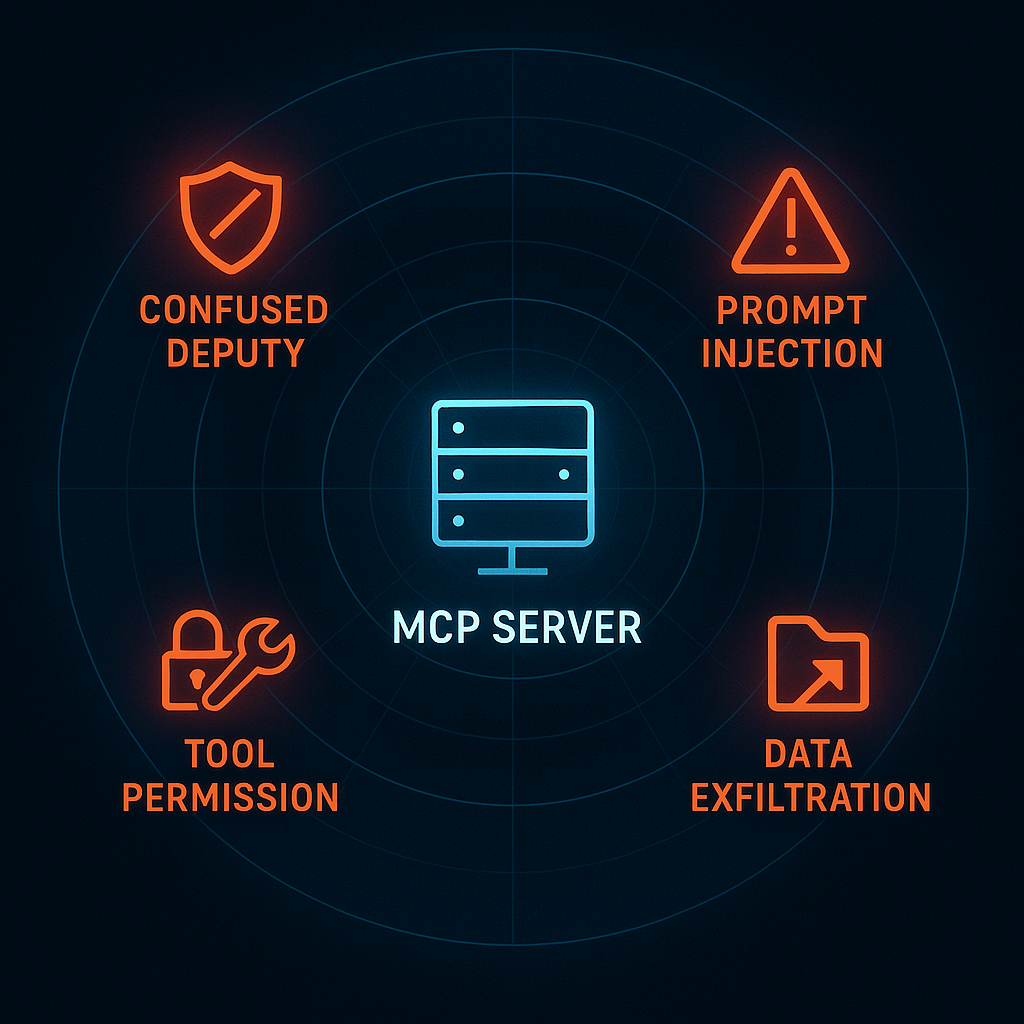

Key Security Risks:

- Confused deputy problems, where an MCP server uses its own broader privileges to perform actions the requesting user is not permitted to take

- Prompt injection vulnerabilities through malicious server responses

- Tool permission combinations that could enable data exfiltration

- Trust relationships with third-party MCP server providers
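A static review of which tools an agent can combine can partly catch the data-exfiltration risk before deployment. The sketch below flags every pairing of a tool that reads private data with a tool that can send data outside the organisation, including a single tool that does both. The capability labels are hypothetical tags a governance team would assign. The check does not detect prompt injection itself. It only highlights configurations where an injected instruction could move data out.

```python
from __future__ import annotations

# Hypothetical capability tags a governance team might assign to each tool.
READS_PRIVATE = "reads_private_data"
SENDS_EXTERNAL = "sends_external"


def exfiltration_paths(tool_capabilities: dict[str, set[str]]) -> list[tuple[str, str]]:
    """List (reader, sender) pairs that together could move private data out.

    A tool tagged with both capabilities appears paired with itself.
    """
    readers = sorted(t for t, caps in tool_capabilities.items() if READS_PRIVATE in caps)
    senders = sorted(t for t, caps in tool_capabilities.items() if SENDS_EXTERNAL in caps)
    return [(reader, sender) for reader in readers for sender in senders]


agent_tools = {
    "read_mailbox": {READS_PRIVATE},
    "query_crm": {READS_PRIVATE},
    "http_post": {SENDS_EXTERNAL},
    "get_weather": set(),
}

for reader, sender in exfiltration_paths(agent_tools):
    print(f"review before enabling together: {reader} -> {sender}")
```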

Mitigation Strategies:

- Implement comprehensive server vetting and approval processes

- Use OAuth and granular permission schemes

- Deploy monitoring and audit logging for MCP interactions

- Establish clear governance around approved server catalogues
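The catalogue and audit-logging mitigations can be combined in a thin wrapper around every tool invocation. In the sketch below, `APPROVED_SERVERS`, the server names, and the injected `call` function are hypothetical. The wrapper rejects servers outside the allowlist and emits one structured JSON log line per attempt. It records argument names rather than values, because tool arguments may contain sensitive data.

```python
from __future__ import annotations

import json
import logging
import time
from typing import Any, Callable

logging.basicConfig(level=logging.INFO, format="%(message)s")
audit_log = logging.getLogger("mcp.audit")

# Hypothetical allowlist maintained by a governance team.
APPROVED_SERVERS = frozenset({"docs-search", "ticketing"})


def audited_call(
    server: str,
    tool: str,
    arguments: dict[str, Any],
    user: str,
    call: Callable[[str, dict[str, Any]], dict[str, Any]],
) -> dict[str, Any]:
    """Run a tool call only on approved servers and write one audit record per attempt."""
    record = {"server": server, "tool": tool, "user": user, "argument_keys": sorted(arguments)}
    if server not in APPROVED_SERVERS:
        audit_log.warning(json.dumps({"event": "blocked", **record}))
        raise PermissionError(f"MCP server {server!r} is not in the approved catalogue")
    started = time.monotonic()
    result = call(tool, arguments)
    audit_log.info(json.dumps({
        "event": "tool_call",
        **record,
        "is_error": bool(result.get("isError")),
        "duration_ms": round((time.monotonic() - started) * 1000, 1),
    }))
    return result


def fake_call(tool: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Illustrative stand-in for a real MCP client session."""
    return {"content": [{"type": "text", "text": f"{tool} ok"}], "isError": False}


audited_call("docs-search", "search_docs", {"query": "VPN"}, "alice@example.com", fake_call)
try:
    audited_call("unvetted-server", "export_all", {}, "alice@example.com", fake_call)
except PermissionError as exc:
    print("rejected:", exc)
```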

Emerging Use Cases and Applications

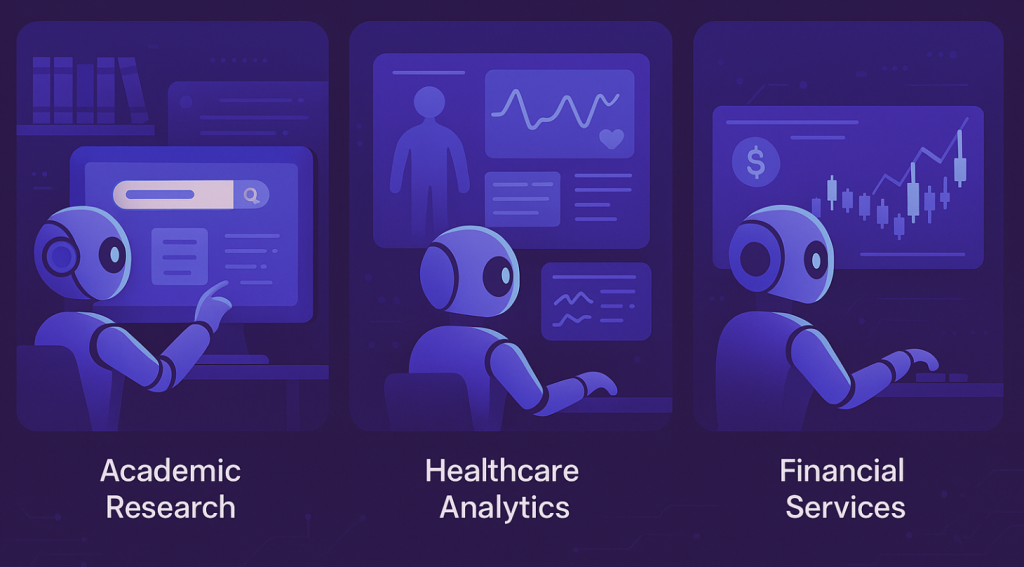

Research reveals MCP’s impact extending across diverse industry applications, from academic workflows to enterprise automation.

Academic Research Integration: Universities and research institutions are leveraging MCP to connect AI assistants with reference management systems such as Zotero, enabling semantic searches across literature libraries and automated generation of literature reviews.

Healthcare Analytics: Integration with remote patient monitoring solutions demonstrates MCP’s potential in healthcare data ecosystems, connecting AI models to real-time medical data while maintaining compliance requirements.

Financial Services: Early adopters in finance are using MCP to connect AI systems with trading platforms, risk management tools, and regulatory reporting systems, creating more responsive and context-aware financial applications.

Future Trajectory and Strategic Implications

The protocol’s trajectory suggests MCP proficiency will become increasingly valuable for development teams. Organisations investing in MCP infrastructure today position themselves to leverage future AI innovations without vendor lock-in concerns.

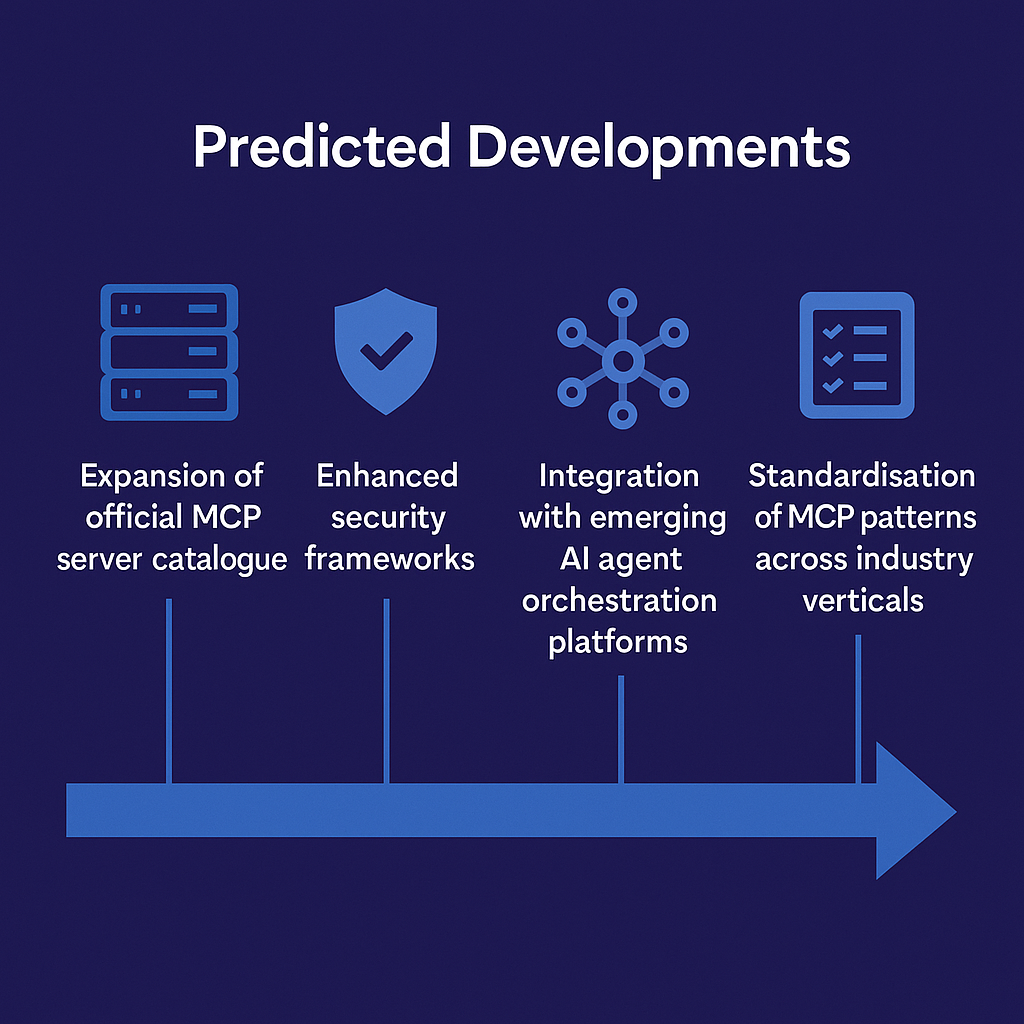

Predicted Developments:

- Expansion of official MCP server catalogues from major cloud providers

- Enhanced security frameworks and enterprise governance tools

- Integration with emerging AI agent orchestration platforms

- Standardisation of MCP patterns across industry verticals

The protocol’s potential extends to sophisticated autonomous workflows where AI agents coordinate across multiple systems. Future implementations may enable AI assistants to analyse business data, generate insights, create presentations, and coordinate meetings through standardised MCP interfaces.
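To coordinate across several systems, a host must hold connections to several MCP servers at once, and different servers may expose tools with the same name. The sketch below aggregates tools from multiple servers under namespaced names and routes each call back to the server that owns it. The `server__tool` naming scheme is an arbitrary convention for this example, since hosts handle collisions in their own ways. Each server's `send` callable is a hypothetical stand-in for a real client session.

```python
from __future__ import annotations

from typing import Any, Callable, Dict

# Hypothetical per-server hook: sends (method, params) and returns the "result".
ServerSend = Callable[[str, Dict[str, Any]], Dict[str, Any]]
SEPARATOR = "__"  # arbitrary namespacing convention for this sketch


class MultiServerHost:
    """Aggregates tools from several MCP servers and routes calls to their owners."""

    def __init__(self, servers: dict[str, ServerSend]) -> None:
        self._servers = servers
        self._routes: dict[str, tuple[str, str]] = {}

    def discover(self) -> list[str]:
        """List every server's tools under 'server__tool' names to avoid collisions."""
        self._routes.clear()
        for server_name, send in self._servers.items():
            for tool in send("tools/list", {}).get("tools", []):
                qualified = f"{server_name}{SEPARATOR}{tool['name']}"
                self._routes[qualified] = (server_name, tool["name"])
        return sorted(self._routes)

    def call(self, qualified_name: str, arguments: dict[str, Any]) -> dict[str, Any]:
        """Strip the namespace and forward a tools/call to the owning server."""
        server_name, tool_name = self._routes[qualified_name]
        send = self._servers[server_name]
        return send("tools/call", {"name": tool_name, "arguments": arguments})


def fake_server(tool_names: list[str]) -> ServerSend:
    """Illustrative stand-in for a connected server."""
    def send(method: str, params: dict[str, Any]) -> dict[str, Any]:
        if method == "tools/list":
            return {"tools": [{"name": n, "inputSchema": {"type": "object"}} for n in tool_names]}
        return {"content": [{"type": "text", "text": f"ran {params['name']}"}], "isError": False}
    return send


host = MultiServerHost({
    "analytics": fake_server(["run_query", "search"]),
    "calendar": fake_server(["create_event", "search"]),
})
print(host.discover())
print(host.call("calendar__search", {"query": "standup"})["content"][0]["text"])
```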

Implementation Recommendations

For organisations considering MCP adoption, research suggests starting with focused use cases before expanding to complex multi-system scenarios.

Getting Started Strategy:

- Begin with Microsoft’s Azure AI Foundry for managed MCP capabilities

- Connect existing documentation systems or APIs as initial proof of concept

- Establish governance frameworks and security policies early

- Leverage official MCP servers before developing custom implementations

- Plan for monitoring and audit requirements from the outset
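To illustrate the proof-of-concept step, the sketch below is a minimal MCP server built with the Python standard library only. It exposes a hypothetical in-memory documentation set through a single `search_docs` tool over the stdio transport. It handles `initialize`, `tools/list`, and `tools/call`, ignores notifications, and otherwise returns standard JSON-RPC error codes. It deliberately skips much of the specification: version negotiation is simplified, and resources, prompts, and cancellation are omitted. For anything beyond experimentation, the official MCP SDKs are the more practical starting point.

```python
from __future__ import annotations

import json
import sys
from typing import Any, Optional

# Hypothetical in-memory documentation set standing in for a real docs system.
DOCS = {
    "onboarding.md": "How to request laptop access and set up the VPN.",
    "expenses.md": "Submit expense reports within 30 days via the finance portal.",
}

SEARCH_TOOL = {
    "name": "search_docs",
    "description": "Case-insensitive keyword search over internal documentation.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}


def _result(msg_id: Any, result: dict[str, Any]) -> dict[str, Any]:
    return {"jsonrpc": "2.0", "id": msg_id, "result": result}


def _error(msg_id: Any, code: int, message: str) -> dict[str, Any]:
    return {"jsonrpc": "2.0", "id": msg_id, "error": {"code": code, "message": message}}


def handle(message: dict[str, Any]) -> Optional[dict[str, Any]]:
    """Dispatch one decoded JSON-RPC message; returns None for notifications."""
    if "id" not in message:
        return None  # e.g. notifications/initialized: no reply is sent
    msg_id, method = message["id"], message.get("method")
    params = message.get("params") or {}
    if method == "initialize":
        # Simplification: echo the client's version instead of negotiating properly.
        return _result(msg_id, {
            "protocolVersion": params.get("protocolVersion", "2025-03-26"),
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "docs-poc", "version": "0.1.0"},
        })
    if method == "tools/list":
        return _result(msg_id, {"tools": [SEARCH_TOOL]})
    if method == "tools/call":
        if params.get("name") != "search_docs":
            return _error(msg_id, -32602, f"Unknown tool: {params.get('name')}")
        query = str((params.get("arguments") or {}).get("query", "")).lower()
        hits = [name for name, text in DOCS.items() if query and query in text.lower()]
        text = "\n".join(hits) if hits else "No matching documents."
        return _result(msg_id, {"content": [{"type": "text", "text": text}], "isError": False})
    return _error(msg_id, -32601, f"Method not found: {method}")


def serve_stdio(stdin=sys.stdin, stdout=sys.stdout) -> None:
    """stdio transport: read one JSON message per line, write one reply per line."""
    for line in stdin:
        if not line.strip():
            continue
        try:
            message = json.loads(line)
        except json.JSONDecodeError:
            response = _error(None, -32700, "Parse error")
        else:
            if isinstance(message, dict):
                response = handle(message)
            else:
                response = _error(None, -32600, "Invalid Request")
        if response is not None:
            stdout.write(json.dumps(response) + "\n")
            stdout.flush()
```

To try it, a script could call `serve_stdio()` under `if __name__ == "__main__":` and then be registered as a local stdio server in an MCP-capable client.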

Success Factors:

- Integration with existing development workflows

- Clear governance and security frameworks

- Gradual expansion from simple to complex use cases

- Investment in team MCP expertise and training

Conclusion

The Model Context Protocol represents more than a technical standard; it signals the maturation of AI systems toward practical, enterprise-ready integration capabilities. With major industry players committing to MCP support and real-world deployments demonstrating clear value, the protocol appears positioned to become as fundamental to AI development as HTTP is to web applications.

Organisations that understand and implement MCP today will be better prepared to harness the full potential of tomorrow’s AI capabilities, while those that delay adoption risk falling behind in an increasingly AI-driven business landscape. The evidence suggests that MCP adoption is not a question of if, but when and how effectively organisations can integrate this transformative protocol into their AI strategies.